(Sub)Gradients and Convexity (contd)

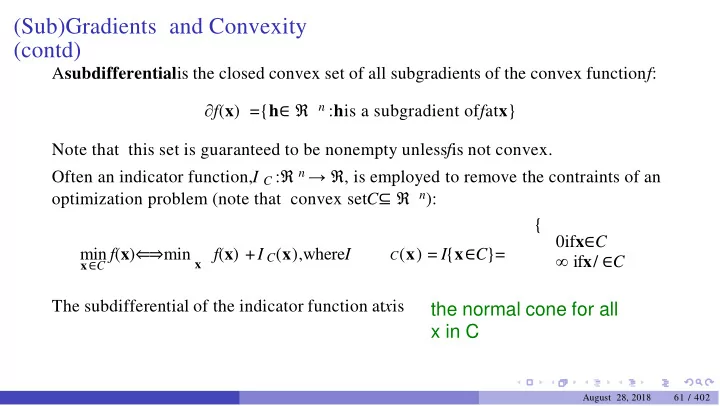

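A *subdifferential* is the closed convex set of all subgradients of the convex function $f$:

$$\partial f(x) = \{h \in \Re^n : h \text{ is a subgradient of } f \text{ at } x\}$$

For convex $f$, this set is guaranteed to be nonempty at every $x$ in the relative interior of the domain of $f$ (in particular, at every $x$ when $f$ is finite on all of $\Re^n$). For nonconvex $f$ it can be empty.

Often an indicator function $I_C : \Re^n \to \Re \cup \{\infty\}$ is employed to remove the constraints of an optimization problem (note that $C \subseteq \Re^n$ is a convex set):

$$\min_{x \in C} f(x) \iff \min_x \; f(x) + I_C(x), \quad \text{where } I_C(x) = I\{x \in C\} = \begin{cases} 0 & \text{if } x \in C \\ \infty & \text{if } x \notin C \end{cases}$$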
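The two definitions in this paragraph can be checked numerically in one dimension. The sketch below is illustrative only: `satisfies_subgradient_inequality` and `indicator` are hypothetical helper names, and testing the subgradient inequality $f(y) \ge f(x) + h(y - x)$ on a finite grid is a necessary check, not a proof. It recovers the known fact that $\partial |x|$ at $0$ is $[-1, 1]$, and shows that minimizing $f + I_C$ over the whole grid gives the same value as minimizing $f$ over $C$ alone.

```python
import math

def satisfies_subgradient_inequality(f, x, h, samples):
    """Sampled check of f(y) >= f(x) + h*(y - x) (1-D case).

    Passing on finitely many points does not prove h is a subgradient;
    failing at any point does prove it is not.
    """
    fx = f(x)
    return all(f(y) >= fx + h * (y - x) for y in samples)

def indicator(lo, hi):
    """Hypothetical helper: indicator I_C of the interval C = [lo, hi]."""
    return lambda x: 0.0 if lo <= x <= hi else math.inf

samples = [i / 100.0 for i in range(-300, 301)]  # grid on [-3, 3]

# Subdifferential of f(x) = |x| at x = 0 should be [-1, 1].
candidates = (-1.5, -1.0, 0.0, 0.5, 1.0, 1.5)
in_subdiff = [h for h in candidates
              if satisfies_subgradient_inequality(abs, 0.0, h, samples)]
print(in_subdiff)  # [-1.0, 0.0, 0.5, 1.0]

# Constrained problem: minimize f(x) = x^2 over C = [1, 3].
f = lambda x: x * x
I_C = indicator(1.0, 3.0)
constrained = min(f(x) for x in samples if 1.0 <= x <= 3.0)
# Unconstrained problem: minimize f(x) + I_C(x) over the whole grid.
penalized = min(f(x) + I_C(x) for x in samples)
print(constrained, penalized)  # 1.0 1.0
```

Because $I_C$ is $\infty$ outside $C$, every infeasible point is automatically ruled out of the unconstrained minimum, which is exactly why the two problems agree.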

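The subdifferential of the indicator function at any $x \in C$ is the normal cone of $C$ at $x$:

$$\partial I_C(x) = \mathcal{N}_C(x) = \{h \in \Re^n : h^\top (y - x) \le 0 \ \text{for all } y \in C\}$$

To see this, note that $h$ is a subgradient of $I_C$ at $x \in C$ if and only if $I_C(y) \ge I_C(x) + h^\top (y - x)$ for all $y$. For $y \notin C$ the left side is $\infty$, so the inequality holds trivially; for $y \in C$ it reads $0 \ge h^\top (y - x)$.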
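As a concrete instance of the normal cone, the sketch below tests membership $h \in \mathcal{N}_C(x)$ for the box $C = [0, 1]^2$. The function name `in_normal_cone_of_box` is hypothetical, and the box is an assumed example set. Because $y \mapsto h^\top (y - x)$ is linear and a box is a polytope, its maximum over $C$ is attained at a vertex, so checking the $2^n$ vertices is exact for this particular $C$ (it would not be for a general convex set).

```python
from itertools import product

def in_normal_cone_of_box(h, x, lo, hi):
    """Return True if h lies in N_C(x) for the box C = [lo, hi]^n, x in C.

    h is in N_C(x) iff h^T (y - x) <= 0 for every y in C. The left side
    is linear in y, so its maximum over the box is reached at a vertex,
    and checking the 2^n vertices suffices.
    """
    for y in product((lo, hi), repeat=len(x)):
        if sum(h_k * (y_k - x_k) for h_k, y_k, x_k in zip(h, y, x)) > 0:
            return False
    return True

cases = [
    ((1.0, 2.0), (1.0, 1.0)),   # corner: N_C is the nonnegative orthant
    ((-1.0, 0.0), (1.0, 1.0)),  # points back into C: not normal
    ((0.0, 0.0), (0.5, 0.5)),   # interior point: N_C = {0}
    ((1.0, 0.0), (0.5, 0.5)),   # any nonzero h fails in the interior
    ((1.0, 0.0), (1.0, 0.5)),   # edge x_1 = 1: N_C = {(t, 0) : t >= 0}
    ((1.0, 1.0), (1.0, 0.5)),   # has a component along the edge: fails
]
print([in_normal_cone_of_box(h, x, 0.0, 1.0) for h, x in cases])
# [True, False, True, False, True, False]
```

The pattern matches the picture: at an interior point the normal cone is $\{0\}$ (so $\partial I_C(x) = \{0\}$, as for a differentiable function with zero gradient), and it grows as $x$ moves onto an edge and then into a corner.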

August 28, 2018 61 / 402