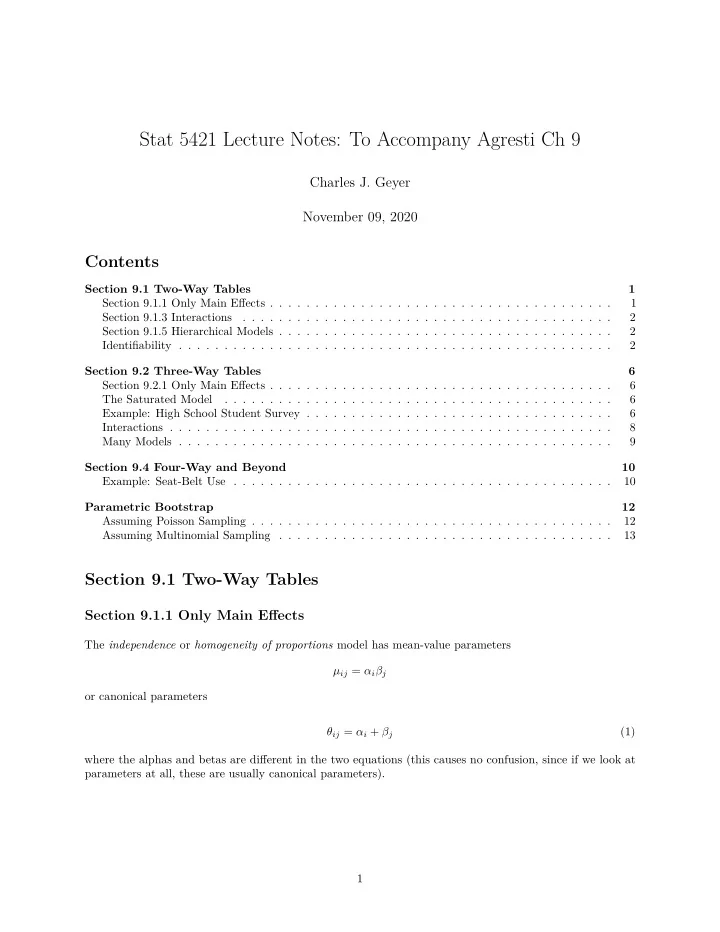

Stat 5421 Lecture Notes: To Accompany Agresti Ch 9

Charles J. Geyer

November 09, 2020

Contents

- Section 9.1 Two-Way Tables
  - Section 9.1.1 Only Main Effects
  - Section 9.1.3 Interactions
  - Section 9.1.5 Hierarchical Models
  - Identifiability
- Section 9.2 Three-Way Tables
  - Section 9.2.1 Only Main Effects
  - The Saturated Model
  - Example: High School Student Survey
  - Interactions
  - Many Models
- Section 9.4 Four-Way and Beyond
  - Example: Seat-Belt Use
- Parametric Bootstrap
  - Assuming Poisson Sampling
  - Assuming Multinomial Sampling

Section 9.1 Two-Way Tables

Section 9.1.1 Only Main Effects

The independence or homogeneity of proportions model has mean-value parameters

$$
\mu_{ij} = \alpha_i \beta_j
$$

or canonical parameters