SLIDE 18 Representer theorem for deep neural networks


(U. JMLR 2019)

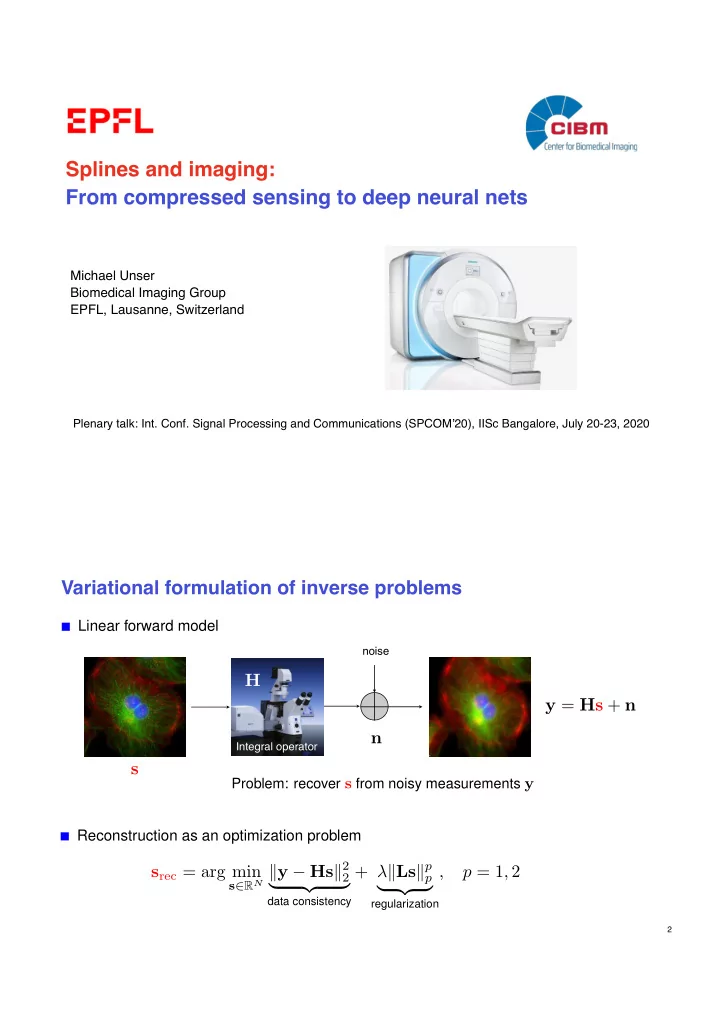

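Theorem ($\mathrm{TV}^{(2)}$-optimality of deep spline networks)

Consider a neural network $\mathbf{f} : \mathbb{R}^{N_0} \to \mathbb{R}^{N_L}$ with deep structure $(N_0, N_1, \dots, N_L)$:

$$\mathbf{x} \mapsto \mathbf{f}(\mathbf{x}) = \left(\boldsymbol{\sigma}_L \circ \boldsymbol{\ell}_L \circ \boldsymbol{\sigma}_{L-1} \circ \cdots \circ \boldsymbol{\ell}_2 \circ \boldsymbol{\sigma}_1 \circ \boldsymbol{\ell}_1\right)(\mathbf{x})$$

- normalized linear transformations $\boldsymbol{\ell}_\ell : \mathbb{R}^{N_{\ell-1}} \to \mathbb{R}^{N_\ell},\ \mathbf{x} \mapsto \mathbf{U}_\ell \mathbf{x}$ with weights $\mathbf{U}_\ell = [\mathbf{u}_{1,\ell} \cdots \mathbf{u}_{N_\ell,\ell}]^T \in \mathbb{R}^{N_\ell \times N_{\ell-1}}$ such that $\|\mathbf{u}_{n,\ell}\| = 1$

- free-form activations $\boldsymbol{\sigma}_\ell = (\sigma_{1,\ell}, \dots, \sigma_{N_\ell,\ell}) : \mathbb{R}^{N_\ell} \to \mathbb{R}^{N_\ell}$ with $\sigma_{1,\ell}, \dots, \sigma_{N_\ell,\ell} \in \mathrm{BV}^{(2)}(\mathbb{R})$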

Given a series of data points $(\mathbf{x}_m, \mathbf{y}_m)$, $m = 1, \dots, M$, we then define the training problem

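$$\arg\min_{(\mathbf{U}_\ell),\ (\sigma_{n,\ell} \in \mathrm{BV}^{(2)}(\mathbb{R}))} \left( \sum_{m=1}^{M} E\big(\mathbf{y}_m, \mathbf{f}(\mathbf{x}_m)\big) + \sum_{\ell=1}^{L} R_\ell(\mathbf{U}_\ell) + \lambda \sum_{\ell=1}^{L} \sum_{n=1}^{N_\ell} \mathrm{TV}^{(2)}(\sigma_{n,\ell}) \right) \tag{1}$$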

- $E : \mathbb{R}^{N_L} \times \mathbb{R}^{N_L} \to \mathbb{R}^+$: arbitrary convex error function

- $R_\ell : \mathbb{R}^{N_\ell \times N_{\ell-1}} \to \mathbb{R}^+$: convex cost

If a solution of (1) exists, then it is achieved by a deep spline network with activations of the form

$$\sigma_{n,\ell}(x) = b_{1,n,\ell} + b_{2,n,\ell}\,x + \sum_{k=1}^{K_{n,\ell}} a_{k,n,\ell}\,(x - \tau_{k,n,\ell})_+,$$

with adaptive parameters $K_{n,\ell} \le M - 2$, $\tau_{1,n,\ell}, \dots, \tau_{K_{n,\ell},n,\ell} \in \mathbb{R}$, and $b_{1,n,\ell}, b_{2,n,\ell}, a_{1,n,\ell}, \dots, a_{K_{n,\ell},n,\ell} \in \mathbb{R}$.
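As a rough illustration of the activation form in the theorem, the following Python sketch evaluates one linear-spline neuron and one layer with unit-norm weight rows. The function names (`spline_activation`, `normalize_rows`, `layer`) and the dict-based parameter layout are assumptions made for this example; they do not come from the source.

```python
import math

def spline_activation(x, b1, b2, a, tau):
    """Evaluate sigma(x) = b1 + b2*x + sum_k a[k] * (x - tau[k])_+ ."""
    return b1 + b2 * x + sum(ak * max(x - tk, 0.0) for ak, tk in zip(a, tau))

def normalize_rows(U):
    """Rescale every row u_n of U so that its Euclidean norm equals 1."""
    out = []
    for row in U:
        norm = math.sqrt(sum(w * w for w in row))
        out.append([w / norm for w in row])
    return out

def layer(x, U, neurons):
    """Apply x -> sigma(U x), with one spline activation per output neuron.

    `neurons` is a list of dicts with keys b1, b2, a, tau (hypothetical layout).
    """
    z = [sum(u * xi for u, xi in zip(row, x)) for row in U]
    return [spline_activation(zn, **p) for zn, p in zip(z, neurons)]

# A ReLU is the special case with a single knot at 0 and no linear part.
relu = dict(b1=0.0, b2=0.0, a=[1.0], tau=[0.0])
U = normalize_rows([[3.0, 4.0]])          # becomes [[0.6, 0.8]]
y = layer([1.0, 1.0], U, [relu])          # one output neuron
```

With `b1 = 0`, `b2 = -1`, `a = [2.0]`, `tau = [0.0]` the same function reproduces the absolute value, which shows how a single knot plus the linear term already covers several familiar activations.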

Outcome of representer theorem


Link with $\ell_1$-minimization techniques

$$\mathrm{TV}^{(2)}(\sigma_{n,\ell}) = \sum_{k=1}^{K_{n,\ell}} |a_{k,n,\ell}| = \|\mathbf{a}_{n,\ell}\|_1$$
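The identity above can be checked numerically on a small example. The sketch below computes the $\ell_1$ norm of the knot coefficients and compares it with the total variation of the derivative, obtained by summing the slope jumps between consecutive linear pieces. It assumes at least one knot and distinct knot locations (with coinciding knots, coefficients could cancel); the helper names are hypothetical.

```python
def tv2(a):
    """l1 norm of the knot coefficients, i.e. TV^(2) of the linear spline."""
    return sum(abs(ak) for ak in a)

def slope(x, b2, a, tau):
    """Derivative of the spline at a point x that is not a knot."""
    return b2 + sum(ak for ak, tk in zip(a, tau) if x > tk)

def tv_of_derivative(b2, a, tau):
    """Sum of absolute slope jumps across the knots (knots assumed distinct)."""
    knots = sorted(tau)
    # One probe point strictly inside each linear piece.
    probes = [knots[0] - 1.0]
    probes += [(left + right) / 2 for left, right in zip(knots, knots[1:])]
    probes += [knots[-1] + 1.0]
    slopes = [slope(p, b2, a, tau) for p in probes]
    return sum(abs(s1 - s0) for s0, s1 in zip(slopes, slopes[1:]))

a, tau, b2 = [1.0, -2.0, 0.5], [-1.0, 0.0, 2.0], 0.3
lhs = tv2(a)
rhs = tv_of_derivative(b2, a, tau)
```

Note that the linear part (`b1`, `b2`) does not contribute: it lies in the null space of the second derivative, so only the slope changes at the knots are penalized.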

Each neuron (fixed index $(n, \ell)$) is characterized by

- its number $0 \le K_{n,\ell}$ of knots (ideally, much smaller than $M$);

- the locations $\{\tau_k = \tau_{k,n,\ell}\}_{k=1}^{K_{n,\ell}}$ of these knots (ReLU biases);

- the expansion coefficients $\mathbf{b}_{n,\ell} = (b_{1,n,\ell}, b_{2,n,\ell}) \in \mathbb{R}^2$ and $\mathbf{a}_{n,\ell} = (a_{1,n,\ell}, \dots, a_{K,n,\ell}) \in \mathbb{R}^K$.

These parameters (including the number of knots) are data-dependent and adjusted automatically during training.
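One standard way an $\ell_1$ penalty can reduce the number of knots is proximal soft-thresholding of the coefficients followed by pruning of the knots whose coefficient becomes zero. The sketch below illustrates that generic mechanism only; it is a swapped-in technique, not the training procedure described in the source, and the names `soft_threshold` and `shrink_and_prune` as well as the threshold `t` are assumptions.

```python
def soft_threshold(v, t):
    """Proximal operator of t * |v|: shrink v toward zero by t."""
    if v > t:
        return v - t
    if v < -t:
        return v + t
    return 0.0

def shrink_and_prune(a, tau, t):
    """Shrink the knot coefficients and drop knots whose coefficient hits zero."""
    shrunk = [(soft_threshold(ak, t), tk) for ak, tk in zip(a, tau)]
    kept = [(ak, tk) for ak, tk in shrunk if ak != 0.0]
    return [ak for ak, _ in kept], [tk for _, tk in kept]

# The middle knot has a small coefficient and is removed by the shrinkage step.
a_new, tau_new = shrink_and_prune([1.0, -0.05, 0.3], [-1.0, 0.0, 2.0], t=0.1)
```

In this toy run the neuron goes from three knots to two, which mirrors the idea that the effective $K_{n,\ell}$ is determined by the data and the regularization strength rather than fixed in advance.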
