January 10, 2017 Sam Siewert, AIAA SciTech 2017 - Fusion of Networked Sensor Systems

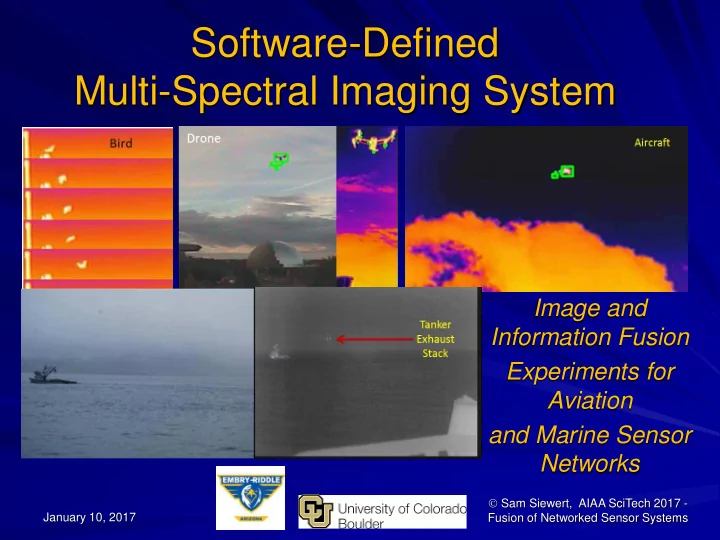

Software-Defined Multi-Spectral Imaging System: Image and Information Fusion Experiments for Aviation and Marine Sensor Networks

Goals and Objectives

Feature Rich Software Defined Multi-Spectral Imaging System

– Geo-1: Southwestern Sonoran Desert, Colorado Plateau
– Geo-2: Alaska and US Arctic Environments
– In-situ monitors (rooftops, buoys, poles)
– Share and Add Geos
– Light Aircraft (ERAU RV-12)
– Marine Vessel and UAS Detection, Tracking, Classification, Identification

Complementary High Spatial, Temporal, Spectral Resolution

– Specific Geolocations, Campus, Airport, Marine Port
– Complements Satellite Remote Sensing
– Cooperative ADS-B and S-AIS
– Active RADAR/LIDAR Systems
– Adds EO/IR and Acoustic Passive Sensing to Existing Active Systems
– Enhance Information Aggregation (flightradar24.com, MarineTraffic.com)
– Networked Instruments with Image Fusion for Information Fusion
– Low Power (Battery or Fuel Cell Extended Operation) < 10 Watts Peak

Low-Cost, Simplified Use Sensor Fusion Instrument - Open Reference

Detect, Track, Classify and Identify Aerial and Marine Objects

– Determine Performance Methodology for EO/IR and Fusion Sensor Networks
– Compare Candidate Methods to Baseline

2015/16 – ADAC & ERAU Sponsored

UAA – ADAC, SmartCam

ERAU (Undergraduate Research Team)

– Sam Siewert, PI, Assistant Prof.
– Demi Matthew Vis – AE/SE Student
– Ryan Claus – SE Student
– Nicholas DiPinto – SE Student
– Arctic Power Team – Power Team Poster

CU Boulder – Embedded Systems Engineering Graduate Program

– Ram Krishnamurthy – MS EE
– Surjith Singh – MS, ESE
– Akshay Singh – ME, ESE
– Shivasankar Gunasekaran – ME, ESE
– Swaminath Badrinath – ME, ESE

Industry Advising/Collaboration Participants

– Randall Myers, Mentor Graphics

This material is based upon work supported by the U.S. Department of Homeland Security under Grant Award Number DHS-14-ST-061-COE-001A-02. The views and conclusions contained in this document are those of the authors and should not be interpreted as necessarily representing the official policies, either expressed or implied, of the U.S. Department of Homeland Security.

2016/17 Team – ERAU Sponsored

ERAU – Drone Net

– Sam Siewert, PI, Assistant Prof.
– Demi Matthew Vis – AE/SE Student
– Ryan Claus – SE Student

CU Boulder – Embedded Systems Graduate

– Ram Krishnamurthy – MS EE
– Surjith Singh – MS, ESE
– Akshay Singh – ME, ESE
– Shivasankar Gunasekaran – ME, ESE
– Omkar Seelam – ME, ESE

Industry Advising/Collaboration Participants

– Randall Myers, Mentor Graphics (PCB, CAD, Systems)
– Joe Butler, Intel Corporation (IoT)

Open Reference SDMSI Configuration

– 2 Basler Pulse Visible Cameras
– 1 FLIR Vue LWIR Camera with ZnSe Window
– Jetson TK1, Panda Wireless, USB3 Hub, Power, NEMA Enclosure

USCG – Arctic Shield

Potential SDMSI Buoy and Pole Mounts to Enhance AIFC (Arctic Information Fusion Concept)

http://www.uscg.mil/d17/ArcticShield/Documents/USCG%20Arctic%20operations.pdf

Smart Camera Deployment - Marine

– Land Towers (Light Stations, Ports, Weather Stations)
– Self-Powered Ocean Buoys
– Mast mounted on Vessels

http://www.oceanpowertechnologies.com/
http://www.esrl.noaa.gov/gmd/obop/brw/
http://www.uscg.mil/d17/cgcspar/

Smart Camera Deployment - Aerial

– UAV Systems - ERAU ICARUS Group
– Experimental Aviation and Small Aircraft - ERAU
– Kite Aerial Photography, Balloon Missions (ERAU, UAA, CU Boulder)

Sam Siewert – ERAU ICARUS Group

Actual - Roof Mount Experiment

– Starting point – evolve to aircraft, buoy and UAS later
– Embry Riddle flight line provides lots of light aircraft traffic
– Simple UAS testing in Campus (semi-Urban) environment
– Wildlife – insects, bats, birds, etc.

Information Fusion Concepts

– Integration and System of Systems Between ADS-B and S-AIS for Vessel / Aircraft / UAS Awareness
– Smart Cameras Can Monitor and Plan Uplink Opportunity as Well as Wake up and Uplink

System Fusion For Uplink

Ice Detection/Tracking Feasibility Tests

Clear Segmentation of Ice, Rock, Water, Drainage over Rocks, Vegetation – As Expected for 14 micron LWIR

Melt-water drainage

Preliminary Ice Tracking Feasibility

– Bergs of small size easily segmented for detection and tracking
– High contrast to water (air @ 63-52F 7/10/15)

Preliminary Vessel Tracking Feasibility

– Good detection of engines and exhaust in fog
– Idle or adrift vessels harder to detect than underway (active)

Exhaust stacks for Tanker at TAP ??
25mm Athermal Lens - LWIR
25mm Visible
200mm DSLR Visible

Visibility of Thermal Features in Fog

– Hot-spots (engines, exhaust, cabin, lights) segment well
– Improve with Common Intrinsic/Extrinsic Characteristics and Image Fusion
– Valdez Harbor, Alaska

– Vessel Detection, Tracking, Identification at Ports, Light Stations, and in Straits
– Integrate with Arctic Information Fusion Concept (S-AIS)

Feasibility Testing in Marine Domain

- Marguerite Ace Leaves Long Beach
- HD visible imaging of departures and transits with ID
- LWIR night/fog detection and tracking
- Correlation to S-AIS and DBMS
- (Field Test – June 2015, Long Beach)

Detect bodies in the water, Port trespassing, Complements USCG Aircraft FLIR Systems

Feasibility for SAR Ops / Port Security

Surfers in the Water

– Hand-held, Cutter Mounted, Buoys
– Complements Existing Helicopter and C130 FLIR
– (Field Test – June 2015, Malibu)

Trespassers at Night Shown on Jetty

– Hand-held, Port Drop-in-Place, Buoys
– Complements Existing Security Off-Grid Installations
– (Field Test – June 2015, San Pedro)

Trespassers at Night (Motion, Audio Cues)

Conceptual Configuration

– Panchromatic, NIR, RGB
– LWIR
– Many multi-spectral focal planes …
– Jetson Tegra X1 With GP-GPU Co-Processing
– Saliency & Behavioral Assessment
– Thermal Fusion Assessment
– 2D/3D Spatial Assessment
– Cloud Analytics and Machine Learning
– Flash SD Card (local database)

Experimental System Block Diagram

– 2 Watts at Idle, Plus 1.5 Watts per Camera = 6.5W
– E.g. Sobel, 30Hz, 20 Mega Pixels/Sec/Watt, 7.3W Peak – SPIE Sensor Tech + Apps
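The power figure above can be checked with simple arithmetic. The sketch below assumes three cameras (two visible plus one LWIR, as in the open reference configuration) and compares the result against the < 10 Watts peak goal; the function and constant names are illustrative and do not come from the slides.

```python
# Power budget sketch: idle power plus a fixed cost per attached camera.
# The camera count of 3 is an assumption (2 visible + 1 LWIR).

PEAK_LIMIT_W = 10.0  # design goal stated in the deck: < 10 Watts peak


def camera_power_budget(idle_w: float, per_camera_w: float, cameras: int) -> float:
    """Return estimated power draw in watts for a given camera count."""
    return idle_w + per_camera_w * cameras


budget_w = camera_power_budget(idle_w=2.0, per_camera_w=1.5, cameras=3)
print(budget_w)                  # 6.5
print(budget_w < PEAK_LIMIT_W)   # True
```

The same function can be used to see how many cameras fit under the peak goal before processing load is added.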

1) Sync’d Capture
2) Resolution Match
3) Image Registration
4) Detection
5) Classification
6) Identification

Detection Experiments for Aircraft and UAS

Preliminary Roof-top Field Trials at ERAU Prescott

Baseline Motion Trigger Detection

– Difference Images over Time (adjustable)
– Threshold - Statistically Significant Pixel Change
– Filters (Atmospheric, Cloud, Constant Background Motion) – Dispersion of Changes
– Detection Performance – ROC, PR-Curve, F-measure [TP, FP, FN, TN analysis]
– Classification/Identification - Confusion Matrix
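A minimal sketch of a motion trigger of this kind, not the presented implementation: frames are differenced, pixels whose change exceeds the mean plus k standard deviations of the difference image are kept, and the trigger is rejected when the changed pixels are widely dispersed (as clouds or atmospheric shimmer might be). The parameters `k`, `min_pixels`, and `max_dispersion` are hypothetical tuning values, and frames are plain lists of grayscale rows to keep the example self-contained.

```python
from statistics import mean, pstdev


def changed_pixels(prev, curr, k=3.0):
    """Return (row, col) of pixels whose change exceeds mean + k*std of the difference image."""
    diffs = [[abs(c - p) for p, c in zip(prow, crow)] for prow, crow in zip(prev, curr)]
    flat = [d for row in diffs for d in row]
    threshold = mean(flat) + k * pstdev(flat)
    return [(r, c) for r, row in enumerate(diffs) for c, d in enumerate(row) if d > threshold]


def dispersion(points):
    """Spatial spread of changed pixels: RMS distance from their centroid."""
    if not points:
        return 0.0
    centre_r = mean(r for r, _ in points)
    centre_c = mean(c for _, c in points)
    return mean((r - centre_r) ** 2 + (c - centre_c) ** 2 for r, c in points) ** 0.5


def motion_trigger(prev, curr, k=3.0, min_pixels=2, max_dispersion=3.0):
    """Trigger on a compact cluster of significant changes; ignore scattered ones."""
    points = changed_pixels(prev, curr, k)
    return len(points) >= min_pixels and dispersion(points) <= max_dispersion


# Usage: an 8x8 background with a compact 2x2 bright target appearing.
background = [[10] * 8 for _ in range(8)]
target = [row[:] for row in background]
for r, c in [(3, 3), (3, 4), (4, 3), (4, 4)]:
    target[r][c] = 200
print(motion_trigger(background, target))      # True
print(motion_trigger(background, background))  # False
```

The time between differenced frames (the "adjustable" interval on the slide) is set by which two frames the caller passes in.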

https://en.wikipedia.org/wiki/Precision_and_recall

– PR best for Image Retrieval, e.g. https://images.google.com/
– ROC best for Target Detection
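For reference, the quantities behind PR and ROC analysis follow directly from the four frame-level counts. The sketch below uses the standard definitions; the example counts are made up for illustration and are not results from these experiments.

```python
def detection_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Compute precision, recall (true positive rate), false positive rate and F-measure."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0          # y-axis of ROC, x-axis of PR
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0  # x-axis of ROC
    f_measure = (
        2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    )
    return {
        "precision": precision,
        "recall": recall,
        "fpr": false_positive_rate,
        "f_measure": f_measure,
    }


# Hypothetical counts from a frame-by-frame review (illustrative only).
metrics = detection_metrics(tp=8, fp=2, fn=2, tn=88)
print(metrics)
```

Sweeping the detector threshold and recomputing these values at each setting yields the points of a ROC curve (recall against false positive rate) or a PR curve (precision against recall).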

Frame by Frame Analysis

– TP – Determined by Human Review Frame by Frame
– Alternative is by Physical Experiment Design
– “Autoit” Program to Analyze

Aircraft Detection Performance - Baseline

Video Links – Aircraft, Bugs, FP, TP+FP, [TN], [Full]

UAS Detection Performance – Baseline

Video Link – UAS+Aircraft, Bugs, FP, TP+FP, [TN], [Full]

Candidate SOD (BinWang14) - Aircraft

– Modified to Run BinWang14 SOD => MD Baseline
– Video Links – TP+FP, [TN], [Full]

Candidate SOD (BinWang14) - UAS

– Modified to Run BinWang14 SOD => MD Baseline
– Video Links – TP+FP, [TN], [Full]

Search and/or Development of UAS & Aircraft SOD + Classifier + Identification

– Likely Requires Custom Detection – SOD
– Classification Based on Shape, Behavior and Contrast/Color/Texture in Multiple Bands (RGB, NIR, LWIR)
– Considering Acoustic Cue Fusion
– Cross Check with ADS-B, RADAR/LIDAR Data
– Produce Improved flightradar24.com Meta-data
– Find Ghost UAS and Aircraft [Non-compliant], Log Others
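One way the ADS-B cross check could work is sketched below, under simplifying assumptions: camera detections and ADS-B reports are both already expressed as a time, azimuth, and elevation relative to the camera site, and a detection with no ADS-B report within tolerance is flagged as a "ghost". The `Track` type, the tolerances, and the sample values are all hypothetical; real ADS-B messages carry latitude, longitude, and altitude and would need conversion to camera-relative angles first.

```python
from dataclasses import dataclass


@dataclass
class Track:
    t: float          # observation time, seconds
    azimuth: float    # degrees clockwise from north, as seen from the camera
    elevation: float  # degrees above the horizon


def angular_gap(a: float, b: float) -> float:
    """Smallest absolute difference between two azimuths, handling the 0/360 wrap."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def find_ghosts(detections, adsb_reports, max_dt=2.0, max_az=5.0, max_el=5.0):
    """Return camera detections that no ADS-B report explains (candidate non-compliant objects)."""
    ghosts = []
    for det in detections:
        matched = any(
            abs(det.t - rep.t) <= max_dt
            and angular_gap(det.azimuth, rep.azimuth) <= max_az
            and abs(det.elevation - rep.elevation) <= max_el
            for rep in adsb_reports
        )
        if not matched:
            ghosts.append(det)
    return ghosts


# Usage with made-up values: the first detection lines up with an ADS-B report,
# the second has no cooperative counterpart.
detections = [Track(10.0, 359.0, 20.0), Track(12.0, 90.0, 15.0)]
adsb_reports = [Track(10.5, 2.0, 21.0)]
ghosts = find_ghosts(detections, adsb_reports)
print(ghosts)
```

Matched detections would be logged with their ADS-B identity, which corresponds to the "Log Others" item above.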

Needs Debugging – Literally!

– Many Insects Detected in Visible to LWIR
– Opportunity to work on Bird / Aviation Interaction Testing

Summary and Future Work

Methods to Evaluate UAS/Aircraft Shared NAS Instruments (EO/IR)

Open Reference Design to Replicate (HW, FW, SW)

Bench Testing – 2 Watts Idle, < 10 Watts Peak Operation

Detection Performance Baseline to Compare To

– Test Candidate SOD Algorithms
– Deep Learning ANN
– Research Customized SOD

Please Download our Benchmarks, Detectors, Test Cases

– https://github.com/siewertserau/fusion_coproc_benchmarks
– https://github.com/siewertserau/EOIR_detection
– http://mercury.pr.erau.edu/~siewerts/extra/papers/AIAA-SDMSI-data-2017/

Open Source Hardware, Firmware, Software for Multispectral EO/IR and Information Fusion Applications

Build a Drone Net – Campus, Port and at Multiple Geos!

Backup Slides and References

References

1. S. Siewert, V. Angoth, R. Krishnamurthy, K. Mani, K. Mock, S. B. Singh, S. Srivistava, C. Wagner, R. Claus, M. Demi Vis, “Software Defined Multi-Spectral Imaging for Arctic Sensor Networks”, SPIE Algorithms and Technologies for Multispectral, Hyperspectral, and Ultraspectral Imagery XXII, Baltimore, Maryland, April 2016.

2. S. Siewert, J. Shihadeh, Randall Myers, Jay Khandhar, Vitaly Ivanov, “Low Cost, High Performance and Efficiency Computational Photometer Design”, SPIE Sensing Technology and Applications, SPIE Proceedings, Volume 9121, Baltimore, Maryland, May 2014.

3. Piella, G. (2003). A general framework for multiresolution image fusion: from pixels to regions. Information fusion, 4(4), 259-280.

4. Blum, R. S., & Liu, Z. (Eds.). (2005). Multi-sensor image fusion and its applications. CRC press.

5. Liu, Z., Blasch, E., Xue, Z., Zhao, J., Laganiere, R., & Wu, W. (2012). Objective assessment of multiresolution image fusion algorithms for context enhancement in night vision: a comparative study. Pattern Analysis and Machine Intelligence, IEEE Transactions on, 34(1), 94-109.

6. Simone, G., Farina, A., Morabito, F. C., Serpico, S. B., & Bruzzone, L. (2002). Image fusion techniques for remote sensing applications. Information fusion, 3(1), 3-15.

7. Mitchell, H. B. (2010). Image fusion: theories, techniques and applications. Springer Science & Business Media.

8. Szeliski, R. (2010). Computer vision: algorithms and applications. Springer Science & Business Media.

9. Sharma, G., Jurie, F., & Schmid, C. (2012, June). Discriminative spatial saliency for image classification. In Computer Vision and Pattern Recognition (CVPR), 2012 IEEE Conference on (pp. 3506-3513). IEEE.

10. Toet, A. (2011). Computational versus psychophysical bottom-up image saliency: A comparative evaluation study. Pattern Analysis and Machine Intelligence, IEEE Transactions on, 33(11), 2131-2146.

11. Valenti, R., Sebe, N., & Gevers, T. (2009, September). Image saliency by isocentric curvedness and color. In Computer Vision, 2009 IEEE 12th International Conference on (pp. 2185-2192). IEEE.

12. Wang, M., Konrad, J., Ishwar, P., Jing, K., & Rowley, H. (2011, June). Image saliency: From intrinsic to extrinsic context. In Computer Vision and Pattern Recognition (CVPR), 2011 IEEE Conference on (pp. 417-424). IEEE.

13. Liu, F., & Gleicher, M. (2006, July). Region enhanced scale-invariant saliency detection. In Multimedia and Expo, 2006 IEEE International Conference on (pp. 1477-1480). IEEE.

14. Cheng, M. M., Mitra, N. J., Huang, X., & Hu, S. M. (2014). Salientshape: Group saliency in image collections. The Visual Computer, 30(4), 443-453.

15. http://global.digitalglobe.com/sites/default/files/DG_WorldView2_DS_PROD.pdf

16. http://www.spaceimagingme.com/downloads/sensors/datasheets/DG_WorldView3_DS_2014.pdf

17. Richards, Mark A., James A. Scheer, and William A. Holm. Principles of modern radar. SciTech Pub., 2010.

18. Brown, Christopher D., and Herbert T. Davis. "Receiver operating characteristics curves and related decision measures: A tutorial." Chemometrics and Intelligent Laboratory Systems 80.1 (2006): 24-38.

19. Wang, Bin, and Piotr Dudek. "A fast self-tuning background subtraction algorithm." Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops. 2014.

20. Panagiotakis, Costas, et al. "Segmentation and sampling of moving object trajectories based on representativeness." IEEE Transactions on Knowledge and Data Engineering 24.7 (2012): 1328-1343.

21. Public SDMSI shared data web site for video sequences captured and used in two experiments presented in this paper - http://mercury.pr.erau.edu/~siewerts/extra/papers/AIAA-SDMSI-data-2017/

22. Perazzi, Federico, et al. "Saliency filters: Contrast based filtering for salient region detection." Computer Vision and Pattern Recognition (CVPR), 2012 IEEE Conference on. IEEE, 2012.

23. Achanta, Radhakrishna, et al. "SLIC superpixels compared to state-of-the-art superpixel methods." IEEE transactions on pattern analysis and machine intelligence 34.11 (2012): 2274-2282.

24. Hou, Xiaodi, and Liqing Zhang. "Saliency detection: A spectral residual approach." 2007 IEEE Conference on Computer Vision and Pattern Recognition. IEEE, 2007.

25. Global Contrast based Salient Region Detection. Ming-Ming Cheng, Niloy J. Mitra, Xiaolei Huang, Philip H. S. Torr, Shi-Min Hu. IEEE Transactions on Pattern Analysis and Machine Intelligence (IEEE TPAMI), 37(3), 569-582, 2015.

26. flightradar24.com, ADS-B, primary/secondary RADAR flight localization and aggregation services.

27. Birch, Gabriel Carisle, John Clark Griffin, and Matthew Kelly Erdman. UAS Detection Classification and Neutralization: Market Survey 2015. No. SAND2015-6365. Sandia National Laboratories (SNL-NM), Albuquerque, NM (United States), 2015.