Simple Linear Regression


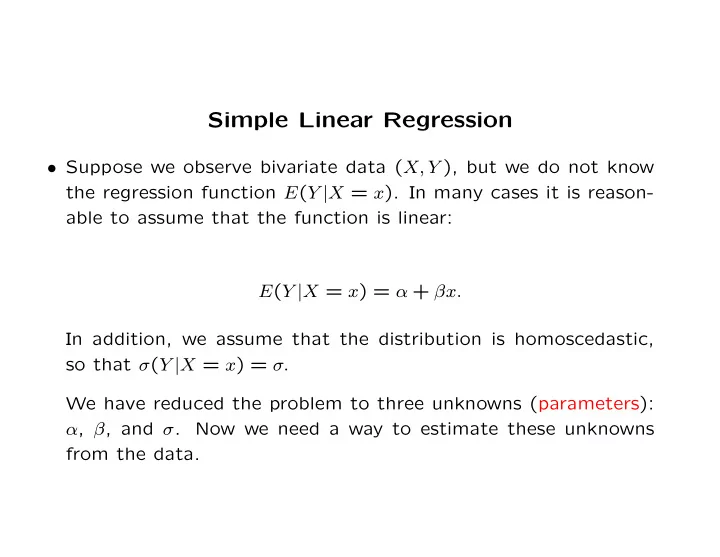

Suppose we observe bivariate data $(X, Y)$, but we do not know the regression function $E(Y \mid X = x)$. In many cases it is reasonable to assume that the function is linear: $E(Y \mid X = x) = \alpha + \beta x$.
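As a minimal sketch of what assuming a linear regression function means in practice, the Python below estimates an intercept and slope from bivariate data and computes residuals against the fitted values. The estimation method (ordinary least squares), the names `fit_line`, `residuals`, `alpha_hat` and `beta_hat`, and the simulated data with its "true" values are all assumptions made for illustration. None of them come from the slides.

```python
import random


def fit_line(xs, ys):
    """Least-squares estimates (alpha_hat, beta_hat) for a line alpha + beta * x.

    Assumes ordinary least squares as the fitting method (an illustrative choice).
    """
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Sum of cross-products and sum of squares about the means.
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    beta_hat = sxy / sxx
    alpha_hat = y_bar - beta_hat * x_bar
    return alpha_hat, beta_hat


def residuals(xs, ys, alpha_hat, beta_hat):
    """Observed y minus fitted value alpha_hat + beta_hat * x, for each point."""
    return [y - (alpha_hat + beta_hat * x) for x, y in zip(xs, ys)]


# Simulated data: the true intercept, slope and noise level are hypothetical.
rng = random.Random(0)
true_alpha, true_beta = 1.0, 2.0
xs = [rng.uniform(0, 2) for _ in range(200)]
ys = [true_alpha + true_beta * x + rng.gauss(0, 0.5) for x in xs]

alpha_hat, beta_hat = fit_line(xs, ys)
res = residuals(xs, ys, alpha_hat, beta_hat)
print(f"alpha_hat={alpha_hat:.3f}, beta_hat={beta_hat:.3f}")
```

Plotting `res` against the fitted values `alpha_hat + beta_hat * x` is one common way to check whether the linearity assumption looks plausible for a given data set.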

[Figures omitted. Recoverable labels: several plots with axes "Residuals" and "Fitted values"; a plot with axis "Normal Quantile"; and a plot with legend entries "Non outliers", "2 SD outliers", "3 SD outliers", and "Ann Arbor, MI".]