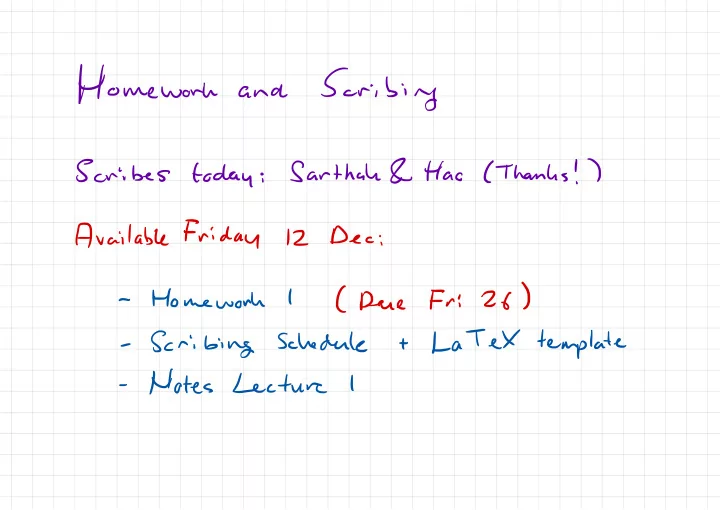

SLIDE 1 Homework and Scribing

Scribes today: Sarthah & Hao (Thanks!)

Available Friday 12 Dec:

- Homework

- Scribing

- Notes