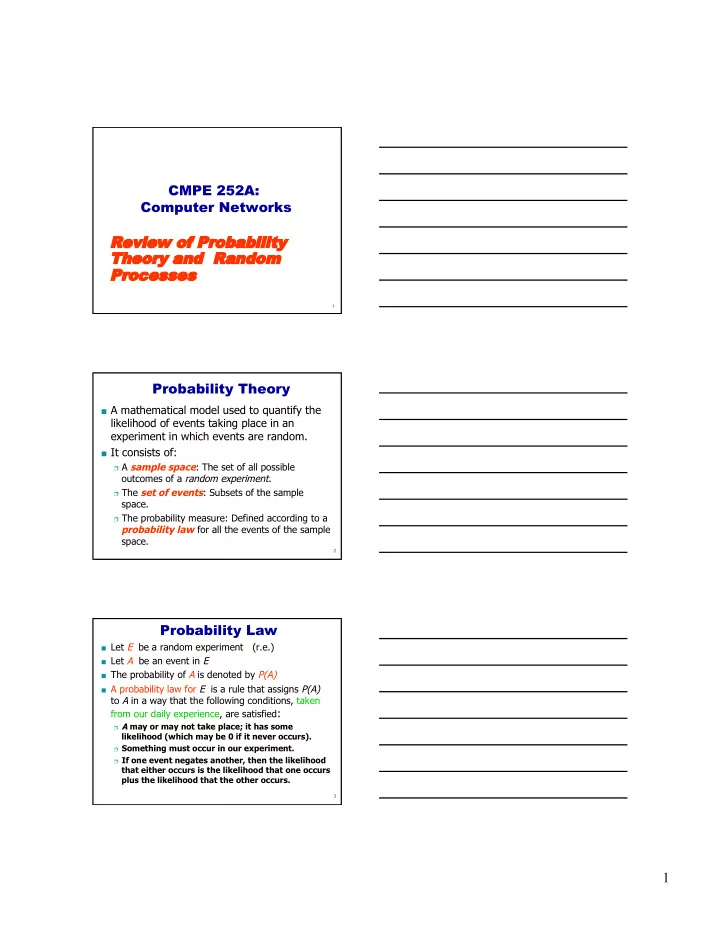

CMPE 252A: Computer Networks

Review of Probability Theory and Random Processes

Probability Theory

A mathematical model used to quantify the likelihood of events taking place in an experiment in which events are random.

It consists of:

- A sample space: The set of all possible outcomes of a random experiment.
- The set of events: Subsets of the sample space.
- The probability measure: Defined according to a probability law for all the events of the sample space.
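
The three components above can be sketched for a small, concrete experiment. The example below is illustrative only: it assumes one roll of a six-sided die with equally likely outcomes, and the names `sample_space`, `all_events`, and `prob` are hypothetical, not part of the course material.

```python
from fractions import Fraction
from itertools import combinations

# Sample space: all possible outcomes of the random experiment
# (assumed here to be one roll of a six-sided die).
sample_space = frozenset(range(1, 7))


def all_events(space):
    """Return every subset of the sample space, i.e. the set of events."""
    items = sorted(space)
    return [
        frozenset(subset)
        for size in range(len(items) + 1)
        for subset in combinations(items, size)
    ]


def prob(event, space=sample_space):
    """Probability measure, assuming all outcomes are equally likely."""
    if not event <= space:
        raise ValueError("an event must be a subset of the sample space")
    return Fraction(len(event), len(space))


events = all_events(sample_space)
even = frozenset({2, 4, 6})  # the event "the roll is even"

print(len(events))         # number of events (subsets of the sample space)
print(prob(even))          # probability of an even roll
print(prob(sample_space))  # probability that some outcome occurs
```

Using `Fraction` keeps the probabilities exact. The equally-likely assumption is only one possible probability law; any other assignment that satisfies the conditions of a probability law would serve equally well.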

Probability Law

Let E be a random experiment (r.e.).

Let A be an event in E.

The probability of A is denoted by P(A).

A probability law for E is a rule that assigns P(A) to A in such a way that the following conditions, taken from our daily experience, are satisfied:

- A may or may not take place; it has some likelihood (which may be 0 if it never occurs).
- Something must occur in our experiment.
- If one event negates another, then the likelihood