SLIDE 1

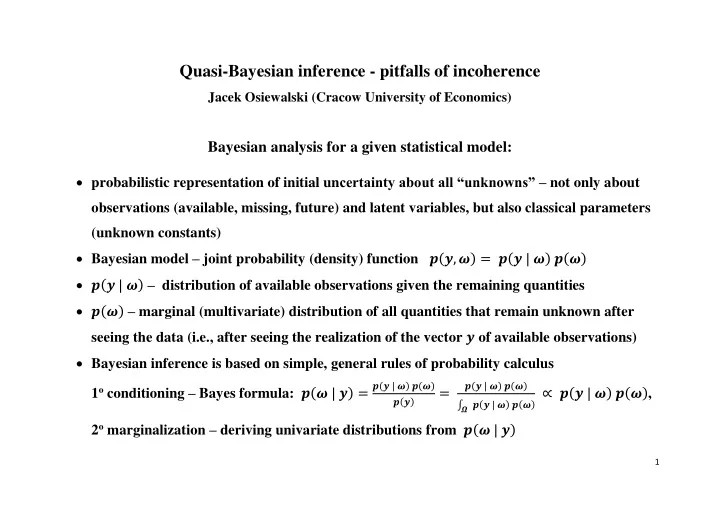

Quasi-Bayesian inference - pitfalls of incoherence

Jacek Osiewalski (Cracow University of Economics)

Bayesian analysis for a given statistical model:

probabilistic representation of initial uncertainty about all “unknowns” – not only about observations (available, missing, future) and latent variables, but also classical parameters (unknown constants)

Bayesian model – joint probability (density) function $p(y, \omega) = p(y \mid \omega)\, p(\omega)$

$p(y \mid \omega)$ – distribution of available observations given the remaining quantities

$p(\omega)$ – marginal (multivariate) distribution of all quantities that remain unknown after seeing the data (i.e., after seeing the realization of the vector $y$ of available observations)

Bayesian inference is based on simple, general rules of probability calculus

1° conditioning – Bayes formula:

$$p(\omega \mid y) = \frac{p(y \mid \omega)\, p(\omega)}{p(y)} = \frac{p(y \mid \omega)\, p(\omega)}{\int_{\Omega} p(y \mid \omega)\, p(\omega)\, \mathrm{d}\omega} \propto p(y \mid \omega)\, p(\omega),$$

2° marginalization – deriving univariate distributions from $p(\omega \mid y)$

SLIDE 2

“Coherent inference” – the one following strict rules of probability calculus

Quasi-Bayesian inference:

Bayes formula used mechanically, outside the full probabilistic context – incoherence!

$p(y \mid \omega) = g(y; \omega)$ corresponds to some traditional statistical model

$p(\omega) = f(\omega; y)$ is specified using the given $y$, so it cannot be the marginal distribution!!!

thus $p(\omega \mid y) \propto g(y; \omega)\, f(\omega; y)$ IS NOT the posterior in a Bayesian model with the initially assumed $p(y \mid \omega)$, but it can be the posterior in a completely different Bayesian model

question: what are the true building blocks (statistical model and prior) corresponding to such $p(\omega \mid y)$? it would be useful to know the true assumptions, not only the declared ones

fundamental pitfall of incoherence – $p(\omega \mid y)$ corresponds to some statistical model and prior assumptions to be discovered!

So-called “Empirical Bayes” (EB) is the most popular quasi-Bayesian approach, advocated in non-Bayesian, sampling-theory texts on inference in hierarchical multi-level statistical models

→ Here we show the hidden assumptions behind EB inference in hierarchical models

SLIDE 3

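A SIMPLE EXAMPLE FIRST (Example 1)

Bayesian model:

$$p(y, \mu) = p(y \mid \mu)\, p(\mu) = f_N^n(y \mid \mu e_n,\, c I_n)\, f_N^1(\mu \mid a,\, v)$$

Decomposition:

$$p(y, \mu) = p(y)\, p(\mu \mid y) = f_N^n(y \mid a e_n,\, c I_n + v e_n e_n')\, f_N^1(\mu \mid a_y,\, v_y)$$

where

$$v_y = \left(\frac{n}{c} + \frac{1}{v}\right)^{-1}, \quad a_y = \left(\frac{n}{c} + \frac{1}{v}\right)^{-1} \left(\frac{n}{c}\,\bar{y} + \frac{1}{v}\,a\right), \quad \bar{y} = \frac{1}{n}\, e_n' y, \quad e_n = (1\ 1\ \dots\ 1)'$$

Quasi-Bayesian inference: imagine a non-Bayesian statistician who agrees to use Bayes formula $p(\mu \mid y) \propto p(y \mid \mu)\, p(\mu)$ but declines to specify $a$ (the prior mean) subjectively; instead he/she plugs in $\bar{y}$ (the sample average) and (informally) uses

$$p^*(\mu) = f_N^1(\mu \mid \bar{y},\, v) \quad \text{and} \quad p^*(\mu \mid y) = f_N^1\!\left(\mu \,\Big|\, \bar{y},\, \left(\frac{n}{c} + \frac{1}{v}\right)^{-1}\right)$$

Is there any hidden Bayesian model (sampling + prior) formally justifying such a “posterior”? Consider

$$\tilde{p}(y, \mu) = p(y \mid \mu)\, p^*(\mu) = f_N^n(y - \mu e_n \mid 0,\, c I_n)\, f_N^1(\mu - \bar{y} \mid 0,\, v)$$

it decomposes into $\tilde{p}(\mu \mid y) = p^*(\mu \mid y)$ and

$$\tilde{p}(y) \propto \exp\!\left(-\frac{1}{2c}\, y' M y\right), \quad M = I_n - \frac{1}{n}\, e_n e_n'$$

and, taken the other way round, into

$$\tilde{p}(y \mid \mu) = f_N^n\!\left(y \,\Big|\, \mu e_n,\, c \left(I_n - \frac{c}{n(c + nv)}\, e_n e_n'\right)\right) \quad \text{and} \quad \tilde{p}(\mu) \text{ constant (!!!)}$$

true sampling model assumes dependence (equi-correlation); true prior is flat, improper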
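The hidden-model claim of Example 1 can be checked numerically. The sketch below is only an illustration under stated assumptions: it uses the Python standard library, and the helper names (`mvn_logpdf`, `log_declared_joint`, `hidden_covariance`, `log_ratio_spread`) and the parameter values are hypothetical, not taken from the slides. If the implied prior on $\mu$ is flat, the log of $p(y \mid \mu)\, p^*(\mu)$ minus the log of the equi-correlated normal density should be the same number for every pair $(y, \mu)$.

```python
import math
import random

def mvn_logpdf(x, mean, cov):
    """Log-density of a multivariate normal, via a hand-rolled Cholesky factorization."""
    n = len(x)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = cov[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    z = []  # solves L z = x - mean by forward substitution
    for i in range(n):
        r = x[i] - mean[i] - sum(L[i][k] * z[k] for k in range(i))
        z.append(r / L[i][i])
    log_det = 2.0 * sum(math.log(L[i][i]) for i in range(n))
    return -0.5 * (n * math.log(2.0 * math.pi) + log_det + sum(t * t for t in z))

def log_declared_joint(y, mu, c, v):
    """log[p(y | mu) p*(mu)]: independent N(mu, c) data times the 'prior' N(ybar, v)."""
    n = len(y)
    ybar = sum(y) / n
    log_lik = -0.5 * n * math.log(2.0 * math.pi * c) - sum((yi - mu) ** 2 for yi in y) / (2.0 * c)
    log_prior = -0.5 * math.log(2.0 * math.pi * v) - (mu - ybar) ** 2 / (2.0 * v)
    return log_lik + log_prior

def hidden_covariance(n, c, v):
    """Covariance c (I_n - c / (n (c + n v)) e_n e_n') of the hidden sampling model."""
    k = c / (n * (c + n * v))
    return [[c * ((1.0 if i == j else 0.0) - k) for j in range(n)] for i in range(n)]

def log_ratio_spread(n=4, c=1.5, v=0.7, draws=20, seed=0):
    """Max minus min of (log joint - log hidden sampling density) over random (y, mu)."""
    rng = random.Random(seed)
    cov = hidden_covariance(n, c, v)
    ratios = []
    for _ in range(draws):
        y = [rng.gauss(0.0, 3.0) for _ in range(n)]
        mu = rng.gauss(0.0, 3.0)
        ratios.append(log_declared_joint(y, mu, c, v) - mvn_logpdf(y, [mu] * n, cov))
    return max(ratios) - min(ratios)

print(log_ratio_spread())  # numerically zero if the implied prior on mu is flat
```

A spread at the level of floating-point noise means the ratio does not depend on $\mu$ or $y$, which is what a constant $\tilde{p}(\mu)$ together with the equi-correlated $\tilde{p}(y \mid \mu)$ requires.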

SLIDE 4

MAIN PART: Statistical models with hierarchical structure

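conditional distribution of observations: $p(y \mid \theta) = g(y; \theta)$, $y \in Y$, $\theta \in \Theta$;

distribution of random parameters (latent variables): $f_0(\theta; \alpha)$, $\alpha \in A \subseteq \mathbb{R}^s$;

joint distribution ($\alpha$ fixed) and its decomposition:

$$p(y \mid \theta)\, f_0(\theta; \alpha) = g(y; \theta)\, f_0(\theta; \alpha) = f_1(\theta \mid y; \alpha)\, h(y; \alpha)$$

$h(y; \alpha)$ – marginal distribution of $y$

$$f_1(\theta \mid y; \alpha) = \frac{g(y; \theta)\, f_0(\theta; \alpha)}{h(y; \alpha)} \propto g(y; \theta)\, f_0(\theta; \alpha)$$

– conditional distribution of $\theta$ (Bayes formula)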

SLIDE 5

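SIMPLE EXAMPLE OF A HIERARCHICAL MODEL (Example 2)

$\theta_i$ – unobservable characteristic, randomly distributed over $n$ observed units ($i = 1, \dots, n$), $\theta = (\theta_1 \dots \theta_n)'$, $\theta_i \sim iiN(\alpha, d)$, $d > 0$ known;

$x_i = (x_{i1} \dots x_{im})'$, $x_{ij} \sim iiN(\theta_i, c_0)$ ($j = 1, \dots, m$) – independent measurements of $\theta_i$ ($c_0$ known)

$y_i = \frac{1}{m}\, e_m' x_i = \bar{x}_{i\cdot}$ – sufficient statistic (for fixed $\theta_i$); $y_i \sim iiN(\theta_i, c)$, $c = \frac{c_0}{m}$, $y = (y_1 \dots y_n)'$

$$p(y \mid \theta) = f_N^n(y \mid \theta,\, c I_n), \quad f_0(\theta; \alpha) = f_N^n(\theta \mid \alpha e_n,\, d I_n)$$

Decomposition of the product $p(y \mid \theta)\, f_0(\theta; \alpha)$ into $f_1(\theta \mid y; \alpha)\, h(y; \alpha)$, where

$$h(y; \alpha) = \int_{\mathbb{R}^n} p(y \mid \theta)\, f_0(\theta; \alpha)\, \mathrm{d}\theta = f_N^n(y \mid \alpha e_n,\, (c + d) I_n),$$

$$f_1(\theta \mid y; \alpha) = f_N^n\!\left(\theta \,\Big|\, \frac{d^{-1}}{c^{-1} + d^{-1}}\, \alpha e_n + \frac{c^{-1}}{c^{-1} + d^{-1}}\, y,\ \frac{1}{c^{-1} + d^{-1}}\, I_n\right)$$

(final precision = sample + prior)

$$E(\theta \mid y; \alpha) = w \cdot \alpha e_n + (1 - w) \cdot y, \quad w = \frac{d^{-1}}{c^{-1} + d^{-1}} \in (0, 1)$$

($w$ = prior precision / final precision)

$E(\theta \mid y; \alpha)$ – point in $\Theta = \mathbb{R}^n$ lying on the line segment between $(\alpha\ \alpha\ \dots\ \alpha)'$ and $(y_1\ y_2\ \dots\ y_n)'$

$f_1(\theta \mid y; \alpha)$ follows Bayes Theorem for any fixed $\alpha$, so then we have coherence; but how to get $\alpha$?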
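The shrinkage formula for fixed $\alpha$ can be sketched in a few lines of Python. This is a minimal illustration, assuming the notation of Example 2 ($c$ the sampling variance of each $y_i$, $d$ the variance of each $\theta_i$); the function name `conditional_theta` and the numbers in the usage line are hypothetical.

```python
def conditional_theta(y, alpha, c, d):
    """Mean vector, common variance and weight w of f_1(theta | y; alpha) in Example 2."""
    w = (1.0 / d) / (1.0 / c + 1.0 / d)        # prior precision / final precision
    mean = [w * alpha + (1.0 - w) * yi for yi in y]
    var = 1.0 / (1.0 / c + 1.0 / d)            # final precision = sample + prior
    return mean, var, w

# Each conditional mean lies between alpha and the corresponding y_i.
mean, var, w = conditional_theta(y=[2.0, 4.0, 9.0], alpha=5.0, c=1.0, d=3.0)
print(w, mean, var)
```

Everything here is conditional on a value of $\alpha$ supplied from outside, which is exactly the question the slide ends on.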

SLIDE 6

Empirical Bayes (EB)

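inference on $\theta$ based on the conditional distribution $f_1(\theta \mid y; \alpha)$ obtained using Bayes Theorem, BUT for some point estimate of the unknown $\alpha \in A$, e.g., using so-called type II maximum likelihood:

$$\hat{\alpha} = \hat{\alpha}_{ML} = \arg\max_{\alpha \in A} L(\alpha; y) = \arg\max_{\alpha \in A} h(y; \alpha)$$

So EB uses $\hat{p}(\theta \mid y) = f_1(\theta \mid y, \hat{\alpha}) \propto p(y \mid \theta)\, f_0(\theta; \hat{\alpha})$, i.e. the “posterior” corresponding to the “prior” with hyper-parameter based on $y$!!!

EXAMPLE 2 (continued)

$$L(\alpha; y) = h(y; \alpha) = f_N^n(y \mid \alpha e_n,\, (c + d) I_n) = \left(2\pi \cdot \frac{c + d}{n}\right)^{\frac{1}{2}} f_N^1\!\left(\alpha \,\Big|\, \bar{y},\, \frac{c + d}{n}\right) f_N^n(M y \mid 0,\, (c + d) I_n),$$

$$\hat{\alpha} = \hat{\alpha}_{ML} = \bar{y} = \frac{1}{n}\, e_n' y, \quad M = I_n - \frac{1}{n}\, e_n e_n',$$

$$\hat{p}(\theta \mid y) = f_1(\theta \mid y, \hat{\alpha}) = f_N^n\!\left(\theta \,\Big|\, \hat{\theta}_{EB},\, \frac{1}{c^{-1} + d^{-1}}\, I_n\right), \quad \hat{\theta}_{EB} = \frac{d^{-1}}{c^{-1} + d^{-1}}\, \bar{y}\, e_n + \frac{c^{-1}}{c^{-1} + d^{-1}}\, y$$

uncertainty about $\alpha$ not taken into account

obvious incoherence of inferences on $\theta$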
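The EB recipe for Example 2 can be sketched as follows. This is a rough illustration, assuming the normal model stated above; the function names (`log_marginal`, `type2_ml`, `eb_posterior_mean`) and the data are hypothetical. The type II likelihood is the marginal density $h(y; \alpha)$, under which the $y_i$ are independent $N(\alpha, c + d)$, so its maximizer is the sample mean.

```python
import math

def log_marginal(y, alpha, c, d):
    """log h(y; alpha): the y_i are independent N(alpha, c + d) once theta is integrated out."""
    s = c + d
    return sum(-0.5 * math.log(2.0 * math.pi * s) - (yi - alpha) ** 2 / (2.0 * s) for yi in y)

def type2_ml(y):
    """Closed-form type II ML estimate of alpha in Example 2: the sample mean."""
    return sum(y) / len(y)

def eb_posterior_mean(y, c, d):
    """theta_hat_EB: each y_i shrunk towards ybar with weight w = d^-1 / (c^-1 + d^-1)."""
    w = (1.0 / d) / (1.0 / c + 1.0 / d)
    alpha_hat = type2_ml(y)
    return [w * alpha_hat + (1.0 - w) * yi for yi in y]

y = [2.0, 4.0, 9.0]
alpha_hat = type2_ml(y)
# The marginal likelihood at alpha_hat is at least as large as at nearby values.
print(log_marginal(y, alpha_hat, 1.0, 3.0) >= log_marginal(y, alpha_hat + 0.1, 1.0, 3.0))
print(eb_posterior_mean(y, c=1.0, d=3.0))
```

The "prior" mean fed into the shrinkage step is itself a function of $y$, which is where the incoherence enters.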

SLIDE 7

Bayesian hierarchical model (BHM)

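$$p(y, \omega) = p(y, \theta, \alpha) = p(y \mid \theta)\, p(\theta \mid \alpha)\, p(\alpha), \quad p(\alpha) \text{ – the prior for } \alpha \in A$$

$\omega = (\theta, \alpha)$, conditional independence: $y \perp \alpha \mid \theta$ – leads to $p(y \mid \omega) = p(y \mid \theta)$

$p(y \mid \theta) = g(y; \theta)$, $p(\theta \mid \alpha) = f_0(\theta; \alpha)$ – the same as in EB

final decomposition of the Bayesian model:

$$p(y, \theta, \alpha) = p(y)\, p(\theta, \alpha \mid y) = p(y)\, p(\alpha \mid y)\, p(\theta \mid y, \alpha)$$

$$p(\theta \mid y, \alpha) = \frac{p(y \mid \theta)\, p(\theta \mid \alpha)}{p(y \mid \alpha)} = \frac{g(y; \theta)\, f_0(\theta; \alpha)}{h(y; \alpha)} = f_1(\theta \mid y; \alpha)$$

$$p(\alpha \mid y) = \frac{p(y \mid \alpha)\, p(\alpha)}{p(y)} = \frac{h(y; \alpha)\, p(\alpha)}{p(y)}, \quad p(y) = \int_A p(y \mid \alpha)\, p(\alpha)\, \mathrm{d}\alpha$$

Remarks:

$p(\theta \mid y) = \int_A f_1(\theta \mid y; \alpha)\, p(\alpha \mid y)\, \mathrm{d}\alpha$ – uncertainty about $\alpha$ is formally taken into account

Bayes Theorem is used twice: for latent variables (given parameters) and for parameters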

SLIDE 8

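EXAMPLE 2 (continued) – Bayesian hierarchical model with:

$$p(y \mid \theta) = f_N^n(y \mid \theta,\, c I_n), \quad p(\theta \mid \alpha) = f_N^n(\theta \mid \alpha e_n,\, d I_n), \quad p(\alpha) = f_N^1(\alpha \mid a,\, v)$$

$$p(\theta) = \int_{-\infty}^{+\infty} p(\theta \mid \alpha)\, p(\alpha)\, \mathrm{d}\alpha = f_N^n(\theta \mid a e_n,\, d I_n + v e_n e_n')$$

$$p(\theta \mid y) \propto p(y \mid \theta)\, p(\theta) = f_N^n(y \mid \theta,\, c I_n)\, p(\theta)$$

or, equivalently,

$$p(\theta \mid y) = \int_{-\infty}^{+\infty} p(\theta \mid y, \alpha)\, p(\alpha \mid y)\, \mathrm{d}\alpha = \int_{-\infty}^{+\infty} f_1(\theta \mid y; \alpha)\, p(\alpha \mid y)\, \mathrm{d}\alpha$$

where

$$p(\alpha \mid y) = f_N^1\!\left(\alpha \,\Big|\, \left(\frac{n}{c + d} + \frac{1}{v}\right)^{-1} \left(\frac{n}{c + d}\, \bar{y} + \frac{a}{v}\right),\ \left(\frac{n}{c + d} + \frac{1}{v}\right)^{-1}\right)$$

Finally:

$$p(\theta \mid y) = f_N^n\!\left(\theta \,\Big|\, \frac{c^{-1}}{c^{-1} + d^{-1}}\, y + \frac{d^{-1}}{c^{-1} + d^{-1}} \left(\frac{n}{c + d} + \frac{1}{v}\right)^{-1} \left(\frac{n}{c + d}\, \bar{y} + \frac{a}{v}\right) e_n,\ \frac{1}{c^{-1} + d^{-1}}\, I_n + \left(\frac{n}{c + d} + \frac{1}{v}\right)^{-1} \left(\frac{d^{-1}}{c^{-1} + d^{-1}}\right)^{2} e_n e_n'\right).$$

If $v^{-1} \approx 0$, then $p(\alpha) \approx \text{const}$, $p(\theta) \propto \exp\!\left(-\frac{1}{2d}\, \theta' M \theta\right)$, $M = I_n - \frac{1}{n}\, e_n e_n'$, and

$$p(\theta \mid y) \approx f_N^n\!\left(\theta \,\Big|\, \hat{\theta}_{EB},\ \frac{1}{c^{-1} + d^{-1}}\, I_n + \frac{c^2}{n(c + d)}\, e_n e_n'\right);$$

the term $\frac{c^2}{n(c + d)}\, e_n e_n'$ reflects uncertainty about $\alpha$!

If also $n$ is large enough, then $p(\theta \mid y) \approx \hat{p}(\theta \mid y)$; asymptotically, incoherence does not matter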
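The gap between the coherent posterior and the EB "posterior" can be made concrete with a short sketch. It is an illustration only, assuming the normal formulas of Example 2; the function names `bhm_posterior` and `eb_posterior`, the data and the parameter values are hypothetical. Both posteriors have covariance of the form $s I_n + t\, e_n e_n'$, so the functions return just the pair $(s, t)$ alongside the mean.

```python
def bhm_posterior(y, c, d, a, v):
    """Mean and covariance pieces (s, t) of p(theta | y) = N(mean, s I_n + t e_n e_n')."""
    n = len(y)
    ybar = sum(y) / n
    w = (1.0 / d) / (1.0 / c + 1.0 / d)          # weight on the prior mean of theta
    var_alpha = 1.0 / (n / (c + d) + 1.0 / v)    # posterior variance of alpha
    mean_alpha = var_alpha * (n / (c + d) * ybar + a / v)
    mean = [(1.0 - w) * yi + w * mean_alpha for yi in y]
    s = 1.0 / (1.0 / c + 1.0 / d)
    t = var_alpha * w ** 2                       # extra spread due to unknown alpha
    return mean, s, t

def eb_posterior(y, c, d):
    """EB plug-in version: alpha fixed at ybar, so the rank-one term t is dropped."""
    ybar = sum(y) / len(y)
    w = (1.0 / d) / (1.0 / c + 1.0 / d)
    mean = [(1.0 - w) * yi + w * ybar for yi in y]
    return mean, 1.0 / (1.0 / c + 1.0 / d), 0.0

# With a nearly flat prior (large v) the means agree; only the t term differs,
# and it decays like 1/n.
for n in (5, 50, 5000):
    y = [float(i % 7) for i in range(n)]
    _, s, t = bhm_posterior(y, c=2.0, d=3.0, a=0.0, v=1e8)
    print(n, s, t)
```

For small $n$ the omitted term $t$ is of the same order as $s$, so EB intervals for the $\theta_i$ would be too narrow under these assumptions.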

SLIDE 9

Small-sample interpretation of Empirical Bayes

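For a given EB form of $\hat{p}(\theta \mid y)$, we seek $\tilde{p}(y \mid \theta)$ and $\tilde{p}(\theta)$ that lead to the Bayesian model $\tilde{p}(y, \theta) = \tilde{p}(y \mid \theta)\, \tilde{p}(\theta)$ of the form

$$\tilde{p}(y, \theta) = k(y)\, p(y \mid \theta)\, p(\theta \mid \alpha = \hat{\alpha}) = k(y)\, g(y; \theta)\, f_0(\theta; \hat{\alpha}),$$

resulting in $\hat{p}(\theta \mid y)$ as the true posterior, i.e. $\tilde{p}(\theta \mid y) = \hat{p}(\theta \mid y) \propto g(y; \theta)\, f_0(\theta; \hat{\alpha})$.

From the form of $\tilde{p}(y, \theta)$ we obtain the (implicit) prior

$$\tilde{p}(\theta) = \int_Y k(y)\, g(y; \theta)\, f_0(\theta; \hat{\alpha})\, \mathrm{d}y$$

and then the (implicit) conditional distribution of observations

$$\tilde{p}(y \mid \theta) = \frac{\tilde{p}(y, \theta)}{\tilde{p}(\theta)} = \frac{k(y)\, g(y; \theta)\, f_0(\theta; \hat{\alpha})}{\tilde{p}(\theta)}.$$

If both $f_0$ and $k$ are not constant in $y$, then $\tilde{p}(y \mid \theta) \neq p(y \mid \theta) = g(y; \theta)$ and the true conditional distribution of observations is different from the initially assumed (declared) one.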
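One step is left implicit above: why the factor $k(y)$ does not disturb the posterior. A short derivation, writing $\hat{\alpha}(y)$ to make the dependence of the estimate on the data explicit (this notation is an addition for illustration, not from the slides):

$$\tilde{p}(y) = \int_{\Theta} \tilde{p}(y, \theta)\, \mathrm{d}\theta = k(y) \int_{\Theta} g(y; \theta)\, f_0(\theta; \hat{\alpha}(y))\, \mathrm{d}\theta = k(y)\, h(y; \hat{\alpha}(y)),$$

$$\tilde{p}(\theta \mid y) = \frac{\tilde{p}(y, \theta)}{\tilde{p}(y)} = \frac{k(y)\, g(y; \theta)\, f_0(\theta; \hat{\alpha}(y))}{k(y)\, h(y; \hat{\alpha}(y))} = f_1(\theta \mid y; \hat{\alpha}(y)) = \hat{p}(\theta \mid y).$$

So any positive $k(y)$ reproduces the EB "posterior" exactly; $k$ only affects the implied marginal $\tilde{p}(y)$, the implied prior and the implied sampling distribution.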

SLIDE 10

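EXAMPLE 2 (continued)

$$\tilde{p}(y, \theta) = k(y)\, g(y; \theta)\, f_0(\theta; \hat{\alpha}) = k\, f_N^n(y \mid \theta,\, c I_n)\, f_N^n(\theta \mid \bar{y} e_n,\, d I_n) = f_N^n\!\left(y \,\Big|\, \theta,\, \left(\frac{1}{c}\, I_n + \frac{1}{dn}\, e_n e_n'\right)^{-1}\right) k\, (2\pi)^{-\frac{n}{2}} \exp\!\left(-\frac{1}{2d}\, \theta' M \theta\right)$$

From $\tilde{p}(y, \theta)$ we easily derive:

$\tilde{p}(\theta) = k\, (2\pi)^{-\frac{n}{2}} \exp\!\left(-\frac{1}{2d}\, \theta' M \theta\right)$ – improper (only σ-finite), but informative (favors equal $\theta_i$)

(for $v^{-1} \approx 0$ we get $p(\theta) \approx \tilde{p}(\theta)$, so the declared prior coincides with the true one)

$\tilde{p}(y \mid \theta) = f_N^n\!\left(y \,\Big|\, \theta,\, \left(\frac{1}{c}\, I_n + \frac{1}{dn}\, e_n e_n'\right)^{-1}\right)$ – conditional distribution with equally correlated observations (instead of independent ones!!!)

$$\tilde{V}(y \mid \theta) = c \left(I_n - \frac{c}{n(c + d)}\, e_n e_n'\right), \quad \widetilde{Corr}(y_i, y_j \mid \theta) = -\frac{c}{(n - 1)c + nd} \quad (i \neq j),$$

true $\tilde{p}(y \mid \theta)$ is qualitatively different from declared $p(y \mid \theta)$; problem disappears when $n \to \infty$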
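The implied sampling model of Example 2 can be checked numerically in the same spirit as Example 1. The sketch below is an illustration under stated assumptions: standard library only, $k = 1$, and hypothetical helper names and parameter values. It tests that $g(y; \theta)\, f_0(\theta; \bar{y})$ equals the equi-correlated normal density times $\exp(-\theta' M \theta / (2d))$ up to a constant, and it computes the implied correlation, which is negative because the off-diagonal entries of $\tilde{V}(y \mid \theta)$ are $-c^2 / (n(c + d))$.

```python
import math
import random

def mvn_logpdf(x, mean, cov):
    """Log-density of a multivariate normal, via a hand-rolled Cholesky factorization."""
    n = len(x)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = cov[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    z = []  # solves L z = x - mean by forward substitution
    for i in range(n):
        r = x[i] - mean[i] - sum(L[i][k] * z[k] for k in range(i))
        z.append(r / L[i][i])
    log_det = 2.0 * sum(math.log(L[i][i]) for i in range(n))
    return -0.5 * (n * math.log(2.0 * math.pi) + log_det + sum(t * t for t in z))

def implied_covariance(n, c, d):
    """V~(y | theta) = c (I_n - c / (n (c + d)) e_n e_n')."""
    k = c / (n * (c + d))
    return [[c * ((1.0 if i == j else 0.0) - k) for j in range(n)] for i in range(n)]

def implied_correlation(n, c, d):
    """Common correlation between y_i and y_j (i != j) under V~(y | theta)."""
    k = c / (n * (c + d))
    return (-c * k) / (c * (1.0 - k))

def log_eb_joint(y, theta, c, d):
    """log[g(y; theta) f_0(theta; ybar)] with k = 1: the 'prior' is centred at ybar."""
    ybar = sum(y) / len(y)
    ll = sum(-0.5 * math.log(2.0 * math.pi * c) - (yi - ti) ** 2 / (2.0 * c) for yi, ti in zip(y, theta))
    lp = sum(-0.5 * math.log(2.0 * math.pi * d) - (ti - ybar) ** 2 / (2.0 * d) for ti in theta)
    return ll + lp

def log_prior_kernel(theta, d):
    """-theta' M theta / (2 d); theta' M theta is the sum of squared deviations from the mean."""
    tbar = sum(theta) / len(theta)
    return -sum((ti - tbar) ** 2 for ti in theta) / (2.0 * d)

def log_ratio_spread(n=4, c=1.5, d=0.7, draws=20, seed=1):
    """Spread of log joint - log implied sampling density - log prior kernel over random draws."""
    rng = random.Random(seed)
    cov = implied_covariance(n, c, d)
    vals = []
    for _ in range(draws):
        y = [rng.gauss(0.0, 3.0) for _ in range(n)]
        theta = [rng.gauss(0.0, 3.0) for _ in range(n)]
        vals.append(log_eb_joint(y, theta, c, d) - mvn_logpdf(y, theta, cov) - log_prior_kernel(theta, d))
    return max(vals) - min(vals)

print(log_ratio_spread())  # numerically zero if the factorization holds
for n in (2, 10, 1000):
    print(n, implied_correlation(n, c=1.5, d=0.7))  # negative, shrinking towards 0 as n grows
```

The correlation vanishing as $n$ grows is the numerical counterpart of the remark that the problem disappears asymptotically.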

SLIDE 11

Concluding remarks

From the purely Bayesian perspective, using Bayes formula with a “prior” that depends on the actual data is completely incoherent. Is this, however, of any interest to a non-Bayesian statistician? Perhaps such an incoherent quasi-Bayesian approach generates inference tools that are better in terms of sampling-theory properties...

Recall that, under certain regularity conditions, Bayesian decision functions (estimators) are admissible (they cannot be improved, in terms of risk, uniformly over the parameter space) and form complete classes of such decision functions.

Here it has been shown that incoherent, quasi-Bayesian approaches can be interpreted as Bayesian for other sampling models, not for the declared (assumed) ones. When a quasi-Bayesian procedure is not Bayesian for the declared sampling model, it may produce inadmissible decision functions (within this sampling model).

Being coherent (i.e., being Bayesian and obeying the rules of probability) protects against such risks – and it does so for (almost) free...

THANK YOU FOR YOUR ATTENTION!