CS440/ECE448 Lecture 15: Bayesian Inference and Bayesian Learning

Slides by Svetlana Lazebnik, 10/2016 Modified by Mark Hasegawa-Johnson, 3/2019


Topics: Bayes Rule, Bayesian Inference, Misdiagnosis


a joint probability: $P(A, B) = P(B \mid A)\,P(A) = P(A \mid B)\,P(B)$

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

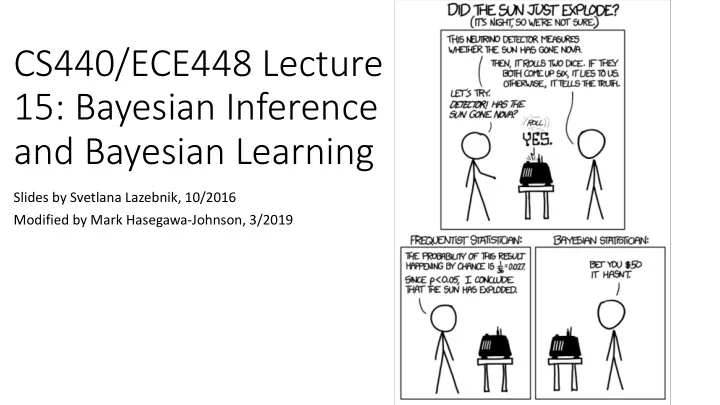

(example: the sun exploded)

amount of light falling on a solar cell)

probabilities that are much, much easier to measure (P(B|A)).

Thomas Bayes (1702-1761)

Eliot & Karson are getting married tomorrow, at an outdoor ceremony in the desert.

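Let R = "it rains" and F = "the weatherman forecasts rain".

$P(R) = 0.014$ and $P(\neg R) = 1 - 0.014 = 0.986$

When it actually rains, the weatherman (correctly) forecasts rain 90% of the time: $P(F \mid R) = 0.9$

When it does not rain, he (incorrectly) forecasts rain 10% of the time: $P(F \mid \neg R) = 0.1$

$$P(R \mid F) = \frac{P(F \mid R)\,P(R)}{P(F)} = \frac{P(F, R)}{P(F, R) + P(F, \neg R)} = \frac{P(F \mid R)\,P(R)}{P(F \mid R)\,P(R) + P(F \mid \neg R)\,P(\neg R)} = \frac{(0.9)(0.014)}{(0.9)(0.014) + (0.1)(0.986)} = 0.113$$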
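The wedding calculation can be checked numerically. The following is a minimal sketch, not part of the original slides: the function name `posterior` and the variable names are illustrative, and the probability of no rain is assumed to be the complement 1 − P(rain).

```python
def posterior(prior, likelihood, likelihood_given_not):
    """Bayes rule, with the evidence expanded by the law of total probability.

    prior:                P(hypothesis)
    likelihood:           P(observation | hypothesis)
    likelihood_given_not: P(observation | not hypothesis)
    """
    evidence = likelihood * prior + likelihood_given_not * (1.0 - prior)
    return likelihood * prior / evidence


p_rain = 0.014                  # prior probability of rain
p_forecast_given_rain = 0.9     # forecast says rain when it actually rains
p_forecast_given_dry = 0.1      # forecast says rain when it does not rain

p_rain_given_forecast = posterior(
    p_rain, p_forecast_given_rain, p_forecast_given_dry
)
print(f"P(rain | forecast of rain) = {p_rain_given_forecast:.3f}")
```

Even with a forecast that is right 90% of the time, the posterior stays small because the prior probability of rain is so low.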

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

(the probability that light hits our solar cell, if the sun still exists and it’s daytime).

cell, if we don’t really know whether the sun still exists or not?)

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B \mid A)\,P(A) + P(B \mid \neg A)\,P(\neg A)}$$


The short form, with P(B) in the denominator, is the version you memorize. The expanded form, with P(B) written out as a sum over A and ¬A, is the version you actually use.

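What is the probability that she actually has breast cancer?

$$P(\text{cancer} \mid \text{positive}) = \frac{P(\text{positive} \mid \text{cancer})\,P(\text{cancer})}{P(\text{positive})} = \frac{P(\text{positive} \mid \text{cancer})\,P(\text{cancer})}{P(\text{positive} \mid \text{cancer})\,P(\text{cancer}) + P(\text{positive} \mid \neg\text{cancer})\,P(\neg\text{cancer})}$$

$$= \frac{0.8 \times 0.01}{0.8 \times 0.01 + 0.096 \times 0.99} = \frac{0.008}{0.008 + 0.095} = 0.0776$$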
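One way to make this result less surprising is to count people instead of multiplying probabilities. The sketch below is illustrative only: the population size of 10,000 is an arbitrary assumption, and the three probabilities (0.01, 0.8, 0.096) are the ones that appear in the calculation above.

```python
population = 10_000             # hypothetical number of women screened

p_cancer = 0.01                 # P(cancer)
p_pos_given_cancer = 0.8        # P(positive | cancer)
p_pos_given_healthy = 0.096     # P(positive | no cancer)

# Split the population into those with and without cancer,
# then count how many in each group test positive.
with_cancer = population * p_cancer
healthy = population - with_cancer
true_positives = with_cancer * p_pos_given_cancer
false_positives = healthy * p_pos_given_healthy

# Among everyone who tests positive, what fraction actually has cancer?
p_cancer_given_pos = true_positives / (true_positives + false_positives)
print(f"true positives:  {true_positives:.1f}")
print(f"false positives: {false_positives:.1f}")
print(f"P(cancer | positive) = {p_cancer_given_pos:.4f}")
```

The false positives from the large healthy group outnumber the true positives from the small group with cancer, which is why the posterior is below 8%.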

If your doctor tells you that you have a health problem or suggests a treatment for an illness or injury, you might want a second opinion. This is especially true when you're considering surgery or major procedures. Asking another doctor to review your case can be useful for many reasons:

Considering Treatment for Illness, Injury? Get a Second Opinion https://www.webmd.com/health-insurance/second-opinions#1

| L(y,a) | y=heads | y=tails |
|---|---|---|
| a=heads | 0 | 1 |
| a=tails | 1 | 0 |

| | c=0 | c=1 |
|---|---|---|
| P(L(Y,a)=c) | 0.6 | 0.4 |

Suppose the agent guesses that Y=a.

$$P(L(Y, a) = 0 \mid E = e) = P(Y = a \mid E = e)$$

$$P(L(Y, a) = 1 \mid E = e) = P(Y \ne a \mid E = e) = \sum_{y \ne a} P(Y = y \mid E = e)$$

$$\mathbb{E}[L(Y, a) \mid E = e] = \sum_{y} L(y, a)\,P(Y = y \mid E = e) = \sum_{y \ne a} P(Y = y \mid E = e) = 1 - P(Y = a \mid E = e)$$

$$a^* = \arg\max_a P(Y = a \mid E = e) = \arg\max_a \frac{P(E = e \mid Y = a)\,P(Y = a)}{P(E = e)} = \arg\max_a P(E = e \mid Y = a)\,P(Y = a)$$
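The link between zero-one loss and the most probable value can be checked on the coin example. This is a minimal sketch under stated assumptions: the posterior of 0.6 for heads and 0.4 for tails is taken from the loss-probability table for a guess of heads, and the function names are illustrative.

```python
def zero_one_loss(y, a):
    """Loss is 0 when the guess a matches the true value y, else 1."""
    return 0 if y == a else 1


def expected_loss(posterior, a):
    """Expected zero-one loss of guessing a, averaged over the posterior on Y."""
    return sum(zero_one_loss(y, a) * p for y, p in posterior.items())


# Assumed posterior P(Y = y | E = e) for the coin example.
posterior = {"heads": 0.6, "tails": 0.4}

losses = {a: expected_loss(posterior, a) for a in posterior}
best_by_loss = min(losses, key=losses.get)
best_by_posterior = max(posterior, key=posterior.get)

print(losses)
print(best_by_loss, best_by_posterior)
```

Minimizing the expected zero-one loss and maximizing the posterior select the same guess, because the expected loss of guessing a is one minus the posterior probability of a.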

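Maximum Likelihood (ML) decision: $a^*_{ML} = \arg\max_a P(E = e \mid Y = a)$

Maximum A Posteriori (MAP) decision: $a^*_{MAP} = \arg\max_a P(Y = a \mid E = e) = \arg\max_a P(E = e \mid Y = a)\,P(Y = a)$

Here $P(Y = a \mid E = e)$ is the posterior, $P(E = e \mid Y = a)$ is the likelihood, and $P(Y = a)$ is the prior.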
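The two decision rules can disagree when the prior is lopsided. The sketch below reuses the numbers from the wedding example (prior probability of rain 0.014, forecast accuracy 0.9 and 0.1); the dictionary layout and variable names are illustrative assumptions, and the prior for no rain is taken as the complement.

```python
# Prior P(Y = y) over the unobserved variable (will it rain?).
prior = {"rain": 0.014, "no rain": 1 - 0.014}

# Likelihood P(E = e | Y = y) of the observed evidence e = "forecast says rain".
likelihood = {"rain": 0.9, "no rain": 0.1}

# ML decision: pick the value of Y that makes the evidence most likely.
ml_decision = max(likelihood, key=likelihood.get)

# MAP decision: weight the likelihood by the prior before taking the max.
map_decision = max(prior, key=lambda y: likelihood[y] * prior[y])

print("ML decision: ", ml_decision)
print("MAP decision:", map_decision)
```

The ML rule ignores how rare rain is and predicts rain, while the MAP rule accounts for the prior and predicts no rain.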

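MAP decision for text classification: assign the document to the class with the highest posterior P(class | document).

To do this, we need to learn the likelihoods P(document | class) for all classes and the priors P(class).

Under the naïve Bayes assumption, the problem reduces to estimating the likelihoods of individual words P(wi | class).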

$$P(\text{document} \mid \text{class}) = P(w_1, \ldots, w_n \mid \text{class}) = \prod_{i=1}^{n} P(w_i \mid \text{class})$$
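In practice the product over words is usually computed as a sum of logarithms, because multiplying many small probabilities underflows quickly. The following is a minimal sketch; the word probabilities and the example document are hypothetical values chosen only for illustration.

```python
import math


def log_likelihood(words, word_probs):
    """log P(document | class) as a sum of per-word log probabilities."""
    return sum(math.log(word_probs[w]) for w in words)


# Hypothetical P(word | spam) for a three-word vocabulary.
word_probs_spam = {"free": 0.05, "money": 0.03, "meeting": 0.001}

document = ["free", "money", "free"]

log_p = log_likelihood(document, word_probs_spam)
direct_product = 0.05 * 0.03 * 0.05   # same quantity as a plain product

print(f"log P(document | spam) = {log_p:.4f}")
print(f"P(document | spam)     = {math.exp(log_p):.3e}")
```

For a three-word document both forms agree; for documents with thousands of words only the log form stays representable in floating point.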

[Figure: example parameters. Prior P(class): spam: 0.33, ¬spam: 0.67. Likelihoods: P(word | spam) and P(word | ¬spam).]

US Presidential Speeches Tag Cloud http://chir.ag/projects/preztags/


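The maximum likelihood (ML) estimate is the one that maximizes the likelihood of the training data:

$$\prod_{d=1}^{D} \prod_{i=1}^{n_d} P(w_{d,i} \mid \text{class}_{d,i})$$

d: index of training document, i: index of a word

The resulting estimate is:

$$P(\text{word} \mid \text{class}) = \frac{\text{\# of occurrences of this word in docs from this class}}{\text{total \# of words in docs from this class}}$$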
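The counting estimate and the likelihood it maximizes can both be computed on a toy corpus. This is a sketch under stated assumptions: the three training documents and the class names "spam" and "ham" are hypothetical, and the uniform alternative is included only to show that the count-based estimate scores higher.

```python
import math
from collections import Counter

# Hypothetical toy training set: (words, class label) pairs.
training_docs = [
    (["free", "money", "free"], "spam"),
    (["meeting", "today"], "ham"),
    (["free", "meeting"], "ham"),
]


def ml_word_likelihoods(docs):
    """P(word | class) = count of word in class / total words in class."""
    counts = {}
    for words, label in docs:
        counts.setdefault(label, Counter()).update(words)
    return {
        label: {w: c / sum(counter.values()) for w, c in counter.items()}
        for label, counter in counts.items()
    }


def training_log_likelihood(docs, likelihoods):
    """Sum over documents and word positions of log P(word | class)."""
    return sum(
        math.log(likelihoods[label][w]) for words, label in docs for w in words
    )


ml = ml_word_likelihoods(training_docs)

# Alternative for comparison: uniform over the words seen in each class.
uniform = {
    label: {w: 1 / len(probs) for w in probs} for label, probs in ml.items()
}

ll_ml = training_log_likelihood(training_docs, ml)
ll_uniform = training_log_likelihood(training_docs, uniform)
print(ml)
print(f"log-likelihood (ML estimate): {ll_ml:.3f}")
print(f"log-likelihood (uniform):     {ll_uniform:.3f}")
```

On this corpus the count-based estimate gives the higher training log-likelihood, as the maximum likelihood property predicts.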

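Training data: documents $i = 1, \ldots, n$, where document $i$ has class label $Y_i$ and words $[W_{1i}, \ldots, W_{mi}]$.

If we assume that the words are conditionally independent given the class (the naïve Bayes a.k.a. bag-of-words assumption), then we get the joint probability of all training documents $X$ and labels $Y$:

$$P(X, Y) = \prod_{i=1}^{n} P(Y_i = y_i) \prod_{j=1}^{m} P(W_{ji} = w_{ji} \mid Y_i = y_i) = \prod_{i=1}^{n} \pi_{y_i} \prod_{j=1}^{m} \lambda_{y_i w_{ji}}$$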

$$\lambda_{ck} = \frac{\text{\# occurrences of word } k \text{ in documents of type } c}{\text{total number of words in all documents of type } c}$$

$$\pi_{c} = \frac{\text{\# documents of type } c}{\text{total number of documents}}$$

$$\frac{0}{0 + 100{,}000{,}000} = 0$$

$$\frac{1}{1 + 100{,}000{,}001} \approx 10^{-8}$$

What if a word never occurs in the training set? (Hint: what happens if you give it probability 0, and then it actually occurs in a test document?)

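Maximum likelihood estimate:

$$P(\text{word} \mid \text{class}) = \frac{\text{\# of occurrences of this word in docs from this class}}{\text{total \# of words in docs from this class}}$$

Laplace smoothing: pretend you have seen every vocabulary word one more time than you actually did:

$$P(\text{word} \mid \text{class}) = \frac{\text{\# of occurrences of this word in docs from this class} + 1}{\text{total \# of words in docs from this class} + V}$$

(V: total number of unique words)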
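The add-one estimate is a small change to the counting code. The sketch below is illustrative: the word counts and the four-word vocabulary are hypothetical, and `smoothed_likelihood` is a name introduced here rather than anything from the slides.

```python
from collections import Counter


def smoothed_likelihood(word, class_counts, vocabulary):
    """Add-one estimate: (count + 1) / (total words in class + V)."""
    total = sum(class_counts.values())
    # Counter returns 0 for words that never occurred in this class.
    return (class_counts[word] + 1) / (total + len(vocabulary))


# Hypothetical word counts for the class "spam" and a four-word vocabulary.
spam_counts = Counter(["free", "money", "free"])
vocabulary = {"free", "money", "meeting", "today"}

p_free = smoothed_likelihood("free", spam_counts, vocabulary)        # (2+1)/(3+4)
p_meeting = smoothed_likelihood("meeting", spam_counts, vocabulary)  # (0+1)/(3+4)
total_mass = sum(
    smoothed_likelihood(w, spam_counts, vocabulary) for w in vocabulary
)

print(p_free, p_meeting, total_mass)
```

A word that never occurred in the class now gets a small nonzero probability, and the smoothed estimates still sum to one over the vocabulary.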

$$P(\text{class} \mid \text{document}) \propto P(\text{class}) \prod_{i=1}^{n} P(w_i \mid \text{class})$$
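The proportionality above is all a classifier needs, since the normalizing constant is the same for every class. The following sketch scores each class in log space and picks the largest. The priors 0.33 and 0.67 are the spam example values from the slides; the per-word likelihoods and the test documents are hypothetical.

```python
import math


def log_posterior_scores(words, priors, likelihoods):
    """Unnormalized log posterior: log P(class) + sum of log P(word | class)."""
    return {
        c: math.log(priors[c]) + sum(math.log(likelihoods[c][w]) for w in words)
        for c in priors
    }


priors = {"spam": 0.33, "not spam": 0.67}

# Hypothetical per-word likelihoods P(word | class).
likelihoods = {
    "spam": {"free": 0.05, "money": 0.03, "meeting": 0.001},
    "not spam": {"free": 0.005, "money": 0.004, "meeting": 0.02},
}

scores_a = log_posterior_scores(["free", "money"], priors, likelihoods)
scores_b = log_posterior_scores(["meeting"], priors, likelihoods)

predicted_a = max(scores_a, key=scores_a.get)
predicted_b = max(scores_b, key=scores_b.get)
print(predicted_a, predicted_b)
```

The scores are not probabilities until they are normalized, but the class with the largest score is the MAP decision either way.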

prior: P(class1) … P(classK)

Likelihood of class1: P(w1 | class1) P(w2 | class1) … P(wn | class1)

…

Likelihood of classK: P(w1 | classK) P(w2 | classK) … P(wn | classK)

[Figure: training and prediction pipeline. Training: training samples → features, combined with training labels → training → learned model. Prediction: test sample → features → learned model → prediction.]