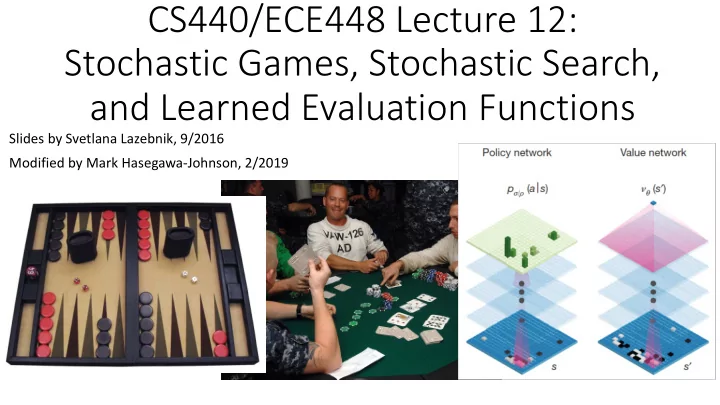

CS440/ECE448 Lecture 12: Stochastic Games, Stochastic Search, and Learned Evaluation Functions

Slides by Svetlana Lazebnik, 9/2016 Modified by Mark Hasegawa-Johnson, 2/2019

Reminder: Exam 1 (Midterm) Thu, Feb 28 in class Review in

[Figure: game tree with levels labeled by each side's moves, Reward, and E[Reward]]

$$E[\text{Reward}] = \sum_{\text{outcomes}} \text{Probability}(\text{outcome}) \times \text{Reward}(\text{outcome})$$

Value(node) =
§ Utility(node) if node is terminal
§ max_action Value(Succ(node, action)) if type = MAX
§ min_action Value(Succ(node, action)) if type = MIN
§ sum_action P(Succ(node, action)) * Value(Succ(node, action)) if type = CHANCE
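The recursive definition above can be sketched in Python. This is a minimal illustration, assuming a hypothetical dictionary-based tree representation (the keys `type`, `utility`, and `children` are not from the lecture); the payoffs in the small example tree are illustrative only.

```python
def value(node):
    """Expectiminimax value of a node in a game tree with chance nodes."""
    kind = node["type"]
    if kind == "TERMINAL":
        return node["utility"]
    if kind == "MAX":
        return max(value(child) for child in node["children"])
    if kind == "MIN":
        return min(value(child) for child in node["children"])
    if kind == "CHANCE":
        # Children of a chance node are (probability, subtree) pairs.
        return sum(p * value(child) for p, child in node["children"])
    raise ValueError(f"unknown node type: {kind}")


def leaf(utility):
    return {"type": "TERMINAL", "utility": utility}


# MAX chooses between two fair coin flips with different payoffs.
tree = {
    "type": "MAX",
    "children": [
        {"type": "CHANCE", "children": [(0.5, leaf(1)), (0.5, leaf(-5))]},
        {"type": "CHANCE", "children": [(0.5, leaf(-1)), (0.5, leaf(5))]},
    ],
}
```

Here the first chance node is worth 0.5 × 1 + 0.5 × (−5) = −2 and the second 0.5 × (−1) + 0.5 × 5 = 2, so `value(tree)` picks the second branch.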

coin to match the one she has.

$$\tfrac{1}{2} \times 1 + \tfrac{1}{2} \times (-5) = -2$$

$$\tfrac{1}{2} \times (-1) + \tfrac{1}{2} \times 5 = 2$$

[Figure: game tree showing each player's moves, with missing info]

States are grouped into information sets for each player.

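$$E[\text{Reward}] = \sum_{\text{outcomes}} \text{Probability}(\text{outcome}) \times \text{Reward}(\text{outcome})$$

$$E[\text{Reward}] \approx \frac{1}{n} \sum_{i=1}^{n} \text{Reward}(i\text{th random game})$$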

computation you can afford.
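The averaging approximation, E[Reward] ≈ (1/n) Σ Reward(ith random game), can be sketched as follows. This is a minimal illustration: the function names and the two-outcome `coin_game` (reward 1 or −5 with equal probability) are assumptions chosen for the example, not part of the lecture.

```python
import random


def exact_expected_reward(outcomes):
    """Sum of Probability(outcome) * Reward(outcome) over (p, reward) pairs."""
    return sum(p * r for p, r in outcomes)


def monte_carlo_expected_reward(play_random_game, n, seed=0):
    """Average the reward of n independent random games."""
    rng = random.Random(seed)
    return sum(play_random_game(rng) for _ in range(n)) / n


def coin_game(rng):
    """Hypothetical one-step game: a fair coin pays 1 or -5."""
    return 1 if rng.random() < 0.5 else -5
```

The exact expectation for `coin_game` is −2; the sampled average approaches it as `n` grows, which is why more computation gives a better estimate.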

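What about games with deep trees, a large branching factor, and no good heuristics, like Go?

Instead of depth-limited search with an evaluation function, use randomized simulations

Select a leaf node using a tree policy (trading off exploration and exploitation)

Run a simulation from that node using a default policy (e.g., random moves) until a terminal state is reached

Back-propagate the outcome to update the value estimates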

Current state = root of tree

Node weights: wins/total playouts for current player

Leaf nodes = nodes where no simulation ("playout") has been performed yet
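The tree policy, default policy, and value update described above can be sketched as a small Monte Carlo tree search. This is a minimal sketch under stated assumptions: the game (a single-pile Nim where players remove 1 to 3 stones and whoever takes the last stone wins), the UCB1 tree policy with constant `c=1.4`, and all names are illustrative choices, not the lecture's implementation.

```python
import math
import random


def legal_moves(stones):
    return [m for m in (1, 2, 3) if m <= stones]


class Node:
    def __init__(self, stones, player, parent=None, move=None):
        self.stones = stones      # stones left in the pile
        self.player = player      # player to move at this node (0 or 1)
        self.parent = parent
        self.move = move          # move that led to this node
        self.children = []
        self.untried = legal_moves(stones)
        self.wins = 0.0           # wins for the player who moved INTO this node
        self.visits = 0           # total playouts through this node


def ucb1_child(node, c=1.4):
    """Tree policy: win rate plus an exploration bonus."""
    return max(node.children, key=lambda ch: ch.wins / ch.visits
               + c * math.sqrt(math.log(node.visits) / ch.visits))


def rollout(stones, player, rng):
    """Default policy: random moves until the pile is empty; returns the winner."""
    while True:
        stones -= rng.choice(legal_moves(stones))
        if stones == 0:
            return player
        player = 1 - player


def mcts(stones, player, iterations=500, seed=0):
    rng = random.Random(seed)
    root = Node(stones, player)
    for _ in range(iterations):
        node = root
        # Selection: descend while the node is fully expanded and non-terminal.
        while not node.untried and node.children:
            node = ucb1_child(node)
        # Expansion: add one child for a move not yet simulated.
        if node.untried:
            move = node.untried.pop()
            child = Node(node.stones - move, 1 - node.player, node, move)
            node.children.append(child)
            node = child
        # Simulation: a terminal node was won by the player who just moved.
        if node.stones == 0:
            winner = 1 - node.player
        else:
            winner = rollout(node.stones, node.player, rng)
        # Backpropagation: update wins/visits along the path to the root.
        while node is not None:
            node.visits += 1
            if winner != node.player:
                node.wins += 1
            node = node.parent
    best = max(root.children, key=lambda ch: ch.visits)
    return best.move, root
```

For example, `mcts(3, 0)` starts with three stones; taking all three wins immediately, so that child wins every playout and ends up the most visited.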

Training phase:

billions of possible starting states.

billion random games

Generalization:

Testing phase:

state at your horizon using Value(state) ≈ a1*x1+a2*x2+…

data

achieved during actual game play. Some starting states will be missed, so a generalized evaluation function is necessary.

actual game play
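The linear form Value(state) ≈ a1*x1+a2*x2+… can be fit to values observed in training games. The following is a minimal sketch, assuming hypothetical two-feature states and made-up target values; plain gradient descent on squared error is one simple way to choose the coefficients and is an assumption here, not necessarily the method used in the lecture.

```python
def evaluate(weights, features):
    """Linear evaluation function: a1*x1 + a2*x2 + ..."""
    return sum(a * x for a, x in zip(weights, features))


def fit_linear_evaluation(examples, lr=0.1, epochs=1000):
    """Fit weights to (features, observed_value) pairs by gradient descent."""
    n_features = len(examples[0][0])
    weights = [0.0] * n_features
    for _ in range(epochs):
        grad = [0.0] * n_features
        for features, target in examples:
            err = evaluate(weights, features) - target
            for j, x in enumerate(features):
                # Gradient of the mean squared error with respect to weight j.
                grad[j] += 2 * err * x / len(examples)
        weights = [a - lr * g for a, g in zip(weights, grad)]
    return weights


# Hypothetical training data: feature vectors with averaged playout values.
training = [((1, 0), 2.0), ((0, 1), -1.0), ((1, 1), 1.0), ((2, 1), 3.0)]
weights = fit_linear_evaluation(training)
```

Once fitted, `evaluate(weights, features)` can score states that never appeared in training, which is the generalization step.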

should not waste their time

play go.”

Anton Ninno (antonninno@yahoo.com) and Roy Laird, Ph.D. (roylaird@gmail.com); special thanks to Kiseido Publications

neural networks

image

approximation machinery

distribution over possible moves (policy) or expected value of position

January 2016

to maximize expected final outcome

source Pachi Go program 85% of the time


position s and following the learned policy for both players

predicted outcome


counts N(s,a), action values Q(s,a)

exploration bonus (proportional to P but inversely proportional to N)

combination of value network estimate and outcome of simulated game using rollout network

average of values of all simulations passing through that edge
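The per-edge bookkeeping described above (visit counts N(s,a), action values Q(s,a), and an exploration bonus that grows with the prior P and shrinks with N) can be sketched as follows. This is a minimal sketch under stated assumptions: the exact form of the bonus is not given in the text, so `c * P / (1 + N)` is an illustrative choice, and the mixing weight `lam`, the dictionary layout, and all function names are hypothetical.

```python
def select_action(edges, c=1.0):
    """Pick the action maximizing Q plus an exploration bonus.

    The bonus is proportional to the prior P and inversely
    proportional to (1 + N); this exact form is an assumption.
    """
    def score(action):
        e = edges[action]
        return e["Q"] + c * e["P"] / (1 + e["N"])
    return max(edges, key=score)


def leaf_value(value_net_estimate, rollout_outcome, lam=0.5):
    """Combine the value network estimate with a simulated game outcome."""
    return (1 - lam) * value_net_estimate + lam * rollout_outcome


def backup(edge, v):
    """Update an edge so Q is the average value of all simulations through it."""
    edge["N"] += 1
    edge["W"] += v            # running total of leaf values
    edge["Q"] = edge["W"] / edge["N"]
```

With this rule an unvisited edge with a high prior is tried first, and a poor result lowers its Q so that other edges are explored on later simulations.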


International Master Level (arXiv, September 2015)

learning to learn a good evaluation function

beta search

in 2016

in a lifetime of playing

Texas Hold’em poker (2017)

http://xkcd.com/1002/ See also: http://xkcd.com/1263/