12/03/12 1

Machine Learning: Algorithms and Applications

Floriano Zini

Free University of Bozen-Bolzano

Faculty of Computer Science

Academic Year 2011-2012

Lecture 3: 12th March 2012

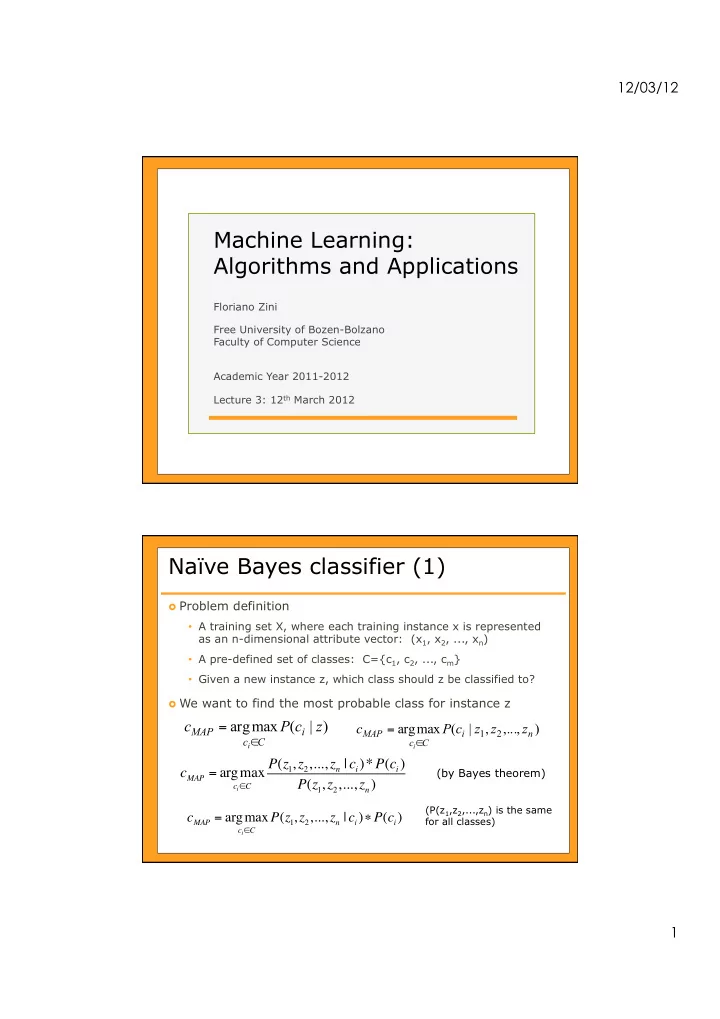

Naïve Bayes classifier (1)

Problem definition

- A training set X, where each training instance x is represented as an n-dimensional attribute vector: (x1, x2, ..., xn)

- A pre-defined set of classes: C={c1, c2, ..., cm}

- Given a new instance z, to which class should z be assigned?

We want to find the most probable class for instance z, i.e. the maximum a posteriori (MAP) class:

$$c_{MAP} = \arg\max_{c_i \in C} P(c_i \mid z)$$

$$c_{MAP} = \arg\max_{c_i \in C} P(c_i \mid z_1, z_2, \ldots, z_n)$$

$$c_{MAP} = \arg\max_{c_i \in C} \frac{P(z_1, z_2, \ldots, z_n \mid c_i)\, P(c_i)}{P(z_1, z_2, \ldots, z_n)}$$

(by Bayes theorem)

$$c_{MAP} = \arg\max_{c_i \in C} P(z_1, z_2, \ldots, z_n \mid c_i)\, P(c_i)$$

($P(z_1, z_2, \ldots, z_n)$ is the same for all classes)
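The MAP decision rule can be illustrated with a minimal Python sketch. The class names, the probability values, and the helper names `map_class` and `posterior` are hypothetical and not from the lecture. The sketch also assumes the evidence P(z1, ..., zn) is obtained by summing P(z1, ..., zn | ci) P(ci) over all classes (law of total probability). It checks that dropping the denominator does not change the selected class.

```python
from typing import Dict

def map_class(likelihood: Dict[str, float], prior: Dict[str, float]) -> str:
    """Return the class c maximising P(z | c) * P(c) (unnormalised posterior)."""
    return max(prior, key=lambda c: likelihood[c] * prior[c])

def posterior(likelihood: Dict[str, float], prior: Dict[str, float]) -> Dict[str, float]:
    """Return the normalised posterior P(c | z) for every class c."""
    # Evidence P(z) via the law of total probability (assumption of this sketch).
    evidence = sum(likelihood[c] * prior[c] for c in prior)
    return {c: likelihood[c] * prior[c] / evidence for c in prior}

# Hypothetical values, chosen only for illustration.
prior = {"c1": 0.6, "c2": 0.4}            # P(ci)
likelihood = {"c1": 0.02, "c2": 0.05}     # P(z1, ..., zn | ci)

c_map = map_class(likelihood, prior)      # argmax without the denominator
post = posterior(likelihood, prior)       # full Bayes theorem
c_map_normalized = max(post, key=post.get)

print(c_map, c_map_normalized, post)
```

Both ways of choosing the class agree, because dividing every score by the same positive constant preserves their ordering.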