Predicting Computer System Failures Using Support Vector Machines

Errin W. Fulp (a), Glenn A. Fink (b), Jereme N. Haack (b)

(a) Wake Forest University, Department of Computer Science, Winston-Salem NC, USA

(b) Pacific Northwest National Laboratory, Richland WA, USA

USENIX Workshop on the Analysis of System Logs December 7, 2008

System Event Prediction 1

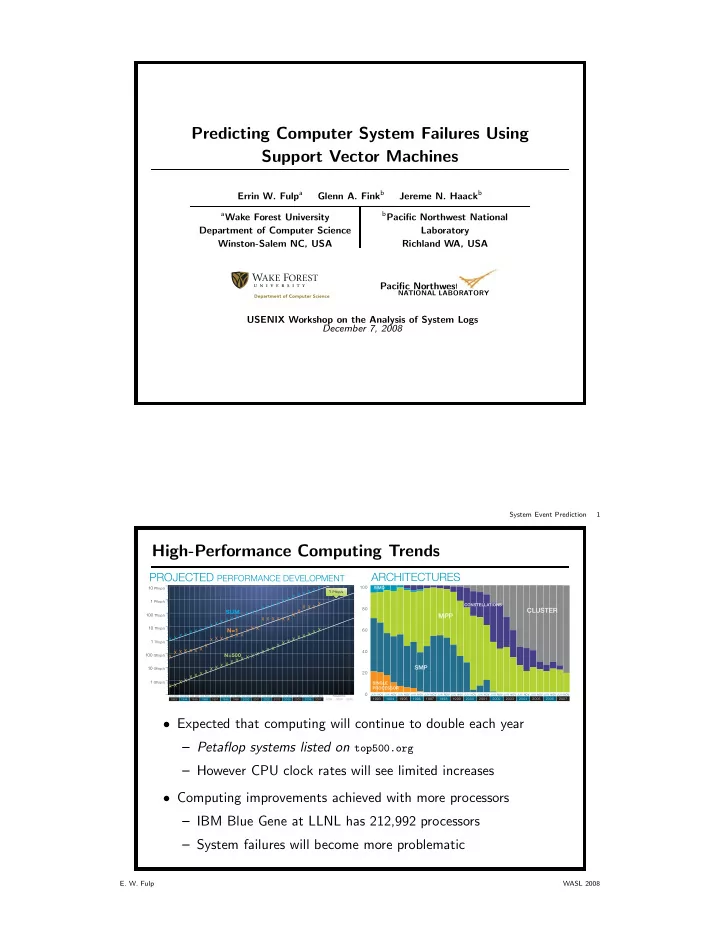

High-Performance Computing Trends

[Figure: Projected Performance Development. Performance (1 Gflop/s to 10 Pflop/s) versus list date (June and November, 1993 to 2010) for the SUM, N=1, and N=500 series, with a projection reaching 1 Pflop/s.]

[Figure: Architectures. Share of each architecture class (Cluster, Constellations, SIMD, MPP, SMP, Single Processor; axis ticks 20 to 100) versus list date (June and November, 1993 to 2007).]

- Computing performance is expected to continue doubling each year

– Petaflop systems are listed on top500.org

– However, CPU clock rates will see limited increases

- Computing improvements are achieved with more processors

– IBM Blue Gene at LLNL has 212,992 processors

– System failures will become more problematic
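
One way to see why failures become more problematic as processor counts grow is a back-of-the-envelope reliability calculation. The sketch below is illustrative only and is not taken from the slides: it assumes processors fail independently with exponentially distributed lifetimes, and the per-processor MTBF of 100 years is a hypothetical figure chosen for the example. Under those assumptions the failure rates add, so the system-wide mean time between failures shrinks in proportion to the number of processors.

```python
HOURS_PER_YEAR = 24 * 365

# Hypothetical per-processor MTBF (an assumption for illustration, not a measured value).
ASSUMED_PROCESSOR_MTBF_HOURS = 100 * HOURS_PER_YEAR


def system_mtbf_hours(component_mtbf_hours: float, n_components: int) -> float:
    """Return the MTBF of a system that fails when any one component fails.

    Assumes independent components with exponentially distributed lifetimes,
    so the system failure rate is the sum of the component failure rates.
    """
    if n_components < 1:
        raise ValueError("n_components must be at least 1")
    if component_mtbf_hours <= 0:
        raise ValueError("component_mtbf_hours must be positive")
    return component_mtbf_hours / n_components


if __name__ == "__main__":
    # 212,992 is the Blue Gene processor count quoted on the slide.
    for n in (1, 1_024, 212_992):
        mtbf = system_mtbf_hours(ASSUMED_PROCESSOR_MTBF_HOURS, n)
        print(f"{n:>7} processors: system MTBF = {mtbf:,.1f} hours")
```

With the assumed 100-year per-processor MTBF, a machine with 212,992 processors would see some processor fail roughly every four hours. Real systems have correlated failures and many non-processor components, so this should be read as an order-of-magnitude argument only.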

E. W. Fulp, WASL 2008