1

Statistical NLP

Spring 2011

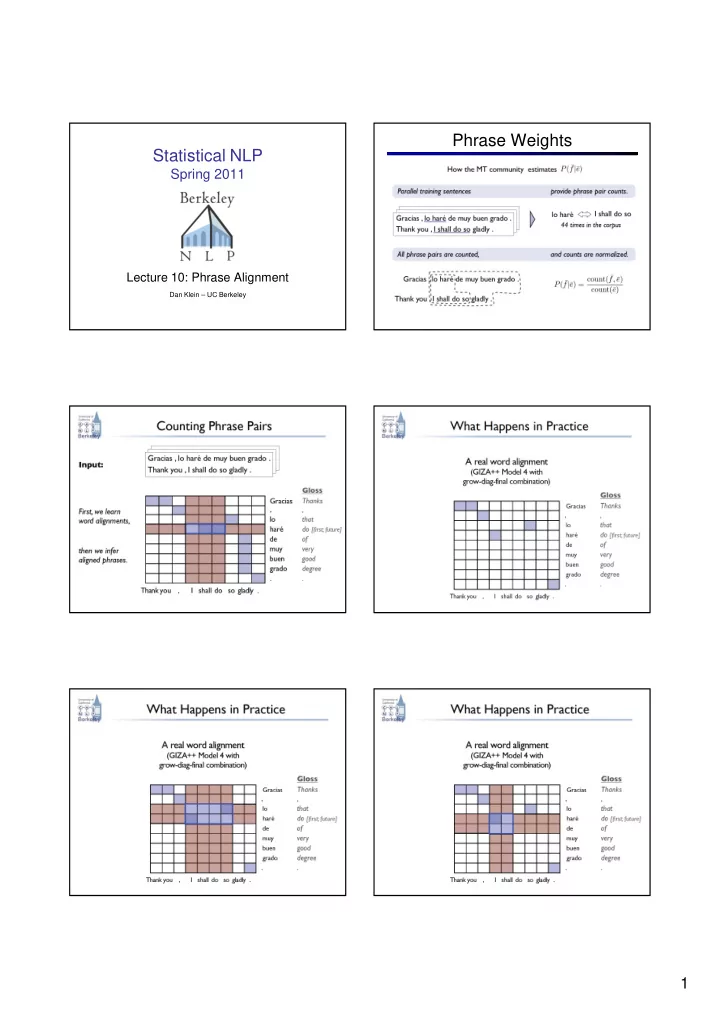

Lecture 10: Phrase Alignment

Dan Klein – UC Berkeley

Phrase Weights Statistical NLP Spring 2011 Lecture 10: Phrase - - PDF document

Phrase Weights Statistical NLP Spring 2011 Lecture 10: Phrase Alignment Dan Klein UC Berkeley 1 Phrase Scoring Phrase Size Phrases do help Learning weights has been tried, several times: But they dont need [Marcu

Dan Klein – UC Berkeley

les chats aiment le poisson cats like fresh fish . . frais .

tried, several times:

a variety of partially understood reasons

all the weight, obvious priors don’t help

In the past two years , a number

US citizens … 过去 两 年 中 , 一 批 美国 公民 … past two year in ,

lots US citizen

Phrase alignment models: Choose a segmentation and a

Past Go over

Underlying assumption: There is a correct phrasal segmentation

In the past two years , a number

US citizens … 过去 两 年 中 , 一 批 美国 公民 … past two year in ,

lots US citizen

Problem 1: Overlapping phrases can be useful (and complementary) Problem 2: Phrases and their sub-phrases can both be useful Hypothesis: This is why models of phrase alignment don’t work well

This talk: Modeling sets of overlapping, multi-scale phrase pairs

In the past two years , a number

US citizens … 过去 两 年 中 , 一 批 美国 公民 … past two year in ,

lots US citizen

Input: sentence pairs Output: extracted phrases

M O T I V A T I O N

In the past two years 过去 两 年 中

past two year in

Sentence Pair Word Alignment Extracted Phrases

M O T I V A T I O N

Sentence Pair Extracted Phrases Conditional model of extraction sets given sentence pairs

In the past two years 过去 两 年 中

1 2 3 4 1 2 3 4 5

In the past two years 过去 两 年 中

1 2 3 4 1 2 3 4 5

Extracted Phrases + ``Word Alignments’’

M O D E L

In the past two years 过去 两 年 中

past two year in

1 2 3 4 1 2 3 4 5

Word-level alignment links Word-to-span alignments Extraction set

M O D E L

In the past two years 过去 两 年 中

past two year in

1 2 3 4 1 2 3 4 5

Sure and possible word links Word-to-span alignments Extraction set

M O D E L

In the past two years 过去 两 年 中

1 2 3 4 1 2 3 4 5

Features on sure links Features on all bispans

F E A T U R E S

过 地球

go over Earth

the Earth Some features on sure links HMM posteriors Presence in dictionary Numbers & punctuation Features on bispans HMM phrase table features: e.g., phrase relative frequencies Lexical indicator features for phrases with common words Monolingual phrase features: e.g., “the _____” Shape features: e.g., Chinese character counts

T R A I N I N G

Hand Aligned: Sure and possible word links Word-to-span alignments Extraction set

Deterministic: A bispan is included iff every word within the bispan aligns within the bispan Deterministic: Find min and max alignment index for each word

T R A I N I N G

Loss function: F-score of bispan errors (precision & recall) Training Criterion: Minimal change to w such that the gold is preferred to the guess by a loss-scaled margin Gold (annotated) Guess (arg max wɸ)

I N F E R E N C E

ITG captures some bispans

R E S U L T S

Chinese-to-English newswire Parallel corpus: 11.3 million words; sentences length ≤ 40 MT systems: Tuned and tested on NIST ‘04 and ‘05 Supervised data: 150 training & 191 test sentences (NIST ‘02) Unsupervised Model: Jointly trained HMM (Berkeley Aligner)

R E S U L T S

HMM: ITG: Coarse: State-of-the-art unsupervised baseline Joint training & competitive posterior decoding Source of many features for supervised models Supervised ITG aligner with block terminals State-of-the-art supervised baseline Re-implementation of Haghighi et al., 2009 Supervised block ITG + possible alignments Coarse pass of full extraction set model

R E S U L T S 84.7 84.0 84.4 82.2 84.2 83.1 83.4 83.8 83.6 84.0 76.9 80.4 Precision Recall 1 - AER HMM ITG Coarse Full

R E S U L T S 69.0 74.2 71.6 74.0 70.0 72.9 71.4 72.8 75.8 62.3 68.4 62.8 69.5 59.5 64.1 59.9 Precision Recall F1 F5 HMM ITG Coarse Full

R E S U L T S 34.4 35.9 34.2 35.7 33.6 34.7 33.2 34.5 31 32 33 34 35 36 37 Moses Joshua HMM ITG Coarse Full

Supervised conditions also included HMM alignments