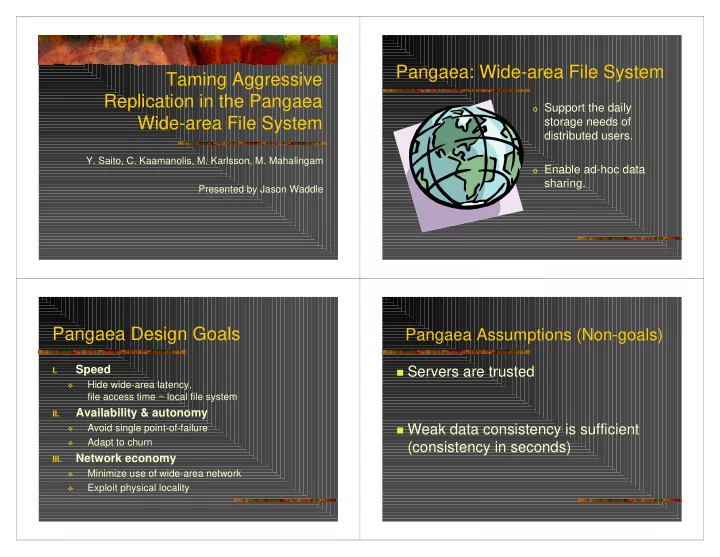

Taming Aggressive Replication in the Pangaea Wide-area File System

- Y. Saito, C. Karamanolis, M. Karlsson, M. Mahalingam

Presented by Jason Waddle

Pangaea: Wide-area File System

- Support the daily storage needs of distributed users.

- Enable ad-hoc data sharing.

Pangaea Design Goals

I. Speed

- Hide wide-area latency, file access time ~ local file system

II. Availability & autonomy

- Avoid single point-of-failure

- Adapt to churn

III. Network economy

- Minimize use of wide-area network

- Exploit physical locality