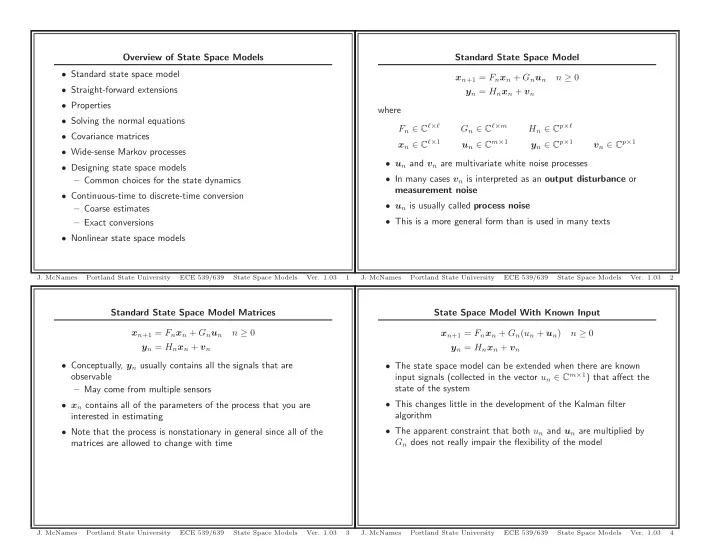

Standard State Space Model Matrices

$$
\begin{aligned}
x_{n+1} &= F_n x_n + G_n u_n, \qquad n \ge 0 \\
y_n &= H_n x_n + v_n
\end{aligned}
$$

- Conceptually, $y_n$ usually contains all the signals that are observable
  – May come from multiple sensors
- $x_n$ contains all of the parameters of the process that you are interested in estimating
- Note that the process is nonstationary in general, since all of the matrices are allowed to change with time
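As a rough illustration of the multiple-sensor remark, the sketch below stacks per-sensor measurement matrices into a single $H_n$, so that $y_n$ collects every observable signal. The two-element state (position, velocity), the three sensors, and the helper name `stack_sensors` are all hypothetical choices made for this example; they are not part of the slides.

```python
import numpy as np

def stack_sensors(H_blocks):
    """Stack per-sensor measurement matrices (each with l columns) into one H_n."""
    return np.vstack(H_blocks)

# Hypothetical state x_n = [position, velocity], so l = 2.
H_pos_a = np.array([[1.0, 0.0]])  # sensor A observes position
H_pos_b = np.array([[1.0, 0.0]])  # sensor B also observes position
H_vel = np.array([[0.0, 1.0]])    # sensor C observes velocity

H = stack_sensors([H_pos_a, H_pos_b, H_vel])  # shape (p, l) = (3, 2)

x = np.array([[3.0], [-1.0]])  # state x_n, shape (l, 1)
v = np.zeros((3, 1))           # measurement noise v_n, zeroed here for clarity
y = H @ x + v                  # stacked observation y_n, shape (p, 1)
```

With the noise set to zero, `y` simply repeats the position twice and then lists the velocity; in practice each row would carry its own measurement noise.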

J. McNames, Portland State University, ECE 539/639, State Space Models, Ver. 1.03


Overview of State Space Models

- Standard state space model

- Straightforward extensions

- Properties

- Solving the normal equations

- Covariance matrices

- Wide-sense Markov processes

- Designing state space models

– Common choices for the state dynamics

- Continuous-time to discrete-time conversion

– Coarse estimates

– Exact conversions

- Nonlinear state space models


State Space Model With Known Input

$$
\begin{aligned}
x_{n+1} &= F_n x_n + G_n (u_n + \bar{u}_n), \qquad n \ge 0 \\
y_n &= H_n x_n + v_n
\end{aligned}
$$

- The state space model can be extended when there are known input signals that affect the state of the system; these are collected in a vector written here as $\bar{u}_n \in \mathbb{C}^{m \times 1}$ to distinguish it from the process noise $u_n$
- This changes little in the development of the Kalman filter algorithm
- The apparent constraint that both $u_n$ and $\bar{u}_n$ are multiplied by $G_n$ does not really impair the flexibility of the model
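One way to see why sharing $G_n$ costs little flexibility is an augmentation argument, sketched below as an assumption rather than as the slides' own derivation. Suppose the known input should enter through its own gain matrix (called `B` here, a hypothetical name). Concatenating the two gains column-wise and zero-padding the process noise and the known input gives the same state update with a single shared gain.

```python
import numpy as np

rng = np.random.default_rng(1)
l, m, k = 3, 2, 1  # state, process-noise, and known-input dimensions (illustrative)

F = rng.standard_normal((l, l))
G = rng.standard_normal((l, m))  # gain on the process noise
B = rng.standard_normal((l, k))  # hypothetical separate gain on the known input

x = rng.standard_normal(l)        # current state x_n
u = rng.standard_normal(m)        # process noise sample u_n
u_known = rng.standard_normal(k)  # known input sample

# Update with separate gains for the noise and the known input.
x_next_separate = F @ x + G @ u + B @ u_known

# Same update written with one shared gain: G_aug = [G  B],
# with each input zero-padded so it only excites its own columns.
G_aug = np.hstack([G, B])
u_aug = np.concatenate([u, np.zeros(k)])
u_known_aug = np.concatenate([np.zeros(m), u_known])
x_next_shared = F @ x + G_aug @ (u_aug + u_known_aug)
```

Both expressions produce the same next state, at the price of a larger input dimension ($m + k$ in this sketch).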


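Standard State Space Model

$$
\begin{aligned}
x_{n+1} &= F_n x_n + G_n u_n, \qquad n \ge 0 \\
y_n &= H_n x_n + v_n
\end{aligned}
$$

where

$$
\begin{aligned}
F_n &\in \mathbb{C}^{\ell \times \ell} & G_n &\in \mathbb{C}^{\ell \times m} & H_n &\in \mathbb{C}^{p \times \ell} \\
x_n &\in \mathbb{C}^{\ell \times 1} & u_n &\in \mathbb{C}^{m \times 1} & y_n &\in \mathbb{C}^{p \times 1} & v_n &\in \mathbb{C}^{p \times 1}
\end{aligned}
$$

- $u_n$ and $v_n$ are multivariate white noise processes
- In many cases $v_n$ is interpreted as an output disturbance or measurement noise
- $u_n$ is usually called process noise
- This is a more general form than is used in many texts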
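The recursion above can be simulated directly. The following is a minimal sketch, assuming real-valued signals for simplicity (the slides allow complex ones) and representing the time-varying matrices as callables of the index $n$. The function name `simulate`, the particular matrices, and the Gaussian noise are illustrative assumptions, not taken from the slides.

```python
import numpy as np

def simulate(F, G, H, x0, u, v):
    """Simulate x_{n+1} = F_n x_n + G_n u_n and y_n = H_n x_n + v_n.

    F, G, H are callables mapping the time index n to a matrix.
    x0 has shape (l,), u has shape (N, m), v has shape (N, p).
    Returns the states with shape (N + 1, l) and outputs with shape (N, p).
    """
    N = u.shape[0]
    x = np.zeros((N + 1, x0.shape[0]))
    y = np.zeros((N, v.shape[1]))
    x[0] = x0
    for n in range(N):
        y[n] = H(n) @ x[n] + v[n]             # measurement equation
        x[n + 1] = F(n) @ x[n] + G(n) @ u[n]  # state update
    return x, y

# Illustrative model with l = 2, m = 1, p = 1 and a slowly varying F_n.
F = lambda n: np.array([[1.0, 1.0], [0.0, 0.9 + 0.05 * np.cos(0.1 * n)]])
G = lambda n: np.array([[0.0], [1.0]])
H = lambda n: np.array([[1.0, 0.0]])

rng = np.random.default_rng(0)
N = 50
u = rng.standard_normal((N, 1))        # process noise samples
v = 0.1 * rng.standard_normal((N, 1))  # measurement noise samples
x0 = np.zeros(2)

x, y = simulate(F, G, H, x0, u, v)
```

Passing the matrices as callables keeps the time dependence explicit; a time-invariant model is the special case where each callable ignores `n`.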
