Classification Losses & Risks Discriminant Functions Association Rules

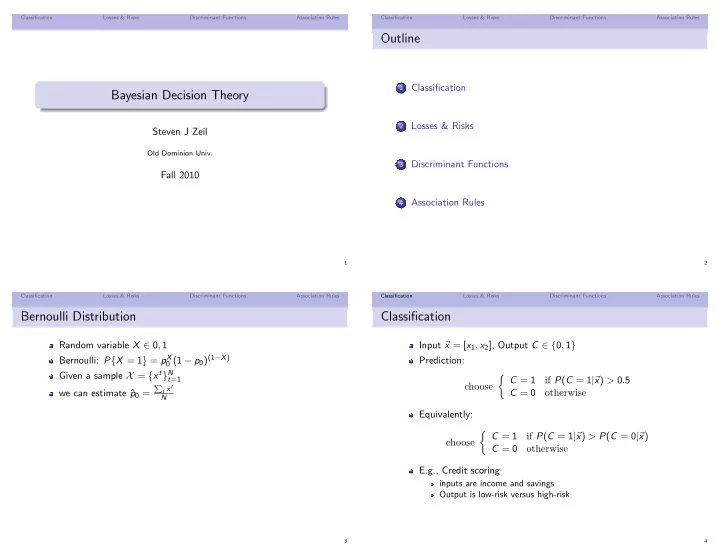

Bayesian Decision Theory

Steven J Zeil

Old Dominion Univ.

Fall 2010


Outline

1. Classification
2. Losses & Risks
3. Discriminant Functions
4. Association Rules


Bernoulli Distribution

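Random variable $X \in \{0, 1\}$

Bernoulli: $P\{X = x\} = p_0^{x} (1 - p_0)^{1 - x}$ for $x \in \{0, 1\}$, so $P\{X = 1\} = p_0$

Given a sample $\mathcal{X} = \{x^t\}_{t=1}^{N}$, we can estimate $\hat{p}_0 = \frac{\sum_t x^t}{N}$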
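The estimate above is just the fraction of ones in the sample. A minimal sketch in Python, assuming the sample is a plain list of 0/1 values; the function names and the data are hypothetical and chosen only for illustration:

```python
def estimate_p0(sample):
    """Estimate p0 as (sum of x^t) / N, the fraction of ones in the sample."""
    if not sample:
        raise ValueError("sample must be non-empty")
    return sum(sample) / len(sample)


def bernoulli_pmf(x, p0):
    """P{X = x} = p0**x * (1 - p0)**(1 - x) for x in {0, 1}."""
    if x not in (0, 1):
        raise ValueError("x must be 0 or 1")
    return p0 ** x * (1 - p0) ** (1 - x)


# Hypothetical sample of N = 8 observations (made-up data): five ones, three zeros.
sample = [1, 0, 1, 1, 0, 1, 0, 1]
p0_hat = estimate_p0(sample)
print(p0_hat)                    # 5 / 8
print(bernoulli_pmf(1, p0_hat))  # estimated P{X = 1}
print(bernoulli_pmf(0, p0_hat))  # estimated P{X = 0}
```

With five ones out of eight observations, the estimate is 5/8, and the two probabilities sum to 1.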


Classification

Input $\mathbf{x} = [x_1, x_2]$, output $C \in \{0, 1\}$

Prediction: choose $C = 1$ if $P(C = 1 \mid \mathbf{x}) > 0.5$, and $C = 0$ otherwise

Equivalently: choose $C = 1$ if $P(C = 1 \mid \mathbf{x}) > P(C = 0 \mid \mathbf{x})$, and $C = 0$ otherwise

E.g., credit scoring: the inputs are income and savings; the output is low-risk versus high-risk
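The two-class decision rule can be sketched in Python as follows. This is only an illustration: it assumes the posterior $P(C = 1 \mid \mathbf{x})$ has already been computed by some model and is passed in as a number, and the function names and the posterior values are hypothetical.

```python
def classify(p_c1_given_x):
    """Choose C = 1 if P(C=1|x) > 0.5, otherwise C = 0."""
    if not 0.0 <= p_c1_given_x <= 1.0:
        raise ValueError("posterior must lie in [0, 1]")
    return 1 if p_c1_given_x > 0.5 else 0


def classify_by_comparison(p_c1_given_x):
    """Equivalent form: choose C = 1 if P(C=1|x) > P(C=0|x), otherwise C = 0."""
    if not 0.0 <= p_c1_given_x <= 1.0:
        raise ValueError("posterior must lie in [0, 1]")
    p_c0_given_x = 1.0 - p_c1_given_x  # the two posteriors sum to 1
    return 1 if p_c1_given_x > p_c0_given_x else 0


# Made-up posteriors for three hypothetical applicants.
posteriors = [0.8, 0.3, 0.5]
decisions = [classify(p) for p in posteriors]
print(decisions)
```

Because the two posteriors sum to 1, comparing $P(C = 1 \mid \mathbf{x})$ against 0.5 and comparing it against $P(C = 0 \mid \mathbf{x})$ give the same decision. A tie at exactly 0.5 falls to $C = 0$ under the strict inequality.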
