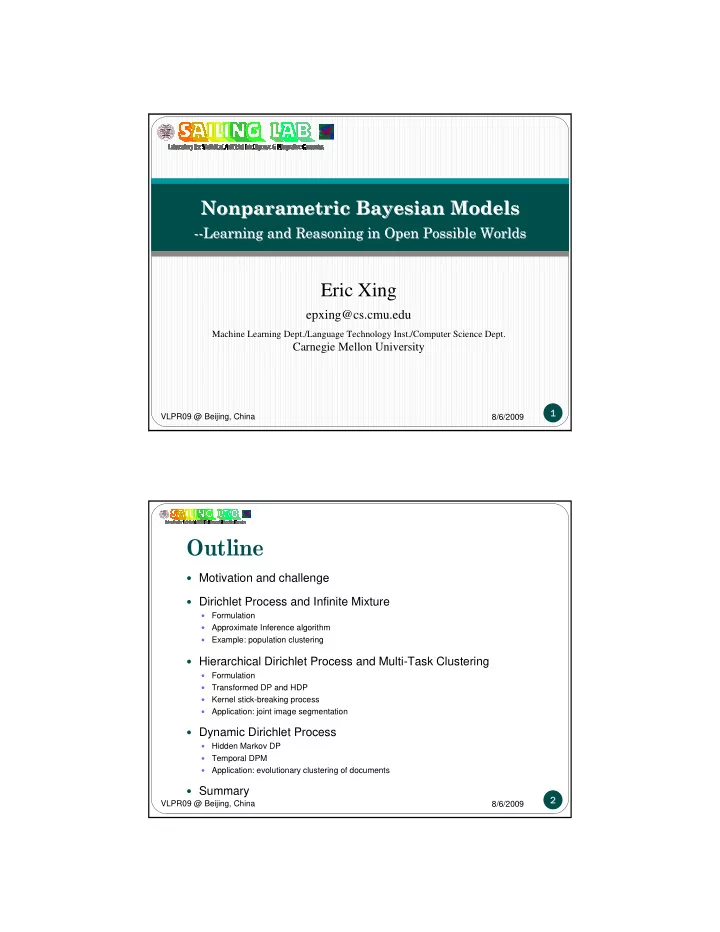

8/6/2009 VLPR09 @ Beijing, China

Eric Xing

epxing@cs.cmu.edu

Machine Learning Dept./Language Technology Inst./Computer Science Dept.

Carnegie Mellon University

Nonparametric Bayesian Models

Learning and Reasoning in Open Possible Worlds

Outline

Motivation and challenge

Dirichlet Process and Infinite Mixture

- Formulation

- Approximate Inference algorithm

- Example: population clustering

Hierarchical Dirichlet Process and Multi-Task Clustering

- Formulation

- Transformed DP and HDP

- Kernel stick-breaking process

- Application: joint image segmentation

Dynamic Dirichlet Process

- Hidden Markov DP

- Temporal DPM

- Application: evolutionary clustering of documents

Summary