
Neural Networks

July 7, 2005, CS 486/686, University of Waterloo

CS486/686 Lecture Slides (c) 2005 P. Poupart


Outline

- Neural networks

– Perceptron
– Supervised learning algorithms for neural networks

- Reading: R&N Ch 20.5


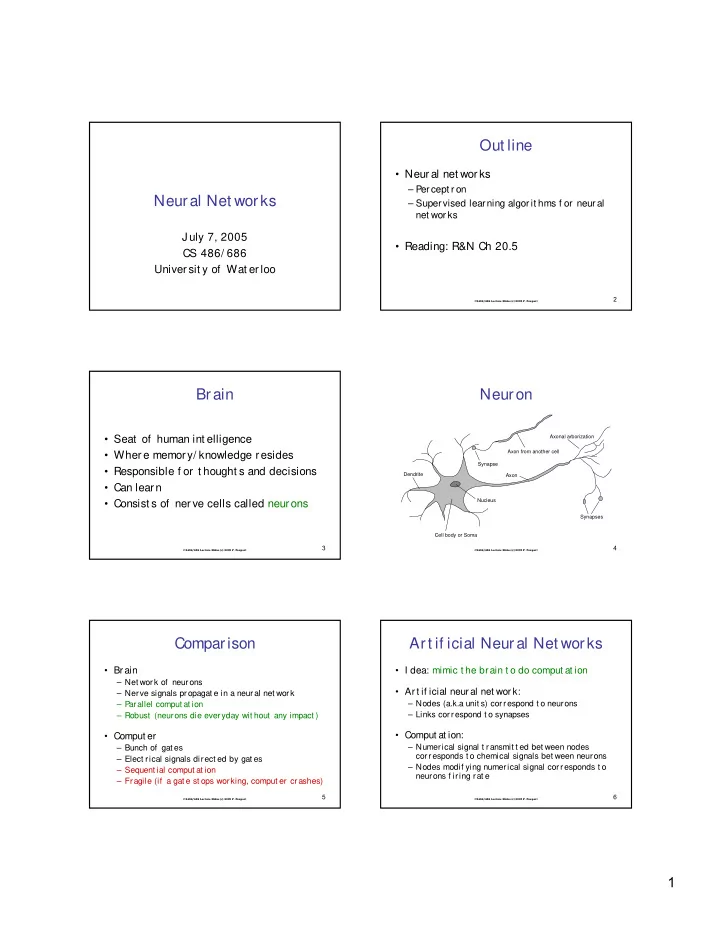

Brain

- Seat of human intelligence

- Where memory/knowledge resides

- Responsible for thoughts and decisions

- Can learn

- Consists of nerve cells called neurons


Neuron

[Figure: diagram of a neuron labeling the cell body (soma), nucleus, dendrites, axon, axonal arborization, and synapses, including a synapse with an axon from another cell]


Comparison

- Brain

– Network of neurons
– Nerve signals propagate in a neural network
– Parallel computation
– Robust (neurons die every day without any impact)

- Computer

– Bunch of gates
– Electrical signals directed by gates
– Sequential computation
– Fragile (if a gate stops working, the computer crashes)


Artificial Neural Networks

- Idea: mimic the brain to do computation

- Artificial neural network:

– Nodes (a.k.a. units) correspond to neurons
– Links correspond to synapses
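To make the node/link correspondence concrete, here is a minimal sketch. It assumes the standard R&N formulation, in which each link carries a numeric weight and a unit applies an activation function g to the weighted sum of its incoming activations. That computation rule is an assumption here, not something stated on this slide. The names `Links`, `step`, and `unit_output` are hypothetical, and the threshold activation and the constant "bias" input node are illustrative choices.

```python
from typing import Callable, Dict, Tuple

# Hypothetical representation: each link (synapse) is a weighted edge (src, dst) -> weight.
Links = Dict[Tuple[str, str], float]


def step(x: float) -> float:
    """Threshold activation: the unit fires (1.0) when its weighted input is positive."""
    return 1.0 if x > 0 else 0.0


def unit_output(node: str, activations: Dict[str, float], links: Links,
                g: Callable[[float], float] = step) -> float:
    """Activation of `node`: g applied to the weighted sum over its incoming links."""
    total = sum(w * activations[src]
                for (src, dst), w in links.items() if dst == node)
    return g(total)


# Toy network (assumed weights): two inputs plus a constant bias node feed one unit.
links: Links = {("x1", "out"): 1.0, ("x2", "out"): 1.0, ("bias", "out"): -1.5}

if __name__ == "__main__":
    for x1 in (0.0, 1.0):
        for x2 in (0.0, 1.0):
            acts = {"x1": x1, "x2": x2, "bias": 1.0}
            print(x1, x2, unit_output("out", acts, links))
```

With these assumed weights, the weighted sum exceeds zero only when both inputs are 1 (1 + 1 - 1.5 = 0.5), so this single unit behaves like a logical AND. Changing the link weights changes the function it computes, which is the quantity that learning algorithms adjust.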

- Computation: