FEATURE SELECTION USING ANT COLONY OPTIMIZATION: APPLICATIONS IN HEALTH CARE

João M. C. Sousa1

jmsousa@ist.utl.pt

S. M. Vieira1, S. N. Finkelstein2,3, A. S. Fialho1,2, F. Cismondi1,2, S. R. Reti3 and M. D. Howell3

1 Technical University of Lisbon, Instituto Superior Técnico, Dept. of Mechanical Engineering, CIS/IDMEC – LAETA, Av. Rovisco Pais, 1049-001 Lisbon, Portugal

2 Massachusetts Institute of Technology, Engineering Systems Division, 77 Massachusetts Avenue, 02139 Cambridge, MA, USA

3 Division of Clinical Informatics, Department of Medicine, Beth Israel Deaconess Medical Centre, Harvard Medical School, Boston, MA, USA

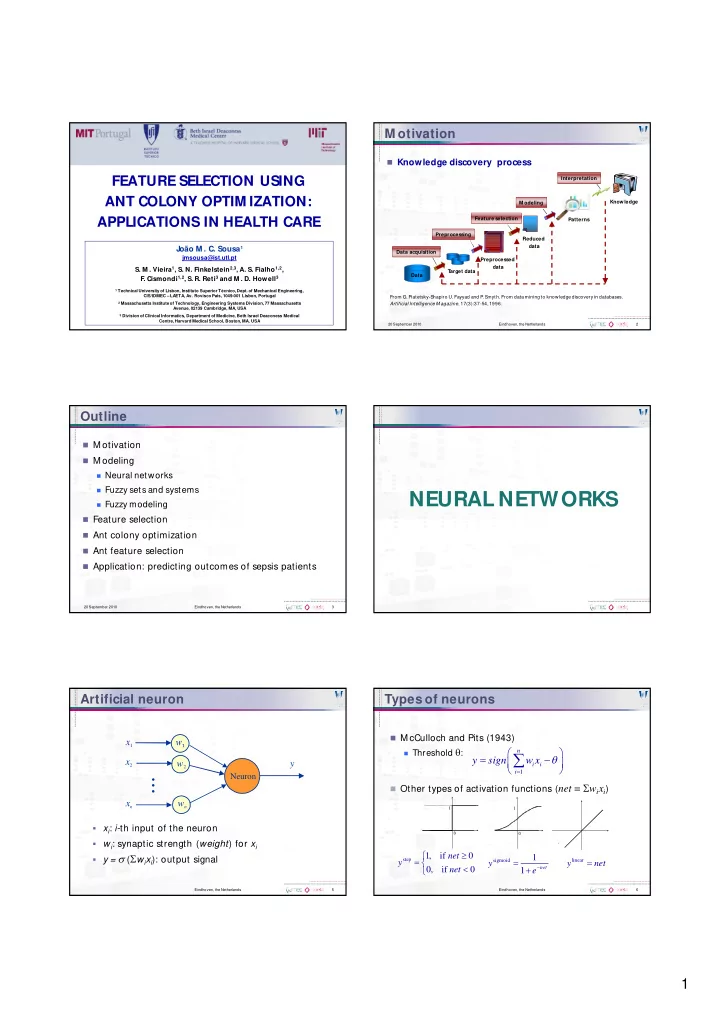

Motivation

Knowledge discovery process

20 September 2010, Eindhoven, the Netherlands

[Figure: Data → (data acquisition) → Target data → (preprocessing) → Preprocessed data → (feature selection) → Reduced data → (modeling) → Patterns → (interpretation) → Knowledge]

From U. Fayyad, G. Piatetsky-Shapiro and P. Smyth. From data mining to knowledge discovery in databases. AI Magazine, 17(3):37-54, 1996.

Outline

- Motivation
- Modeling
  - Neural networks
  - Fuzzy sets and systems
  - Fuzzy modeling
- Feature selection
- Ant colony optimization
- Ant feature selection
- Application: predicting outcomes of sepsis patients


NEURAL NETWORKS


Artificial neuron

- $x_i$: $i$-th input of the neuron
- $w_i$: synaptic strength (weight) for $x_i$
- $y = \varphi\left(\sum_{i=1}^{n} w_i x_i\right)$: output signal, where $\varphi$ is the activation function

[Figure: a neuron receiving inputs x1, x2, ..., xn through weights w1, w2, ..., wn and producing the output y]
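The neuron's output $y = \varphi\left(\sum_{i=1}^{n} w_i x_i\right)$, where $\varphi$ is an activation function, can be sketched in a few lines of Python. This is a minimal illustration, assuming plain Python lists for the inputs and weights; the function name `neuron_output` and the parameter `activation` are hypothetical, and the activation is passed in as an argument because the neuron model itself leaves it generic.

```python
import math
from typing import Callable, Sequence


def neuron_output(
    x: Sequence[float],
    w: Sequence[float],
    activation: Callable[[float], float],
) -> float:
    """Return y = activation(sum_i w_i * x_i) for a single artificial neuron."""
    if len(x) != len(w):
        raise ValueError("each input x_i needs exactly one weight w_i")
    # Weighted sum of the inputs: the neuron's net input.
    net = sum(wi * xi for wi, xi in zip(w, x))
    # The activation function maps the net input to the output signal y.
    return activation(net)


if __name__ == "__main__":
    # Hypothetical example: three inputs, hand-picked weights, tanh activation.
    y = neuron_output([0.5, -1.0, 2.0], [0.4, 0.3, 0.1], math.tanh)
    print(y)
```

Swapping `math.tanh` for any other single-argument function changes the neuron type without touching the weighted-sum logic.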


Types of neurons

McCulloch and Pitts (1943)

Threshold: $y = \operatorname{sign}\left(\sum_{i=1}^{n} w_i x_i\right)$
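A threshold neuron of the form $y = \operatorname{sign}\left(\sum_{i=1}^{n} w_i x_i\right)$ can be sketched as follows. This is an illustrative example under stated assumptions: the names `sign` and `threshold_neuron` are hypothetical, mapping $\mathrm{net} = 0$ to $+1$ is an assumed convention, and the weights below are hand-picked so that the neuron computes a logical AND on bipolar inputs ($\pm 1$), using a constant input $x_0 = 1$ whose weight acts as the threshold.

```python
from typing import Sequence


def sign(net: float) -> int:
    """Bipolar sign function; sending net = 0 to +1 is an assumed convention."""
    return 1 if net >= 0 else -1


def threshold_neuron(x: Sequence[float], w: Sequence[float]) -> int:
    """McCulloch-Pitts style threshold unit: y = sign(sum_i w_i * x_i)."""
    if len(x) != len(w):
        raise ValueError("each input x_i needs exactly one weight w_i")
    net = sum(wi * xi for wi, xi in zip(w, x))
    return sign(net)


if __name__ == "__main__":
    # Hypothetical weights for a bipolar AND: x[0] = 1 is a constant input,
    # so w[0] = -1 shifts the decision threshold for the two real inputs.
    w_and = [-1.0, 1.0, 1.0]
    for x1 in (-1, 1):
        for x2 in (-1, 1):
            print(x1, x2, "->", threshold_neuron([1, x1, x2], w_and))
```

None of the four input patterns gives $\mathrm{net} = 0$, so the AND example does not depend on how $\operatorname{sign}(0)$ is defined.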

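- Other types of activation functions, with $\mathrm{net} = \sum_{i=1}^{n} w_i x_i$:
  - Step: $y = \begin{cases} 1, & \text{if } \mathrm{net} \ge 0 \\ 0, & \text{if } \mathrm{net} < 0 \end{cases}$
  - Sigmoid: $y = \dfrac{1}{1 + e^{-\mathrm{net}}}$
  - Linear: $y = \mathrm{net}$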
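The step, sigmoid, and linear activations applied to $\mathrm{net} = \sum_{i=1}^{n} w_i x_i$ can be compared side by side with a short Python sketch. The function names are hypothetical; the step function is assumed to switch at $\mathrm{net} = 0$ and to output 1 at exactly 0, and the sigmoid is the logistic function $1/(1 + e^{-\mathrm{net}})$, written in a numerically stable form so large negative inputs do not overflow `math.exp`.

```python
import math


def step(net: float) -> float:
    """Step activation: 1 if net >= 0, else 0 (threshold at 0 is assumed)."""
    return 1.0 if net >= 0 else 0.0


def sigmoid(net: float) -> float:
    """Logistic sigmoid 1 / (1 + exp(-net)), with outputs in [0, 1]."""
    if net >= 0:
        return 1.0 / (1.0 + math.exp(-net))
    # Equivalent form for negative net; avoids exp() of a large positive number.
    e = math.exp(net)
    return e / (1.0 + e)


def linear(net: float) -> float:
    """Linear (identity) activation: y = net."""
    return net


if __name__ == "__main__":
    for net in (-2.0, 0.0, 2.0):
        print(
            f"net={net:+.1f}  step={step(net):.0f}  "
            f"sigmoid={sigmoid(net):.3f}  linear={linear(net):+.1f}"
        )
```

The sigmoid behaves like a smoothed step: it approaches 0 and 1 for large negative and positive net input, and it equals 0.5 at $\mathrm{net} = 0$.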