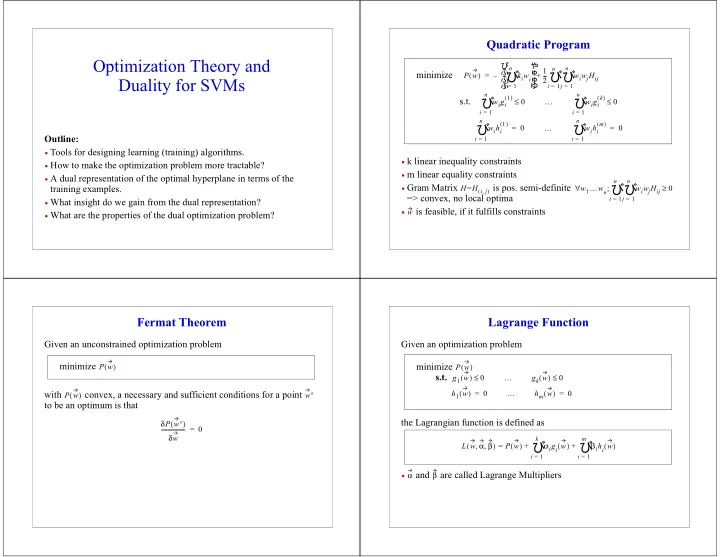

Optimization Theory and Duality for SVMs

Outline:

- Tools for designing learning (training) algorithms.

- How to make the optimization problem more tractable?

- A dual representation of the optimal hyperplane in terms of the training examples.

- What insight do we gain from the dual representation?

- What are the properties of the dual optimization problem?

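Quadratic Program

$$
\begin{aligned}
\text{minimize} \quad & P(w) = -\sum_{i=1}^{n} k_i w_i + \frac{1}{2} \sum_{i=1}^{n} \sum_{j=1}^{n} w_i w_j H_{ij} \\
\text{s.t.} \quad & \sum_{i=1}^{n} w_i g_i^{(1)} \le 0, \;\dots,\; \sum_{i=1}^{n} w_i g_i^{(k)} \le 0 \\
& \sum_{i=1}^{n} w_i h_i^{(1)} = 0, \;\dots,\; \sum_{i=1}^{n} w_i h_i^{(m)} = 0
\end{aligned}
$$

- $k$ linear inequality constraints

- $m$ linear equality constraints

- Gram matrix $H = (H_{ij})$ is pos. semi-definite,

$$
\forall\, w_1 \dots w_n : \quad \sum_{i=1}^{n} \sum_{j=1}^{n} w_i w_j H_{ij} \ge 0
$$

=> convex, no local optima

- $w$ is feasible if it fulfills the constraints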
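The quadratic program above can be made concrete with a small numerical sketch. The code below is illustrative only: the function names (`qp_objective`, `is_psd`, `is_feasible`), the matrices `G` and `A_eq` that stack the inequality and equality coefficient vectors row by row, and all numbers are assumptions, not part of the slides. It evaluates $P(w)$, checks that a symmetric $H$ is positive semi-definite through its eigenvalues, and tests whether a point $w$ is feasible.

```python
import numpy as np

def qp_objective(w, k, H):
    """P(w) = -sum_i k_i w_i + 1/2 sum_ij w_i w_j H_ij."""
    return -k @ w + 0.5 * w @ H @ w

def is_psd(H, tol=1e-10):
    """A symmetric H is pos. semi-definite iff all eigenvalues are >= 0."""
    return bool(np.all(np.linalg.eigvalsh(H) >= -tol))

def is_feasible(w, G, A_eq, tol=1e-9):
    """Rows of G are the inequality vectors g^(1..k) (G w <= 0);
    rows of A_eq are the equality vectors h^(1..m) (A_eq w = 0)."""
    return bool(np.all(G @ w <= tol) and np.all(np.abs(A_eq @ w) <= tol))

# Hypothetical toy problem with n = 2, one inequality and one equality.
H = np.array([[2.0, 0.0],
              [0.0, 1.0]])        # symmetric Gram matrix
k = np.array([1.0, 1.0])          # linear coefficients k_i
G = np.array([[-1.0, 0.0]])       # -w_1 <= 0, i.e. w_1 >= 0
A_eq = np.array([[1.0, -1.0]])    # w_1 - w_2 = 0

w = np.array([1.0, 1.0])
print(is_psd(H))                  # H has eigenvalues 1 and 2
print(qp_objective(w, k, H))      # -2 + 0.5 * (2 + 1) = -0.5
print(is_feasible(w, G, A_eq))    # both constraints hold at w
```

The eigenvalue test relies on $H$ being symmetric, which holds for a Gram matrix; for a general matrix one would check the symmetric part $(H + H^\top)/2$ instead.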

Fermat Theorem

Given an unconstrained optimization problem

$$
\text{minimize} \quad P(w)
$$

with $P(w)$ convex, a necessary and sufficient condition for a point $w^\circ$ to be an optimum is that

$$
\frac{\partial P(w^\circ)}{\partial w} = 0
$$
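As a hedged illustration of this condition, consider the unconstrained quadratic objective $P(w) = -k^\top w + \frac{1}{2} w^\top H w$ with a symmetric positive definite $H$ (an assumption made here so that the optimum is unique). Its gradient is $-k + Hw$, so setting it to zero amounts to solving the linear system $Hw = k$. The values of `H` and `k` below are made up for the example.

```python
import numpy as np

# Hypothetical problem data: H is symmetric positive definite.
H = np.array([[2.0, 0.5],
              [0.5, 1.0]])
k = np.array([1.0, 1.0])

def P(w):
    """Convex quadratic objective P(w) = -k.w + 1/2 w^T H w."""
    return -k @ w + 0.5 * w @ H @ w

def grad_P(w):
    """Gradient of P for symmetric H: dP/dw = -k + H w."""
    return -k + H @ w

# Stationarity condition: grad_P(w_opt) = 0  <=>  H w_opt = k.
w_opt = np.linalg.solve(H, k)
print(np.allclose(grad_P(w_opt), 0.0))

# Sanity check: random perturbations never decrease the objective.
rng = np.random.default_rng(0)
perturbations = rng.normal(size=(100, 2))
no_improvement = all(P(w_opt) <= P(w_opt + d) + 1e-12 for d in perturbations)
print(no_improvement)
```

The perturbation check is not a proof, only a numerical confirmation that the stationary point behaves like a minimum, as convexity guarantees.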

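Lagrange Function

Given an optimization problem

$$
\begin{aligned}
\text{minimize} \quad & P(w) \\
\text{s.t.} \quad & g_1(w) \le 0, \;\dots,\; g_k(w) \le 0 \\
& h_1(w) = 0, \;\dots,\; h_m(w) = 0
\end{aligned}
$$

the Lagrangian function is defined as

$$
L(w, \alpha, \beta) = P(w) + \sum_{i=1}^{k} \alpha_i g_i(w) + \sum_{i=1}^{m} \beta_i h_i(w)
$$

$\alpha$ and $\beta$ are called Lagrange multipliers.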
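The definition of the Lagrangian translates almost directly into code. The following is a minimal sketch, assuming the objective and constraints are given as Python callables; the helper name `lagrangian` and the toy objective and constraints are hypothetical and chosen only for illustration.

```python
import numpy as np

def lagrangian(P, gs, hs, w, alpha, beta):
    """L(w, alpha, beta) = P(w) + sum_i alpha_i g_i(w) + sum_i beta_i h_i(w).

    gs: list of inequality constraint functions (g_i(w) <= 0)
    hs: list of equality constraint functions (h_i(w) = 0)
    """
    ineq_term = sum(a * g(w) for a, g in zip(alpha, gs))
    eq_term = sum(b * h(w) for b, h in zip(beta, hs))
    return P(w) + ineq_term + eq_term

# Hypothetical toy problem: minimize 1/2 ||w||^2
# s.t. 1 - w_1 <= 0 (i.e. w_1 >= 1) and w_1 - w_2 = 0.
P = lambda w: 0.5 * w @ w
gs = [lambda w: 1.0 - w[0]]
hs = [lambda w: w[0] - w[1]]

w = np.array([2.0, 2.0])      # a feasible point
alpha = np.array([0.5])       # multiplier for the inequality
beta = np.array([-1.0])       # multiplier for the equality

L_val = lagrangian(P, gs, hs, w, alpha, beta)
print(L_val)                  # 4 + 0.5 * (-1) + (-1) * 0 = 3.5
```

In this example $w$ is feasible and $\alpha_1 \ge 0$ (the usual sign convention, which this excerpt does not state explicitly), so the inequality term is non-positive, the equality term vanishes, and the Lagrangian value does not exceed $P(w) = 4$.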