
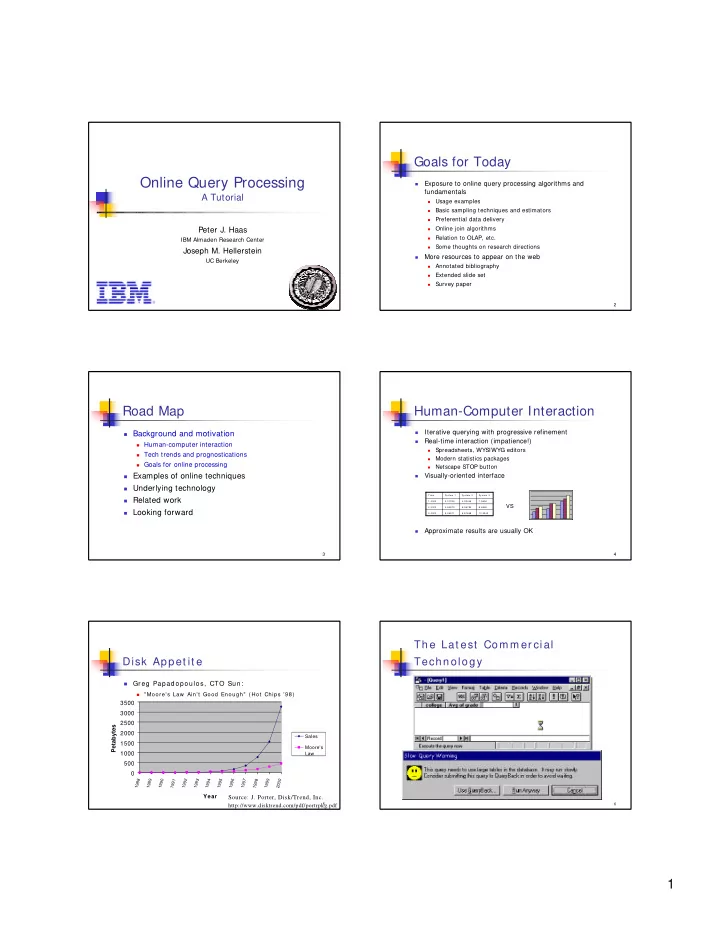

Online Query Processing

A Tutorial

Peter J. Haas

IBM Almaden Research Center

Joseph M. Hellerstein

UC Berkeley


Goals for Today

- Exposure to online query processing algorithms and fundamentals
  - Usage examples
  - Basic sampling techniques and estimators
  - Preferential data delivery
  - Online join algorithms
  - Relation to OLAP, etc.
- Some thoughts on research directions
- More resources to appear on the web
  - Annotated bibliography
  - Extended slide set
  - Survey paper


Road Map

- Background and motivation
  - Human-computer interaction
  - Tech trends and prognostications
  - Goals for online processing
- Examples of online techniques
- Underlying technology
- Related work
- Looking forward

Human-Computer Interaction

- Iterative querying with progressive refinement

- Real-time interaction (impatience!)

- Spreadsheets, WYSIWYG editors

- Modern statistics packages

- Netscape STOP button

- Visually-oriented interface

- Approximate results are usually OK

| Time | System 1 | System 2 | System 3 |
|---|---|---|---|
| 1.0000 | 3.01325 | 4.32445 | 7.5654 |
| 2.0000 | 4.54673 | 6.56784 | 8.6562 |
| 3.0000 | 5.46571 | 6.87658 | 10.3343 |

VS


Disk Appetite

- Greg Papadopoulos, CTO, Sun:
  - "Moore's Law Ain't Good Enough" (Hot Chips ’98)

[Figure: Sales vs. Moore's Law, in petabytes by year, 1988 to 2000]

Source: J. Porter, Disk/Trend, Inc. http://www.disktrend.com/pdf/portrpkg.pdf
