
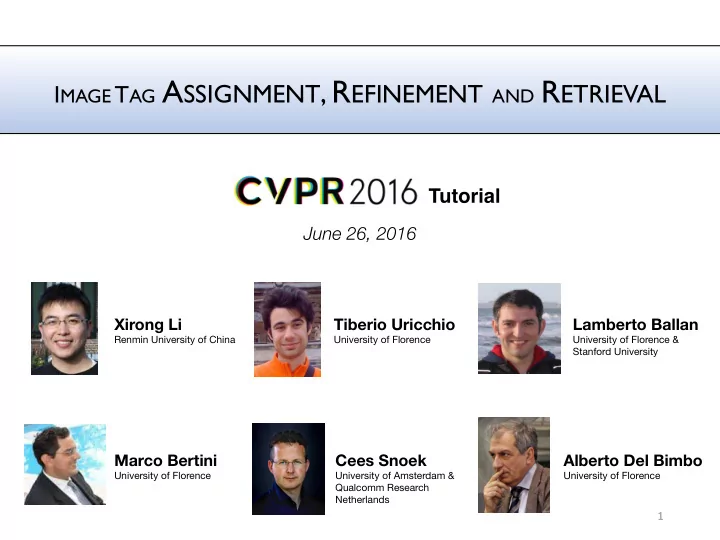

IMAGE TAG ASSIGNMENT, REFINEMENT AND RETRIEVAL
Tutorial, June 26, 2016
Xirong Li (Renmin University of China), Tiberio Uricchio (University of Florence), Lamberto Ballan (University of Florence & Stanford University), Marco Bertini, Cees Snoek

UNIFIED FRAMEWORK
[Figure: unified framework. Auxiliary components (Filter & Precompute) turn the training media S into filtered media Ŝ and a prior. Learning is instance-based or model-based (inductive) or transduction-based (transductive) and yields the tag relevance function f_Φ(x, t; Θ) for image x, tag t and user information Θ. The relevance function is applied to the test media X for three tasks: assignment, refinement and retrieval.]
Training media is obtained from social networks, i.e. with unreliable user-generated annotations. It can be filtered to remove unwanted tags or images.

AUXILIARY COMPONENTS: FILTER
- A common practice is to eliminate overly personalized tags like ‘hadtopostsomething’,
  - e.g. by excluding tags that are not part of WordNet or Wikipedia.
- Tags that appear too rarely in the collection are often eliminated as well.
- Reducing the vocabulary size also matters when using an image-tag association matrix.
- Since batch tagging tends to reduce the quality of tags, batch-tagged images can be excluded.
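A minimal sketch of such a filter, assuming the collection is a dictionary from image id to tag list. The function name `filter_tags`, the `min_count` threshold and the choice to drop images left without tags are illustrative assumptions, not part of the tutorial.

```python
from collections import Counter


def filter_tags(image_tags, vocabulary, min_count=2):
    """Keep tags that are in `vocabulary` and occur in at least `min_count` images."""
    # Count each tag once per image.
    counts = Counter(t for tags in image_tags.values() for t in set(tags))
    keep = {t for t, c in counts.items() if c >= min_count and t in vocabulary}
    filtered = {img: [t for t in tags if t in keep] for img, tags in image_tags.items()}
    # Assumption: images left without any tag are removed from the training media.
    return {img: tags for img, tags in filtered.items() if tags}


toy = {
    "img1": ["dog", "hadtopostsomething", "beach"],
    "img2": ["dog", "beach"],
    "img3": ["sunset"],
}
# Hypothetical stand-in for a WordNet or Wikipedia vocabulary.
vocab = {"dog", "beach", "sunset"}
result = filter_tags(toy, vocab, min_count=2)
```

Here the personalized tag is dropped because it is outside the vocabulary, and `sunset` is dropped because it occurs only once.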

BATCH TAGGING
A unique-user constraint prevents ‘spam’ from batch tagging. [Li et al. TMM 2009]

AUXILIARY COMPONENTS: PRECOMPUTE
- It is practical to precompute information for the learning.
- A common precomputation is tag occurrence and co-occurrence.
- Occurrence can be used to penalize excessively frequent tags.
- Co-occurrence is used to capture semantic similarity of tags directly from users’ behavior.
- Semantic similarity is typically obtained by the Flickr context distance.
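A minimal sketch of the occurrence and co-occurrence precomputation, assuming the training media is a list of tag lists. The function name `count_tags` and the convention of storing each tag pair in alphabetical order are assumptions made for illustration.

```python
from collections import Counter
from itertools import combinations


def count_tags(image_tags):
    """Return (occurrence, co-occurrence) counters over a list of tag lists."""
    occ, cooc = Counter(), Counter()
    for tags in image_tags:
        unique = sorted(set(tags))  # count a tag once per image
        occ.update(unique)
        # Pairs are stored in alphabetical order, e.g. ("bridge", "river").
        cooc.update(combinations(unique, 2))
    return occ, cooc


images = [["bridge", "river"], ["bridge", "river", "night"], ["river"], ["night"]]
occ, cooc = count_tags(images)
```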

FLICKR CONTEXT DISTANCE
• Based on the Normalized Google Distance (NGD).
• Measures the co-occurrence of two tags with respect to their single-tag occurrences.
• No semantics is involved; it works for any tag.

$$
\mathrm{NGD}(x, y) = \frac{\max\{\log h(x), \log h(y)\} - \log h(x, y)}{\log N - \min\{\log h(x), \log h(y)\}}
$$

$$
\mathrm{FCS}(x, y) = e^{-\mathrm{NGD}(x, y)/\sigma}
$$

[Figure: tag occurrence counts h(x), h(y) and co-occurrence count h(x, y); example: FCS(bridge, river) = 0.65]
[Jiang et al. 2009]


TAXONOMY

| Media \ Learning | Instance | Model | Transductive |
|---|---|---|---|
| Tag | 2 | 1 | - |
| Tag + Image | 13 | 15 | 12 |
| Tag + Image + User | 5 | 7 | 3 |

Taxonomy structures 60 papers along Media and Learning dimensions


MEDIA FOR TAG RELEVANCE
Depending on the modalities exploited, we can divide the methods into those that use:
- Tag
  - e.g. considering the ranking of tags as a proxy of the user’s priorities
- Tag + image
  - e.g. considering the set of tags assigned to an image
- Tag + image + user information
  - e.g. considering the behaviors of different users tagging similar images

MEDIA: TAGS
These methods reduce the problem to text retrieval. They find similarly tagged images by
- user-provided tag ranking [Sun et al. 2011],
- tag co-occurrence [Sigurbjörnsson and van Zwol 2008; Zhu et al. 2012], or
- topic modelling [Xu et al. 2009].
These methods assume that test images have already been tagged as well, so they are unsuited for tag assignment.

MEDIA: TAGS AND IMAGES
The main idea of these works is to exploit visual consistency, i.e. the fact that visually similar images should have similar tags. Three main approaches:
1. Use visual similarity between the test image and the database
2. Use similarity between images with the same tags
3. Learn classifiers from social images + tags

MEDIA: TAGS AND IMAGES
Two tactics to combine the similarity between images and tags:
1. Sequential: compute visual similarity, then use the tag modality
2. Simultaneous: use both modalities at the same time
   • A unified graph composed by the fusion of a visual similarity graph with an image-tag connection graph [Ma et al. 2010]
   • Tag and image similarities as constraints to reconstruct an image-tag association matrix [Wu et al. 2013; Xu et al. 2014; Zhu et al. 2010]

MEDIA: TAGS, IMAGES AND USER INFO
In addition to tags and images, this group of works exploits user information, motivated from varied perspectives, such as:
• User identities [Li et al. 2009b]
• Tagging preferences [Sawant et al. 2010]
• User reliability [Ginsca et al. 2014]
• Photo time stamps [Kim and Xing 2013; McParlane et al. 2013a]
• Geo-localization [McParlane et al. 2013b]
• Image group memberships [Johnson et al. 2015]


LEARNING FOR TAG RELEVANCE
- Learning methods can be divided into transductive and inductive. The former make no distinction between the learning and test datasets; the latter can be further divided into methods that produce an explicit model and those that are instance-based.
- We therefore divide the methods into instance-based, model-based and transduction-based.
- Inductive methods typically have better computational scalability than transductive ones.

INSTANCE BASED
- This class of methods compares new test images with training instances.
- There are no parameters, and the complexity grows with the number of instances.
- Approaches are typically based on variants of k-NN, with or without weighted voting.

MODEL BASED
- This class of methods learns its parameters from a training set. A model can be tag-specific or holistic, i.e. for all tags.
- Tag-specific: use linear or fast intersection kernel SVMs trained on features augmented by pre-trained classifiers of popular tags, or on relevant positive and negative examples.
- Holistic: use topic modeling, with relevance computed using a topic vector of the image and a topic vector of the tag.

TRANSDUCTION BASED
- This class of methods evaluates tag relevance for a given image-tag pair by minimizing a cost function over a set of images.
- The majority of these methods are based on matrix factorization.

PROS AND CONS
Instance-based
- Pro: flexible and adaptable to manage new images and tags.
- Con: requires managing the training media, a task that may become complex with an increasing amount of data.

Model-based
- Pro: training data is represented compactly, leading to swift computations, especially when using linear classifiers.
- Con: needs retraining to cope with new imagery of a tag or when expanding the vocabulary.

Transduction-based
- Pro: better exploits inter-tag and inter-image relationships, through matrix factorization.
- Con: difficult to manage large datasets, because of memory and/or computational complexity.



TAXONOMY
[Figure: the key methods placed in the taxonomy: TagCooccur, SemanticField, TagRanking, RobustPCA, KNN, TagProp, TagFeature, RelExample, TensorAnalysis, TagVote, TagCooccur+]

ORGANIZATION OF THE TUTORIAL
- 8:30 – 9:30 Part 1: Introduction; Part 2: Taxonomy
- 9:30 – 10:00 Part 3: Experimental protocol; Part 4: Evaluation
- 10:00 – 10:45 Coffee break
- 10:45 – 12:00 Part 4: Evaluation cont’d
- 12:00 – 12:30 Part 5: Conclusion and future directions

PART 3: OUR EXPERIMENTAL PROTOCOL
• Limitations in current evaluation
• Training and test data
• Evaluation setup

LIMITATIONS IN CURRENT EVALUATION
- Results are not directly comparable
  - homemade datasets
  - selected subsets of a benchmark set
  - varied implementation: preprocessing, parameters, features, …
- Results are not easily reproducible
  - For many methods, no source code or executable is provided
- Single-set evaluation
  - Split a dataset into training/testing, at risk of overfitting

PROPOSED PROTOCOL
- Results are directly comparable
  - use full-size test datasets
  - same implementation whenever applicable
- Results are reproducible
  - open-source
- Cross-set evaluation
  - Training and test datasets are constructed independently

SOCIALLY-TAGGED TRAINING DATA
- Data gathering procedure [Li et al. 2012]
  - using WordNet nouns as queries to uniformly sample Flickr images uploaded between 2006 and 2010
  - remove batch-tagged images (a simple yet effective trick to improve data quality)
- Training sets of varied size
  - Train1M (a random subset of the collected Flickr images)
  - Train100k (a random subset of Train1M)
  - Train10k (a random subset of Train1M)

ImageNet already provides labeled examples for over 20k categories. Is it necessary to learn from socially tagged data?

SOCIAL TAGS VERSUS IMAGENET ANNOTATIONS
- ImageNet annotations
  - computer vision oriented, focusing on fine-grained visual objects
  - single label per image
- Social tags
  - follow context, trends and events in the real world
  - describe both the situation and the entity presented in the visual content

[Figure: a Flickr user’s album with four photos dated 2007-01-26, 2007-04-22, 2007-12-27 and 2008-02-17 and their tags, e.g. poppy, summer, winter, rot, tree, red, tulip, orange, baum, sky, nature, frost, cloud, flower, field. Credits: http://www.flickr.com/people/regina_austria]

IMAGENET EXAMPLES ARE BIASED
- By web image search engines

[Figure from Vreeswijk et al. 2012]
D. Vreeswijk, K. van de Sande, C. Snoek, A. Smeulders, All Vehicles are Cars: Subclass Preferences in Container Concepts, ICMR 2012

TEST DATA
- Three test datasets
  - contributed by distinct research groups

| Test dataset | Contributors |
|---|---|
| MIRFlickr [Huiskes 2010] | LIACS Medialab, Leiden University |
| NUS-WIDE [Chua 2009] | LMS, National University of Singapore |
| Flickr51 [Wang 2010] | Microsoft Research Asia |

MIRFLICKR
http://press.liacs.nl/mirflickr/
- Image collection
  - 25,000 high-quality photographic images from Flickr
- Labeling criteria
  - Potential labels: visible to some extent
  - Relevant labels: saliently present
- Test tag set
  - 14 relevant labels: baby, bird, car, cloud, dog, flower, girl, man, night, people, portrait, river, sea, tree
- Applicability
  - Tag assignment
  - Tag refinement

M. Huiskes, B. Thomee, M. Lew. “New trends and ideas in visual concept detection: the MIR Flickr retrieval evaluation initiative”, MIR 2010

NUS-WIDE
http://lms.comp.nus.edu.sg/research/NUS-WIDE.htm
- Image collection
  - 260K images randomly crawled from Flickr
- Labeling criteria
  - An active learning strategy to reduce the amount of manual labeling
- Test tag set
  - 81 tags covering objects (car, dog), people (police, military), scenes (airport, beach), and events (swimming, wedding)
- Applicability
  - Tag assignment
  - Tag refinement
  - Tag retrieval

T.-S. Chua, J. Tang, R. Hong, H. Li, Z. Luo, Y.-T. Zheng. “NUS-WIDE: A Real-World Web Image Database from National University of Singapore”, CIVR 2009

FLICKR51
- Image collection
  - 80k images collected from Flickr using a predefined set of tags as queries
- Labeling criteria
  - Given a tag, manually check the relevance of images labelled with the tag
  - Three relevance levels: very relevant, relevant, and irrelevant
- Test tag set
  - 51 tags, some of which are ambiguous, e.g., apple, jaguar
- Applicability
  - Tag retrieval

[1] M. Wang, X.-S. Hua, H.-J. Zhang. “Towards a relevant and diverse search of social images”, IEEE Transactions on Multimedia 2010
[2] Y. Gao, M. Wang, Z.-J. Zha, J. Sheng, X. Li, X. Wu. “Visual-Textual Joint Relevance Learning for Tag-Based Social Image Search”, IEEE Transactions on Image Processing, 2013

VISUAL FEATURES
- Traditional bag of visual words [van de Sande 2010]
  - SIFT points quantized by a codebook of size 1,024
  - Plus a compact 64-d color feature vector [Li 2007]
- CNN features
  - A 4,096-d FC7 vector after ReLU activation, extracted by the pre-trained 16-layer VGGNet [Simonyan 2015]

EVALUATION
Three tasks as introduced in Part 1:
- Tag assignment
- Tag refinement
- Tag retrieval

EVALUATING TAG ASSIGNMENT/REFINEMENT
- A good method for tag assignment shall
  - rank relevant tags before irrelevant tags for a given image
  - rank relevant images before irrelevant images for a given tag
- Two criteria
  - Image-centric: Mean image Average Precision (MiAP)
  - Tag-centric: Mean Average Precision (MAP)

MiAP is biased towards frequent tags.
MAP is affected by rare tags.
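The two criteria can be sketched as follows, assuming a score matrix with one row per image and one column per tag plus a binary ground-truth matrix of the same shape. The function names and the decision to skip rows or columns without any relevant item are assumptions made for illustration, not the tutorial's evaluation code.

```python
def average_precision(scores, relevant):
    """AP of the ranking induced by `scores` against binary `relevant` labels."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    hits, total = 0, 0.0
    for rank, i in enumerate(order, start=1):
        if relevant[i]:
            hits += 1
            total += hits / rank  # precision at this relevant position
    return total / hits if hits else 0.0


def mean_ap(score_lists, truth_lists):
    """Mean AP over lists; lists without a relevant item are skipped (assumption)."""
    aps = [average_precision(s, r) for s, r in zip(score_lists, truth_lists) if any(r)]
    return sum(aps) / len(aps) if aps else 0.0


scores = [[0.9, 0.2, 0.5], [0.1, 0.3, 0.8]]  # rows: images, columns: tags
truth = [[1, 0, 1], [0, 1, 0]]

miap = mean_ap(scores, truth)  # image-centric: rank tags per image
map_score = mean_ap(list(zip(*scores)), list(zip(*truth)))  # tag-centric: rank images per tag
```

In this toy case MiAP averages over the two rows and MAP over the three columns, so the same score matrix yields different values under the two criteria.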

EVALUATING TAG RETRIEVAL
- A good method for tag retrieval shall
  - rank relevant images before irrelevant images for a given tag
- Two criteria
  - Mean Average Precision (MAP) to measure the overall ranks
  - Normalized Discounted Cumulative Gain (NDCG) to measure the top ranks
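A minimal NDCG sketch for one tag, assuming graded relevance values (for instance 2, 1, 0 for three relevance levels), a linear gain and a log2 rank discount. NDCG has several variants; the gain and discount chosen here, and the function names, are assumptions rather than the exact definition used in the tutorial.

```python
import math


def dcg(gains):
    """Discounted cumulative gain with a log2 rank discount."""
    return sum(g / math.log2(rank + 1) for rank, g in enumerate(gains, start=1))


def ndcg_at_k(scores, gains, k):
    """NDCG of the top-k images ranked by `scores`, given graded `gains`."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    ranked = [gains[i] for i in order[:k]]
    ideal = sorted(gains, reverse=True)[:k]
    best = dcg(ideal)
    return dcg(ranked) / best if best > 0 else 0.0


perfect = ndcg_at_k([0.9, 0.8, 0.1], [2, 1, 0], k=3)
reversed_rank = ndcg_at_k([0.1, 0.8, 0.9], [2, 1, 0], k=3)
```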

SUMMARY
Data servers
[1] http://www.micc.unifi.it/tagsurvey
[2] http://www.mmc.ruc.edu.cn/research/tagsurvey/data.html

LIMITATIONS IN OUR PROTOCOL
- Tag informativeness in tag assignment

[Figure: example tags (dog, dog versus pet, beach)]
How to assess informativeness?
X. Qian, X.-S. Hua, Y. Tang, T. Mei, Social Image Tagging With Diverse Semantics, IEEE Transactions on Cybernetics 2014

LIMITATIONS IN OUR PROTOCOL
- Image diversity in tag retrieval

[Figure from Wang et al. 2010]
How to measure diversity?
M. Wang, X.-S. Hua, H.-J. Zhang, Towards a relevant and diverse search of social images, IEEE Transactions on Multimedia 2010

LIMITATIONS IN OUR PROTOCOL
- Semantic ambiguity
  - E.g., search for jaguar in Flickr51

[Figure: search results for jaguar from SemanticField and RelExample]
Need fine-grained annotation
X. Li, S. Liao, W. Lan, X. Du, G. Yang, Zero-shot image tagging by hierarchical semantic embedding, SIGIR 2015

REFERENCES
• [Chua 2009] T.-S. Chua, J. Tang, R. Hong, H. Li, Z. Luo, Y.-T. Zheng, NUS-WIDE: A Real-World Web Image Database from National University of Singapore, CIVR 2009
• [Huiskes 2010] M. Huiskes, B. Thomee, M. Lew, New trends and ideas in visual concept detection: the MIR Flickr retrieval evaluation initiative, MIR 2010
• [Li 2007] M. Li, Texture Moment for Content-Based Image Retrieval, ICME 2007
• [Li 2012] X. Li, C. Snoek, M. Worring, A. Smeulders, Harvesting social images for bi-concept search, IEEE Transactions on Multimedia 2012
• [Li 2015] X. Li, S. Liao, W. Lan, X. Du, G. Yang, Zero-shot image tagging by hierarchical semantic embedding, SIGIR 2015
• [Simonyan 2015] K. Simonyan, A. Zisserman, Very Deep Convolutional Networks for Large-Scale Image Recognition, ICLR 2015
• [Qian 2014] X. Qian, X.-S. Hua, Y. Tang, T. Mei, Social Image Tagging With Diverse Semantics, IEEE Transactions on Cybernetics 2014
• [van de Sande 2010] K. van de Sande, T. Gevers, C. Snoek, Evaluating Color Descriptors for Object and Scene Recognition, IEEE Transactions on Pattern Analysis and Machine Intelligence 2010
• [Vreeswijk 2012] D. Vreeswijk, K. van de Sande, C. Snoek, A. Smeulders, All Vehicles are Cars: Subclass Preferences in Container Concepts, ICMR 2012
• [Wang 2010] M. Wang, X.-S. Hua, H.-J. Zhang, Towards a relevant and diverse search of social images, IEEE Transactions on Multimedia 2010

PART 4: EVALUATION: ELEVEN KEY METHODS
• Goal: evaluate key methods based on various Media and Learning paradigms
• Q: What are their key ingredients?
• Q: What is the computational cost of each of them?

KEY METHODS
• Covering all published methods is obviously impractical
• We do not consider methods that:
  - do not show significant improvements or novelties w.r.t. the seminal papers in the field
  - are difficult to replicate
• Our choice is driven by the intention to cover methods that aim for each of the three tasks, exploit varied modalities and use distinct learning mechanisms
• We select 11 representative methods

KEY METHODS
• Each method is required to output the tag relevance of each test image and each test tag, i.e. a matrix with one row per image (n images) and one column per tag (m tags):

$$
\begin{pmatrix}
f(x_1, t_1) & f(x_1, t_2) & \cdots & f(x_1, t_m) \\
f(x_2, t_1) & f(x_2, t_2) & \cdots & f(x_2, t_m) \\
\vdots & \vdots & \ddots & \vdots \\
f(x_n, t_1) & f(x_n, t_2) & \cdots & f(x_n, t_m)
\end{pmatrix}
$$
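A small sketch of how such an n-by-m relevance matrix can serve the tasks: sorting a row ranks tags for an image (assignment, or refinement when the row was computed with the user tags), and sorting a column ranks images for a tag (retrieval). The function names and the toy values are assumptions for illustration.

```python
def assign_tags(relevance, tags, top_k):
    """For each image (row), return the top_k tags by relevance."""
    result = []
    for row in relevance:
        order = sorted(range(len(tags)), key=lambda j: -row[j])
        result.append([tags[j] for j in order[:top_k]])
    return result


def retrieve_images(relevance, tags, tag):
    """Return image indices sorted by decreasing relevance to `tag` (a column)."""
    j = tags.index(tag)
    return sorted(range(len(relevance)), key=lambda i: -relevance[i][j])


tags = ["dog", "cat", "beach"]
relevance = [
    [0.9, 0.1, 0.4],  # f(x_1, t_1..t_3)
    [0.2, 0.8, 0.6],  # f(x_2, t_1..t_3)
]
assigned = assign_tags(relevance, tags, top_k=2)
beach_ranking = retrieve_images(relevance, tags, "beach")
```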

KEY METHODS

| Media \ Learning | Instance Based | Model Based | Transductive Based |
|---|---|---|---|
| Tag | SemanticField [Zhu et al. 2012]; TagCooccur [Sigurbjörnsson and van Zwol 2008] | | |
| Tag + Image | TagRanking [Liu et al. 2009]; KNN [Makadia et al. 2010] | TagProp [Guillaumin et al. 2009]; TagFeature [Chen et al. 2012]; RelExample [Li and Snoek 2013] | RobustPCA [Zhu et al. 2010] |
| Tag + Image + User | TagVote, TagCooccur+ [Li et al. 2009b] | | TensorAnalysis [Sang et al. 2012a] |


SEMANTICFIELD [Zhu et al. 2012] Instance-Based, Tag
• Tags of similar semantics usually co-occur in user images
• SemanticField measures an averaged similarity between a tag and the user tags already assigned to the image
• Two similarity measures between words:
  - Flickr context similarity
  - Wu-Palmer similarity on WordNet

[Figure: two examples asking whether a candidate tag is similar to an image’s user tags, one scoring 0.9 and one scoring 0.1; tags shown include red, sun, sunset, beach, birthday, canon, band, lights, cat, concert, personal, guitar]
Zhu et al. Sampling and Ontologically Pooling Web Images for Visual Concept Learning. IEEE TMM 2012

FLICKR CONTEXT SIMILARITY
• Based on the Normalized Google Distance (NGD).
• Measures the co-occurrence of two tags with respect to their single-tag occurrences.
• No semantics is involved; it works for any tag.

$$
\mathrm{NGD}(x, y) = \frac{\max\{\log h(x), \log h(y)\} - \log h(x, y)}{\log N - \min\{\log h(x), \log h(y)\}}
$$

$$
\mathrm{FCS}(x, y) = e^{-\mathrm{NGD}(x, y)/\sigma}
$$

[Figure: tag occurrence counts h(x), h(y) and co-occurrence count h(x, y); example: FCS(bridge, river) = 0.65]
Y.-G. Jiang, C.-W. Ngo, S.-F. Chang. Semantic context transfer across heterogeneous sources for domain adaptive video search. ACM Multimedia 2009
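The NGD and FCS formulas on this slide can be sketched in Python as follows. The function names, the default `sigma=1.0` and the convention of returning 0 for tags that never co-occur are assumptions; the sketch also assumes positive counts and that neither tag occurs in every image.

```python
import math


def ngd(h_x, h_y, h_xy, n):
    """Normalized Google Distance from occurrence counts and collection size n."""
    log_x, log_y = math.log(h_x), math.log(h_y)
    return (max(log_x, log_y) - math.log(h_xy)) / (math.log(n) - min(log_x, log_y))


def fcs(h_x, h_y, h_xy, n, sigma=1.0):
    """Flickr context similarity: exp(-NGD / sigma)."""
    if h_xy == 0:
        return 0.0  # assumption: tags that never co-occur get similarity 0
    return math.exp(-ngd(h_x, h_y, h_xy, n) / sigma)


# Hypothetical counts, not taken from Flickr.
always_together = fcs(100, 100, 100, 10_000)
sometimes_together = fcs(100, 100, 10, 10_000)
```

Tags that always co-occur have distance 0 and similarity 1; rarer co-occurrence pushes the similarity toward 0.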

WU-PALMER SIMILARITY
• It is a measure between concepts in an ontology restricted to taxonomic links.

$$
\mathrm{Sim}(w_1, w_2) = \max\left[\frac{2 \cdot \mathrm{depth}(\mathrm{LCS}(w_1, w_2))}{\mathrm{length}(w_1, w_2) + 2 \cdot \mathrm{depth}(\mathrm{LCS}(w_1, w_2))}\right]
$$

• Considers the depth of w_1, w_2 and their least common subsumer (LCS).
• Typically used with WordNet.

Z. Wu, M. Palmer. Verb semantics and lexical selection. ACL 1994
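A minimal sketch of the Wu-Palmer formula on a hand-made toy taxonomy. The taxonomy, the convention that the root has depth 1 and the function names are assumptions; each word has a single sense here, so the max in the formula is trivial. A real setup would typically query WordNet instead.

```python
# Toy taxonomy given as child -> parent links (hypothetical, not WordNet).
PARENT = {
    "animal": "entity",
    "plant": "entity",
    "dog": "animal",
    "cat": "animal",
    "tree": "plant",
}


def path_to_root(node):
    """Return [node, parent, ..., root]."""
    path = [node]
    while path[-1] in PARENT:
        path.append(PARENT[path[-1]])
    return path


def wu_palmer(w1, w2):
    p1, p2 = path_to_root(w1), path_to_root(w2)
    ancestors2 = set(p2)
    lcs = next(n for n in p1 if n in ancestors2)  # deepest shared ancestor
    depth_lcs = len(path_to_root(lcs))  # assumption: the root has depth 1
    length = p1.index(lcs) + p2.index(lcs)  # edges between w1 and w2 via the LCS
    return 2 * depth_lcs / (length + 2 * depth_lcs)


dog_cat = wu_palmer("dog", "cat")
dog_tree = wu_palmer("dog", "tree")
```

With this taxonomy, `dog` and `cat` share the deeper subsumer `animal` and therefore score higher than `dog` and `tree`, whose only common subsumer is the root.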

SEMANTICFIELD [Zhu et al. 2012] Instance-Based, Tag

$$
f_{SemField}(x, t) := \frac{1}{l_x} \sum_{i=1}^{l_x} \mathrm{sim}(t, t_i)
$$

• sim is the similarity between t and the other image tags t_i
• Needs some user tags. Not applicable to Tag Assignment
• Complexity O(m · l_x): the number of image tags l_x times m tags
• Memory O(m²): quadratic w.r.t. the vocabulary of m tags
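The averaged similarity can be sketched as below, with the similarity passed in as a function so that either measure could be plugged in. The function name `semantic_field` and the toy similarity table are hypothetical.

```python
def semantic_field(candidate, user_tags, sim):
    """Mean similarity between `candidate` and the tags already on the image."""
    if not user_tags:
        # The method needs user tags, which is why it does not apply to assignment.
        raise ValueError("SemanticField needs at least one user tag")
    return sum(sim(candidate, t) for t in user_tags) / len(user_tags)


# Hypothetical precomputed similarities; a real system would look up an
# m-by-m table of tag similarities.
TOY_SIM = {("sunset", "sun"): 0.9, ("sunset", "beach"): 0.7, ("sunset", "red"): 0.5}


def toy_sim(a, b):
    return TOY_SIM.get((a, b), TOY_SIM.get((b, a), 0.0))


score = semantic_field("sunset", ["sun", "beach", "red"], toy_sim)
```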


TAGRANKING [Liu et al. 2009] Instance-Based, Tag + Image
[Figure: TagRanking pipeline. Kernel density estimation with a Gaussian kernel gives p(t | x) for the user tags bird, tree, flower and sky; a random walk on a tag graph built from exemplar similarity and concurrence similarity then yields the ranking (1) bird (0.36), (2) flower (0.28), (3) sky (0.21), (4) tree (0.15)]
• TagRanking assigns a rank to each user tag, based on its relevance to the image content.
• Tag probabilities are first estimated in the kernel density estimation (KDE) phase.
• Then a random walk is performed on a tag graph, built from visual exemplar similarity and tag semantic similarity.

D. Liu, X.-S. Hua, L. Yang, M. Wang, H.-J. Zhang. Tag ranking. WWW 2009

TAGRANKING [Liu et al. 2009] Instance-Based, Tag + Image
• Suitable only for Tag Retrieval: it doesn’t add or remove user tags.

$$
f_{TagRanking}(x, t) = -\mathrm{rank}(t) + \frac{1}{l_x}
$$

• The term 1/l_x, with l_x the number of tags of image x, is a tie-breaker when two images have the same tag rank.
• Complexity O(m · d · n + L · m²): KDE on n images plus L iterations of the random walk
• Memory O(max(d · n, m²)): the maximum of the two steps
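A sketch of the scoring step only, assuming the KDE and random-walk stages have already produced an ordered tag list per image (most relevant first, rank starting at 1). The function name and the two toy images are assumptions.

```python
def tag_ranking_score(ranked_tags, tag):
    """Score -rank(t) + 1/l_x for a tag that is among the image's user tags."""
    rank = ranked_tags.index(tag) + 1  # raises ValueError if the tag is absent
    return -rank + 1.0 / len(ranked_tags)


image_a = ["bird", "flower", "sky", "tree"]  # l_x = 4
image_b = ["bird", "sky"]                    # l_x = 2

score_a = tag_ranking_score(image_a, "bird")
score_b = tag_ranking_score(image_b, "bird")
```

Both images rank `bird` first, so the 1/l_x term decides: the image with fewer tags scores slightly higher when retrieving `bird`.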


KNN [Makadia et al. 2010] Instance-Based, Tag + Image
• Similar images share similar tags
• Finds the k nearest images under a distance d
• Counts the frequency of tags in the neighborhood
• Assigns the top-ranked tags to the test image

[Figure: a test image and its visual neighbors with their distances (e.g. 0.3, 1.5, 1.9, 2.8, 3.2, 4.9) and tags such as sunset, sun, sea, ship, miami, palm, road, train, concert, singer, wood, bookshelf, livingroom]
A. Makadia, V. Pavlovic, and S. Kumar. A new baseline for image annotation. ECCV 2008


KNN [Makadia et al. 2010] Instance-Based, Tag + Image

$$
f_{KNN}(x, t) := k_t
$$

• k_t is the number of images with t in the visual neighborhood of x.
• User tags on the test image are not used. Not applicable to Tag Refinement.
• Complexity O(d · |S| + k · log|S|): proportional to the feature dimensionality d and the number of nearest neighbors k
• Memory O(d · |S|): d-dimensional features
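A brute-force sketch of the neighbor vote, assuming Euclidean distance on small feature tuples. It sorts the whole training set for clarity, which is simpler but slower than the complexity quoted above; the function name and toy data are assumptions.

```python
import math
from collections import Counter


def knn_tag_votes(test_feature, training_set, k):
    """training_set: list of (feature_vector, tags). Returns k_t for each tag."""
    neighbours = sorted(
        training_set, key=lambda item: math.dist(test_feature, item[0])
    )[:k]
    votes = Counter()
    for _, tags in neighbours:
        votes.update(set(tags))  # each neighbor votes once per tag
    return votes


train = [
    ((0.0, 0.0), ["sun", "sea"]),
    ((0.0, 1.0), ["sunset", "sea"]),
    ((5.0, 5.0), ["concert"]),
    ((1.0, 0.0), ["sea", "ship"]),
]
votes = knn_tag_votes((0.0, 0.0), train, k=3)
```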

TAGVOTE [Li et al. 2009b] Instance-Based, Tag + Image + User
• Adds two improvements to KNN-voting:
  - Unique-user constraint
  - Tag prior frequency

X. Li, C. Snoek, M. Worring. Learning Social Tag Relevance by Neighbor Voting. IEEE TMM 2009

TAGVOTE [Li et al. 2009b] Instance-Based, Tag + Image + User

$$
f_{TagVote}(x, t) := k_t - k \frac{n_t}{|S|}
$$

• k_t is the number of images with t in the visual neighborhood of x
• n_t is the frequency of tag t in S
• Like KNN, user tags on the test image are not used. Not applicable to Tag Refinement
• Complexity O(d · |S| + k · log|S|), the same as KNN
• Memory O(d · |S|)
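A brute-force sketch of the TagVote score, assuming Euclidean distance and training items given as (feature, tags, user id). The unique-user constraint is implemented here by keeping at most one neighbor per user; using the number of neighbors actually found in place of k when fewer than k users are available is an assumption, as are the function name and toy data.

```python
import math
from collections import Counter


def tag_vote(test_feature, training_set, k):
    """training_set: list of (feature, tags, user_id). Returns f_TagVote per tag."""
    ranked = sorted(training_set, key=lambda item: math.dist(test_feature, item[0]))
    neighbours, seen_users = [], set()
    for _, tags, user in ranked:
        if user in seen_users:
            continue  # unique-user constraint: one neighbor per user
        seen_users.add(user)
        neighbours.append(tags)
        if len(neighbours) == k:
            break
    votes = Counter(t for tags in neighbours for t in set(tags))         # k_t
    prior = Counter(t for _, tags, _ in training_set for t in set(tags)) # n_t
    size = len(training_set)                                             # |S|
    k_used = len(neighbours)  # assumption: equals k unless too few users exist
    return {t: votes[t] - k_used * prior[t] / size for t in prior}


train = [
    ((0.0, 0.0), ["sea", "sun"], "u1"),
    ((0.0, 1.0), ["sea"], "u1"),
    ((1.0, 0.0), ["sea", "ship"], "u2"),
    ((5.0, 5.0), ["concert"], "u3"),
    ((6.0, 5.0), ["concert", "sea"], "u4"),
]
scores = tag_vote((0.0, 0.0), train, k=2)
```

In this toy set `sea` receives two votes but is so frequent overall that the prior term pulls its score below the rarer `sun` and `ship`, which is the intended effect of the tag prior.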
