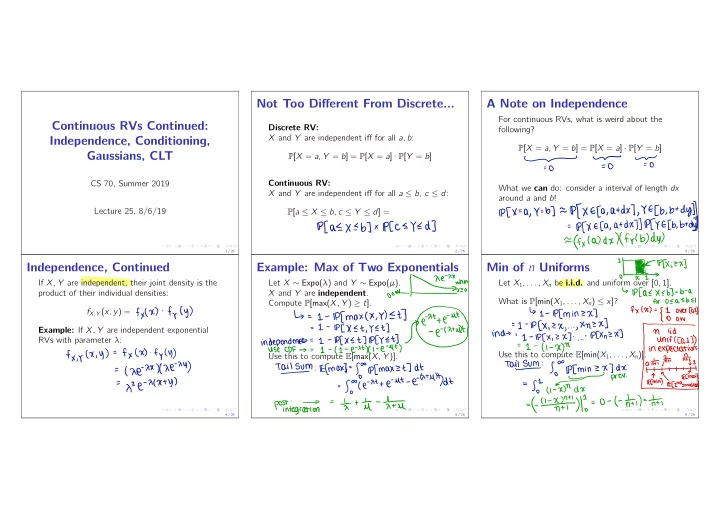

Continuous RVs Continued: Independence, Conditioning, Gaussians, CLT

CS 70, Summer 2019 Lecture 25, 8/6/19

1 / 26

Not Too Different From Discrete...

Discrete RV: X and Y are independent iff for all a, b: P[X = a, Y = b] = P[X = a] · P[Y = b] Continuous RV: X and Y are independent iff for all a ≤ b, c ≤ d: P[a ≤ X ≤ b, c ≤ Y ≤ d] =

2 / 26

Plas

X Eb ]

x IPCC EYE

d ]

A Note on Independence

For continuous RVs, what is weird about the following? P[X = a, Y = b] = P[X = a] · P[Y = b] What we can do: consider a interval of length dx around a and b!

3 / 26

- To

To

=O

IPCX

- a , Y

- b)

=p f X Efa ,

atdx

)

, YE [ b.

btdy ))

=PEX E Ca ,

at DX) ) REY E [ b

, btdy=

ffx(a) dxlffyl

b) dy)

Independence, Continued

If X, Y are independent, their joint density is the product of their individual densities: fX,Y (x, y) = Example: If X, Y are independent exponential RVs with parameter λ:

4 / 26

fxlx )

. fyC y )

f × , y ( X

, y ) =f x ( x )

- f

y ( y )

=(

xe

- xxxx

e

- M )

He

- XCX ty )

Example: Max of Two Exponentials

Let X ∼ Expo(λ) and Y ∼ Expo(µ). X and Y are independent. Compute P[max(X, Y ) ≥ t]. Use this to compute E[max(X, Y )].

5 / 26

'is

:

↳

=I

- lpfmaxcx

Et ]

- ut

I

- IP [ X

Et

, YET ]indecencies

:

:

¥¥E¥¥

,

Tails

:Efmaxt

- fo

- p[

maxzt

]

dt

= So- ( e

- atte

- Ut

- e-

HUH )dt

poftiegra.TN

=at

- 1 tu

- life

Min of n Uniforms

Let X1, . . . , Xn be i.i.d. and uniform over [0, 1]. What is P[min(X1, . . . , Xn) ≤ x]? Use this to compute E[min(X1, . . . , Xn)].

6 / 26

!

"

Txizx ]

↳ IPCAEXEBT

- b

- a

for

OEAEBEI ↳

1-

IPC

minzx

]

fxtx

)

- {

I

- verlay

=L

- IPCX ,

2X

, . . . ,Xnzx

]

° aw

.in

.ni

" "

"

" ' '

÷÷÷iL¥÷

Tailsvm

:So

- lpcmin

ZXIDX

iefmax

= goty

prer

.( I

- X

)ndx

'

ECmin%ECzndsmanesty-f.a-xn.IT#I--o-tnttiI--nt