
Automatically learned Strategies (Special Topics in Meta-heuristics)

Eric Bourreau, Remi Coletta

LIRMM (CS lab) Montpellier University France {Eric.Bourreau,Remi.Coletta}@lirmm.fr

EURO XXI 2/17

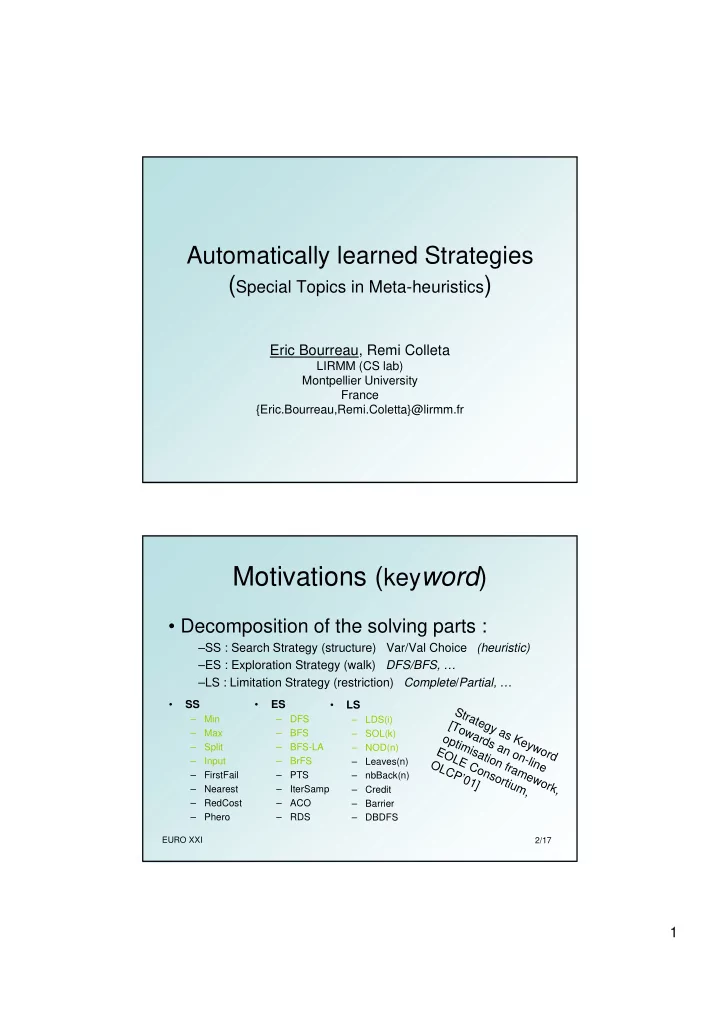

Motivations (keyword)

- Decomposition of the solving parts:

  – SS: Search Strategy (structure): Var/Val Choice (heuristic)
  – ES: Exploration Strategy (walk): DFS/BFS, …
  – LS: Limitation Strategy (restriction): Complete/Partial, …

- SS: Min, Max, Split, Input, FirstFail, Nearest, RedCost, Phero

- ES: DFS, BFS, BFS-LA, BrFS, PTS, IterSamp, ACO, RDS

- LS: LDS(i), SOL(k), NOD(n), Leaves(n), nbBack(n), Credit, Barrier, DBDFS

Strategy as Keyword [Towards an on-line optimisation framework, EOLE Consortium, OLCP’01]
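The SS / ES / LS decomposition can be read as a small configuration object plugged into a generic solver. The Python sketch below is only an illustration of that reading, not the EOLE framework's API: every name in it (`Strategy`, `solve`, `input_order`, `first_fail`, `val_min`, `val_max`, `sol_limit`, `node_limit`) is hypothetical. It assumes a toy finite-domain problem, fixes ES to DFS, and implements only the SS keywords Input, FirstFail, Min and Max and the LS keywords SOL(k) and NOD(n).

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

Domains = Dict[str, List[int]]
Assignment = Dict[str, int]

# SS, variable choice (hypothetical implementations of the slide keywords)
def input_order(domains: Domains, unassigned: List[str]) -> str:
    """Input: take variables in the order they were declared."""
    return unassigned[0]

def first_fail(domains: Domains, unassigned: List[str]) -> str:
    """FirstFail: take the variable with the smallest domain."""
    return min(unassigned, key=lambda v: len(domains[v]))

# SS, value choice
def val_min(values: List[int]) -> List[int]:
    """Min: try the smallest value first."""
    return sorted(values)

def val_max(values: List[int]) -> List[int]:
    """Max: try the largest value first."""
    return sorted(values, reverse=True)

@dataclass
class Strategy:
    var_choice: Callable[[Domains, List[str]], str]  # SS (variable)
    val_order: Callable[[List[int]], List[int]]      # SS (value)
    sol_limit: Optional[int] = None                  # LS: SOL(k)
    node_limit: Optional[int] = None                 # LS: NOD(n)

def solve(domains: Domains,
          consistent: Callable[[Assignment], bool],
          strategy: Strategy) -> Tuple[List[Assignment], int]:
    """ES is fixed to DFS here; returns (solutions, visited node count)."""
    solutions: List[Assignment] = []
    nodes = 0

    def limit_reached() -> bool:
        if strategy.sol_limit is not None and len(solutions) >= strategy.sol_limit:
            return True
        return strategy.node_limit is not None and nodes >= strategy.node_limit

    def dfs(assignment: Assignment) -> None:
        nonlocal nodes
        unassigned = [v for v in domains if v not in assignment]
        if not unassigned:
            solutions.append(dict(assignment))
            return
        var = strategy.var_choice(domains, unassigned)
        for value in strategy.val_order(domains[var]):
            if limit_reached():
                return
            nodes += 1  # one node per tentative assignment
            assignment[var] = value
            if consistent(assignment):
                dfs(assignment)
            del assignment[var]

    dfs({})
    return solutions, nodes

# Toy problem (an assumption for illustration): x < y over {1, 2, 3}.
domains = {"x": [1, 2, 3], "y": [1, 2, 3]}

def x_less_than_y(a: Assignment) -> bool:
    return "x" not in a or "y" not in a or a["x"] < a["y"]

first_solution, visited = solve(
    domains, x_less_than_y, Strategy(input_order, val_min, sol_limit=1)
)
```

Swapping `val_min` for `val_max`, or `sol_limit=1` for `node_limit=2`, changes the search behaviour without touching `solve`, which is the point of treating a strategy as a combination of keywords.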