Motivation: disease progression modelling

Covariate-GPLVM

GPLVM maps latent variables to observed data using GP mappings. For sample $i$ and feature $j$:

$$z_i \sim \mathcal{N}(0, 1), \qquad y_i^{(j)} = f^{(j)}(z_i) + \varepsilon_{ij}$$
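As a rough illustration of the GPLVM generative model, the sketch below draws a one-dimensional latent variable and samples each feature from an independent GP evaluated at the latent points. The squared-exponential kernel, the lengthscale, the noise level, and the names `rbf_kernel` and `sample_gplvm` are assumptions made for this example; the slides do not specify them.

```python
import numpy as np


def rbf_kernel(a, b, lengthscale=1.0, variance=1.0):
    """Squared-exponential kernel between 1-D input vectors a and b (assumed kernel)."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / lengthscale**2)


def sample_gplvm(n_samples=50, n_features=3, noise_sd=0.1, seed=0):
    """Draw (z, Y) from a GPLVM-style generative model with a 1-D latent variable."""
    rng = np.random.default_rng(seed)
    z = rng.standard_normal(n_samples)  # z_i ~ N(0, 1)
    # Small jitter on the diagonal keeps the Cholesky factorisation stable.
    K = rbf_kernel(z, z) + 1e-6 * np.eye(n_samples)
    chol = np.linalg.cholesky(K)
    # One independent GP draw f^(j) per feature, evaluated at the latent points.
    F = chol @ rng.standard_normal((n_samples, n_features))
    # Add independent Gaussian noise eps_ij.
    Y = F + noise_sd * rng.standard_normal((n_samples, n_features))
    return z, Y


z, Y = sample_gplvm()
print(z.shape, Y.shape)
```

Each column of `Y` is one feature: a smooth function of the shared latent `z` plus noise. Fitting a GPLVM reverses this process, inferring `z` and the mappings from `Y` alone.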


Covariate-GPLVM extends GPLVM by:

1. Incorporating covariates $x_i$:

   $$y_i^{(j)} = f^{(j)}(x_i, z_i) + \varepsilon_{ij}$$

2. Providing a feature-level decomposition:

   $$y_i^{(j)} = \mu^{(j)} + f_z^{(j)}(z_i) + f_x^{(j)}(x_i) + f_{zx}^{(j)}(z_i, x_i) + \varepsilon_{ij}$$


Feature-level decomposition

$$y_i^{(j)} = \mu^{(j)} + f_z^{(j)}(z_i) + f_x^{(j)}(x_i) + f_{zx}^{(j)}(z_i, x_i) + \varepsilon_{ij}$$

Readily available for linear models, otherwise challenging:

- Naive decompositions (with standard GP priors) can lead to misleading conclusions
- With appropriate functional constraints we learn an identifiable non-linear decomposition
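To see why constraints make the decomposition identifiable, consider a known function evaluated on a grid. The sketch below assumes one common choice of constraint, in the style of functional ANOVA: the main effects average to zero, and the interaction averages to zero along each axis. The slides do not state which constraints the authors use, and the function `decompose_on_grid` and the test surface are hypothetical.

```python
import numpy as np


def decompose_on_grid(F):
    """Split F[a, b] = f(z_a, x_b) into mu + f_z + f_x + f_zx.

    Assumed constraints: f_z and f_x average to zero over the grid,
    and f_zx averages to zero along each axis.
    """
    mu = F.mean()
    f_z = F.mean(axis=1) - mu  # main effect of z
    f_x = F.mean(axis=0) - mu  # main effect of x
    f_zx = F - mu - f_z[:, None] - f_x[None, :]  # remaining interaction
    return mu, f_z, f_x, f_zx


z = np.linspace(-2.0, 2.0, 41)
x = np.linspace(-1.0, 1.0, 21)
# Hypothetical surface: offset + non-linear z effect + linear x effect + interaction.
F = 1.0 + np.sin(z)[:, None] + 0.5 * x[None, :] + 0.3 * z[:, None] * x[None, :]

mu, f_z, f_x, f_zx = decompose_on_grid(F)
```

Without the zero-average constraints, a constant could be moved freely between `mu` and `f_z`, or a function of `z` between `f_z` and `f_zx`, while leaving the sum unchanged. With them, each component is pinned down uniquely on this grid.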

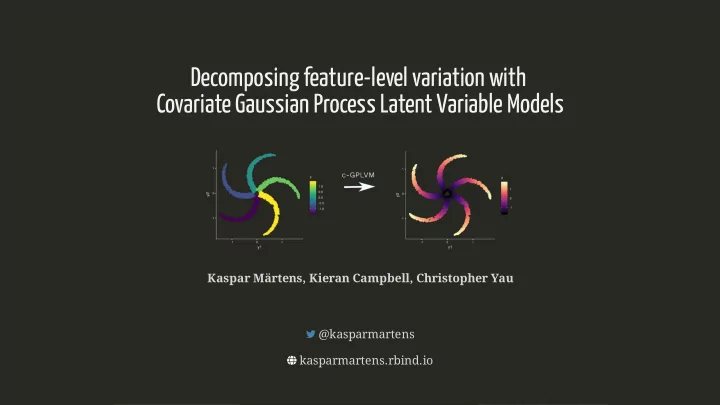

Decomposing feature-level variation with Covariate Gaussian Process Latent Variable Models

Kaspar Märtens, Kieran Campbell, Christopher Yau

@kasparmartens | kasparmartens.rbind.io | Poster #261