SLIDE 1

Formal connection to moments: $M_X(t) = \sum_{k=0}^{\infty} E[(tX)^k]/k! = \sum_{k=0}^{\infty} E[X^k]\, t^k/k!$
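The expansion of the moment generating function $M_X(t) = E[e^{tX}]$ as the power series $\sum_k E[X^k]\, t^k/k!$ can be checked numerically. The sketch below is only an illustration: it assumes a fair six-sided die as the distribution (not from the slides), and the names `moment`, `mgf_direct`, and `mgf_series` are hypothetical helpers. For a bounded variable like this one the interchange of sum and expectation is justified, so the two computations should agree up to rounding.

```python
import math
from fractions import Fraction

# Assumed example distribution (not from the slides): a fair six-sided die.
values = [1, 2, 3, 4, 5, 6]
probs = [Fraction(1, 6)] * 6


def moment(k):
    """k-th raw moment E[X^k], computed exactly with rationals."""
    return sum(p * v**k for v, p in zip(values, probs))


def mgf_direct(t):
    """M_X(t) = E[exp(tX)], computed straight from the definition."""
    return sum(float(p) * math.exp(t * v) for v, p in zip(values, probs))


def mgf_series(t, terms=60):
    """Partial sum of the moment series: sum over k of E[X^k] t^k / k!."""
    return sum(
        float(moment(k)) * t**k / math.factorial(k) for k in range(terms)
    )


if __name__ == "__main__":
    t = 0.5
    print("direct:", mgf_direct(t))
    print("series:", mgf_series(t))
```

The moments are kept as exact fractions so that any discrepancy between the two values comes only from truncating the series and from floating-point rounding in the final sum.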

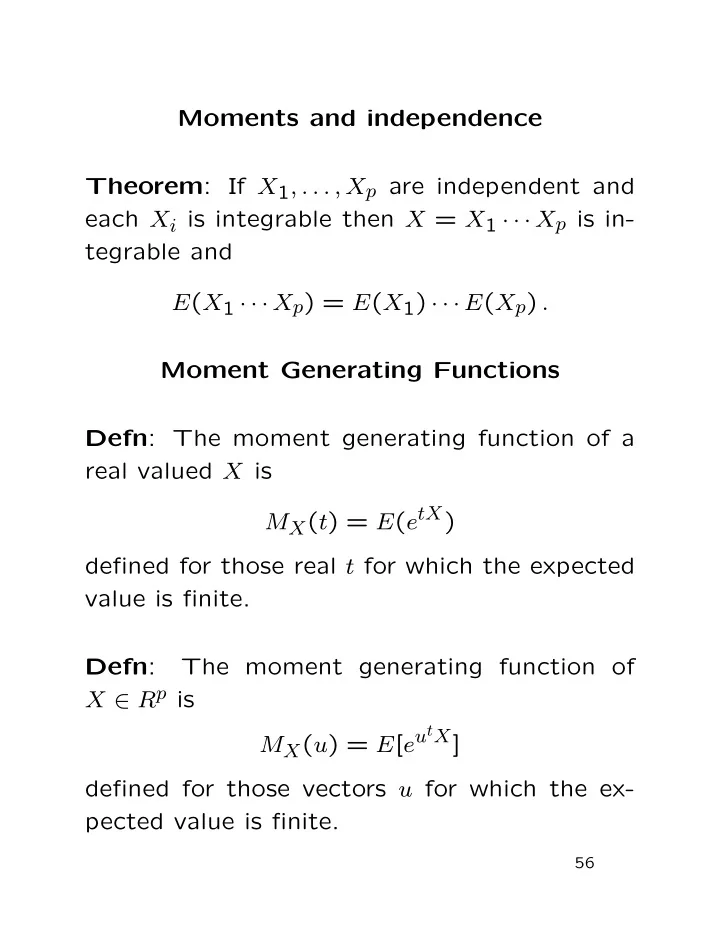


Moments and independence

Theorem: If $X_1, \ldots, X_p$ are independent and each $X_i$ is integrable, then $X = X_1 \cdots X_p$ is integrable and $E(X_1 \cdots X_p) = E(X_1) \cdots E(X_p)$.

Moment Generating Functions

Defn: The moment