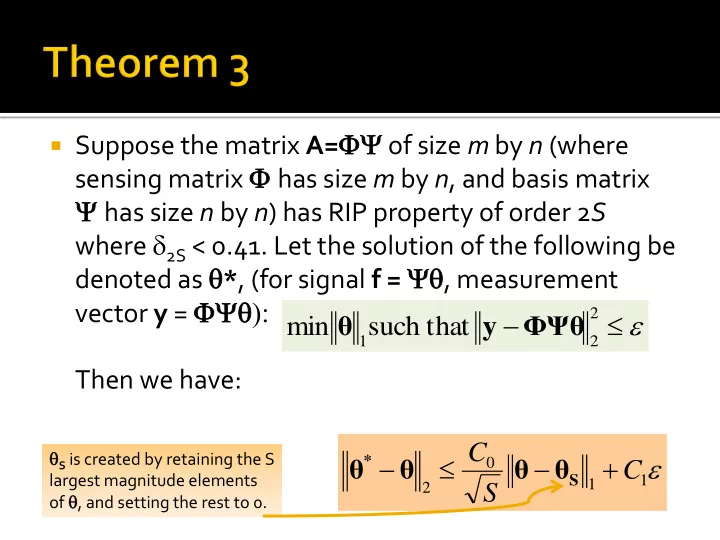

SLIDE 1 Suppose the matrix A=FY of size m by n (where

sensing matrix F has size m by n, and basis matrix Y has size n by n) has RIP property of order 2S where d2S < 0.41. Let the solution of the following be denoted as q*, (for signal f = Yq, measurement vector y = FYq): Then we have:

1 1 2

C S C

S *

θ θ θ θ

qS is created by retaining the S largest magnitude elements

- f q, and setting the rest to 0.

2 2 1

that such min ΦΨθ y θ

SLIDE 2

The proof can be found in a paper by Candes

“The restricted isometry property and its implications for compressed sensing”, published in 2008.

The proof as such is just between 1 and 2

pages long.

SLIDE 3 The proof uses various properties of vectors

in Euclidean space.

The Cauchy Schwartz inequality: The triangle inequality: Reverse triangle inequality:

2 2

| | w v w v

2 2 2

w v w v

2 2 2

w v w v

SLIDE 4 Relationship between various norms: Refer to Theorem 3. For the sake of simplicity

alone, we shall assume Y to be the identity matrix.

Hence x = θ. Even if Y were not identity, the

proof as such does not change.

vector sparse

is if | |

2 2 1 2

k k n v v v 1 v v v

SLIDE 5 We have:

2 ) (

2 2 2

y Φx y Φx x x Φ

* *

Triangle inequality Given constraint + feasibility

This result is called the Tube constraint. y=Φx

2ε x0

In the following, x0 = true signal

SLIDE 6 Define vector . Decompose h into vectors hT0, hT1, hT2,… which

are all at the most s-sparse.

T0 contains indices corresponding to the s

largest elements of x, T1 contains indices corresponding to the s largest elements of h(T0-c) = h-hT0, T2 contains indices corresponding to the s largest indices of h(T0 U T1)-c = h-hT0-hT1, and so

*

x x h

SLIDE 7 We will assume x0 is s-sparse (later we will

remove this requirement).

We now establish the so-called cone

constraint.

1 1 1 *

x h x x

1 1 1 1 1 1 1

| | | |

T c T c T T T i i i T i i i

h h x h h x x h x h x

c

The vector h has its origin at x0 and it lies in the intersection of the L1 ball and the tube. The vector h must also necessarily obey this constraint – the cone constraint. 0-valued

SLIDE 8 We will now prove that such a vector h is

- rthogonal to the null-space of Φ.

In fact, we will prove that . In other words, we will prove that the

magnitude of h is not much greater than 2ε, which means that the solution x* of the

- ptimization problem is close enough to x0.

2 2

h Φh

SLIDE 9 In step 3, we use a bunch of algebraic

manipulations to prove that the magnitude of h outside of T0 U T1 is upper bounded by the magnitude of h on T0 U T1 .

In other words, we prove that: The algebra involves various inequalities

mentioned earlier.

2 2 2 T1 T1 T0 T0 T0 T0 c

(T0

h h h

SLIDE 10 We now prove that the magnitude of h on

T0 U T1 is upper bounded by a reasonable quantity.

For this, we show using the RIP of Φ of order

2s and a series of manipulations that:

This implies that

) 2 1 2 ( ) 1 (

2 2 2 2 1 2 2 1 2 2 1 2 T s s T T T T T T s

h h h h d d d F

) 1 2 ( 1 1 4 ) 1 2 ( 1 1 2 1 2 1 1 2

2 2 2 2 2 2 1 2 1 2 2 2 2 2 1

s s s s T T T T s s s s T T

h h h h d d d d d d d d

SLIDE 11 The steps change a bit. The cone constraint

changes to:

1 1 1 *

x h x x

1 , 1 1 1 1 , 1 1 1 , 1

2 | | | |

c T T c T c T c T T T T i i i T i i i

x h h x x h h x x h x h x

c

SLIDE 12 All the other steps remain as is, except the

last one which produces the following bound:

s x x h

T s s s s 1 , 2 2 2 2 2

) 1 2 ( 1 ) 1 2 ( 1 ) 2 1 ( 1 1 2 d d d d

SLIDE 13 Step 3 of the proof uses the following

corollary of the RIP for two s-sparse unit vectors with disjoint support:

Proof of corollary:

s 2

| | d

2 1 Φx

Φx

) )

, 1 ) 2 )( 1 ( ) 2 )( 1 ( 4 1 ) 1 ( ) 1 ( 4 1 4 1 | | RIP by , ) 1 ( ) ( ) 1 (

1 2 2 2 2 2 2 2 2 2 2 2 2 2 2 2 2 2 2 2 2

2 2 1 2 1 2 1 2 1 2 1 2 1 2 1 2 1 2 1 2 1 2 1 2 1 2 1

x x x x x x x x x x x x x x x x x x x x x x x x x x x x

s s s s s s S

d d d d d d d Φ Φ Φ Φ Φ Φ Φ

SLIDE 14 This step also uses the following corollary of

the RIP for two s-sparse unit vectors with disjoint support:

What if the original vectors x1 and x2 were not

unit-vectors, but both were s-sparse?

s 2

| | d

2 1 Φx

Φx

2 2 2 2 2 2

| | | |

2 1 2 1 2 1 2 1

x x Φx Φx x x Φx Φx

s s

d d

SLIDE 15 The bound is Note the requirement that δ2s should be less than 20.5-

1.

You can prove that the two constant factors – one

before and the other before |x0-x0,TO|1, are both increasing functions of δ2s in the domain [0,1].

So sensing matrices with smaller values of δ2s are

always nicer!

1 2 2 2 2 2

) 2 1 ( 1 ) 1 2 ( 1 1 ) 2 1 ( 1 1 4

T0 T0 0, 0,

x x h

s s s s

s d d d d

SLIDE 16 Suppose the matrix A=FY of size m by n (where

sensing matrix F has size m by n, and basis matrix Y has size n by n) has RIP property of order S where dS < 0.307. Let the solution of the following be denoted as q*, (for signal f = Yq, measurement vector y=FYq): Then we have:

d d

k k

S 307 . 1 ) 307 . ( 1

1 2 S *

θ θ θ θ

qS is created by retaining the S largest magnitude elements

- f q, and setting the rest to 0.

2 2 1

that such min ΦΨθ y θ

SLIDE 17

Theorems 3,5,6 refer to orthonormal bases for

the signal to have sparse or compressible representations.

However that is not a necessary condition. There exist the so-called “over-complete

bases” in which the number of columns exceeds the number of rows (n x K, K > n).

Such matrices afford even sparser signal

representations.

SLIDE 18 Why? We explain with an example. A cosine wave (with grid-aligned frequency) will have a sparse

representation in the DCT basis V1.

An impulse signal has sparse representation in the identity basis

V2.

Now consider a signal which is the superposition of a small

number of cosines and impulses.

The combined signal has sparse representation in neither the DCT

basis nor the identity basis.

But the combined signal will have a sparse representation in the

combined dictionary [V1 V2].

SLIDE 19

We know that certain classes of random

matrices satisfy the RIP with very high probability.

However, we also know that small RICs are

desirable.

This gives rise to the question: Can we design

matrices with smaller RIC than a randomly generated matrix?

SLIDE 20 Unfortunately, there is no known efficient

algorithm for even computing the RIC given a fixed matrix!

But we know that the mutual coherence of ΦY

is an upper bound to the RIC:

So we can design a CS matrix by starting with a

random one, and then performing a gradient descent on the mutual coherence to reach a matrix with a smaller mutual coherence!

) 1 ( s

s

d

SLIDE 21 The procedure is summarized below:

} )) ( ( { e convergenc until Repeat . matrix by a pick Randomly ΦΨ Φ Φ Φ Φ n m

Pick the step-size adaptively so that you actually descend on the mutual coherence.

2 2

) ( ) ( ) ( ) ( max ) (

j j i i j i

ΦΨ ΦΨ ΦΨ ΦΨ ΦΨ

SLIDE 22 The aforementioned is one example of a

procedure to “design” a CS matrix – as

- pposed to picking one randomly.

Note that mutual coherence has one more

advantage over RIC – the former is not tied to any particular sparsity level!

But one must bear in mind that the mutual

coherence is an upper bound to the RIC!

SLIDE 23 The main problem is how to find a derivative

- f the “max” function which is non-

differentiable!

Use the softmax function which is

differentiable:

n i i n i i

x x

1 1

} max{ ) exp( log 1 lim

SLIDE 24

This method can now be used to design CS matrices. As the mutual coherence function is expected to be

very non-convex, one must run a multi-start strategy in practice.

For each “start”, you begin with a random Φ, do a

gradient descent on till convergence.

Repeat this procedure many times, each time

beginning from a different randomly chosen initial condition.

Choose the value of that is the smallest – among all

these starts.

SLIDE 25

In many cases, one needs additional constraints on

the matrix Φ.

For example, in a Hitomi video camera architecture, Φ

is a concatenation of non-negative and diagonal matrices.

The non-negativity can be imposed by means of

projected gradient descent.

See the next slide for the modified algorithm to

maintain non-negativity.

SLIDE 26 https://arxiv.org/abs/1609.0 2135

SLIDE 27 } 0. to in entries negative Set )) ( ( { e convergenc until Repeat . matrix by a pick Randomly Φ Φ Φ Φ Φ Φ n m

Pick the step-size adaptively so that you actually descend on the mutual coherence after setting the negative entries to 0.

SLIDE 28 This method does not directly target but

instead considers the Gram matrix DTD where

The aim is to design Φ in such a way that the

Gram matrix resembles the identity matrix as much as possible, in other words we want:

. normalized

columns all with ΦΨ D I ΦΨ Φ Ψ

T T

SLIDE 29 We have extensively examined the issue of

reconstruction of signals or images from compressive reconstructions – algorithms, systems as well as theory (theorems).

Now imagine you had compressive measurements for

each of a set of K classes of images.

The task is to classify the measurements into one of the

K categories without intermediate reconstruction.

This is called as the problem of compressive

classification.

SLIDE 30 Consider a vector y which is a noisy measurement

- f vector x in the following way:

y = x + n, n ~ N(0,σ2).

Let us suppose that x belongs to one of P classes,

each class containing a single representative vector si, 1 <= i <= P.

The likelihood that y is a noisy sample from the ith

class is given as:

2 2

2 exp 2 1 ) | (

i i

s y s y p

SLIDE 31 The maximum likelihood classifiers assigns to

y the class j such that

By using the –log, we see that this reduces to

a nearest neighbour classifier with Euclidean distance.

2 2 } ,..., 2 , 1 {

2 exp 2 1 ) | ( ) | ( max arg

i i k

s y s y s y p p j

P k

SLIDE 32 Consider the earlier problem was an image

classification problem where we had P image templates in a database.

We observed Gaussian noisy versions of one of these

templates and wanted to determine which one it was.

Now in addition, let us suppose that the noisy version

- f the image were acquired in some different “pose”,

i.e. with some translations and/or rotation.

Let the pose parameters be denoted by a vector θ

which belong to a set of values Θ.

In such a case, this becomes a joint problem – of

classification as well as pose estimation.

SLIDE 33 The problem is solved as follows:

) , | ( argmax ~ 2 ) ~ ( exp 2 1 ) ~ , | ( ) ~ , | ( max arg

2 2 } ,..., 2 , 1 {

θ s y θ θ s y θ s y θ s y

i θ i i i i i k k

p p p j

P k

This denotes image si deformed by pose parameter tilde(θ)i. By “deformation” we mean rotation and/or translation in this example. In general, it could mean any

- ther type of transformation

including geometric scaling, blurring, etc.

SLIDE 34

If the parameters θ denoted pure translation,

then one way to classify y is to determine for which i, the following quantity is maximized: where t = (x,y) denotes spatial coordinates.

This is called a matched filter and it is

equivalent to GMLC if all the candidate signals si had the same magnitude, and we assume additive white Gaussian noise.

t t y θ t si d ) ( ) (

SLIDE 35 Consider the following compressive

acquisition model:

The MLC for this case is now:

n m R R x R y N x y

n m n m

F F

,

, , ), , ( ~ ,

2

F F F

2 2 } ,..., 2 , 1 {

2 exp 2 1 ) | ( ) | ( max arg

i i k

s y s y s y p p j

P k

SLIDE 36 But the compressive measurement y could be

acquired from an image which was acquired in a different pose than any of the images in the database.

The GMLC is now given as:

) , | ( argmax ~ 2 ) ~ ( exp 2 1 ) ~ , | ( ) ~ , | ( max arg

2 2 } ,..., 2 , 1 {

θ s y θ θ s y θ s y θ s y

i θ i i i i i k k

F F F F

p p p j

P k

This has an interesting name – the smashed filter (derived from the name “matched filter”), taking into account the compressive nature of the measurements.