Memory and I/O buses

I/O bus

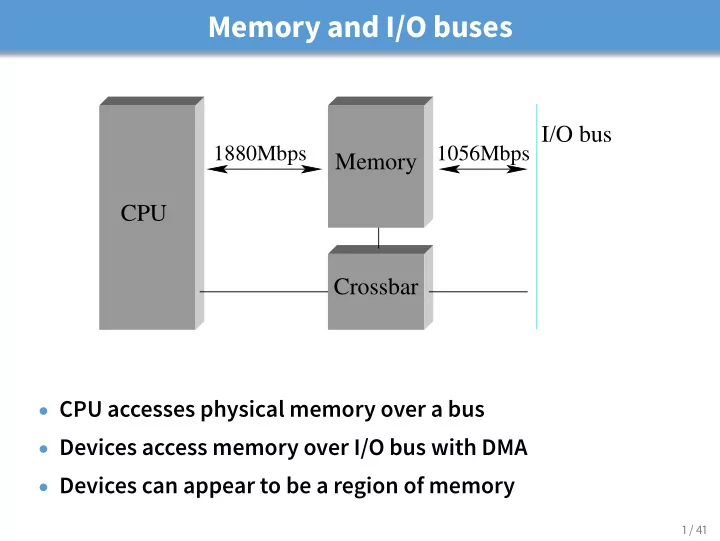

1880Mbps 1056Mbps

Crossbar Memory CPU

- CPU accesses physical memory over a bus

- Devices access memory over I/O bus with DMA

- Devices can appear to be a region of memory
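The three points above can be illustrated with a toy model. This is a minimal sketch, not a description of real hardware: the class names (`Ram`, `ToyDevice`, `Bus`), the register layout, and the address `0xF000` are all invented for illustration. It shows a bus decoding addresses so that device registers look like a region of memory, and a device writing into memory on its own, which is roughly what DMA provides.

```python
class Ram:
    """Plain byte-addressable memory."""
    def __init__(self, size):
        self.data = bytearray(size)

    def read(self, offset):
        return self.data[offset]

    def write(self, offset, value):
        self.data[offset] = value & 0xFF


class ToyDevice:
    """Hypothetical device with two one-byte registers and DMA capability."""
    STATUS, DATA = 0, 1

    def __init__(self):
        self.regs = bytearray(2)

    def read(self, offset):
        return self.regs[offset]

    def write(self, offset, value):
        self.regs[offset] = value & 0xFF

    def dma_write(self, ram, addr, payload):
        # The device copies data into memory itself, without the CPU
        # touching each byte, then flags completion in its status register.
        ram.data[addr:addr + len(payload)] = payload
        self.regs[self.STATUS] = 1


class Bus:
    """Routes each physical address to whichever component claims it."""
    def __init__(self):
        self.regions = []  # entries are (base, size, target)

    def map(self, base, size, target):
        self.regions.append((base, size, target))

    def _decode(self, addr):
        for base, size, target in self.regions:
            if base <= addr < base + size:
                return target, addr - base
        raise ValueError(f"bus error: no target at {addr:#x}")

    def load(self, addr):
        target, offset = self._decode(addr)
        return target.read(offset)

    def store(self, addr, value):
        target, offset = self._decode(addr)
        target.write(offset, value)


ram = Ram(0x1000)
dev = ToyDevice()
bus = Bus()
bus.map(0x0000, 0x1000, ram)   # ordinary memory
bus.map(0xF000, 2, dev)        # device registers appear as memory (made-up address)

bus.store(0xF000 + ToyDevice.DATA, 0x42)   # a CPU store lands in a device register
dev.dma_write(ram, 0x100, b"packet")       # the device fills memory via DMA
```

After this runs, a load from `0xF000` returns the device's status register rather than a byte of RAM, and the bytes at `0x100` hold the data the device placed there.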

Realistic ~2005 PC

Figure labels: buffer descriptor list; memory buffers of 100, 1400, 1500, 1500, and 1500.
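A buffer descriptor list of this shape is commonly used for scatter/gather I/O, where one frame is spread across several memory buffers. The following is a minimal sketch under that assumption; the slide text that explained the figure did not survive, so the `Descriptor` fields, the back-to-back buffer layout, and the helper names are hypothetical. Only the five buffer sizes come from the figure, and they are treated as byte counts here.

```python
from dataclasses import dataclass


@dataclass
class Descriptor:
    addr: int     # start of the buffer in memory (addresses here are invented)
    length: int   # buffer size in bytes


def build_descriptors(lengths, base=0):
    """Lay buffers out back to back and return one descriptor per buffer."""
    descs, addr = [], base
    for n in lengths:
        descs.append(Descriptor(addr, n))
        addr += n
    return descs


def scatter(memory, descs, frame):
    """Copy an incoming frame into successive buffers; return how many were used."""
    used, pos = 0, 0
    for d in descs:
        if pos >= len(frame):
            break
        chunk = frame[pos:pos + d.length]
        memory[d.addr:d.addr + len(chunk)] = chunk
        pos += len(chunk)
        used += 1
    if pos < len(frame):
        raise ValueError("frame larger than the buffers provided")
    return used


def gather(memory, descs):
    """Concatenate the buffers named by descs into one outgoing frame."""
    return b"".join(bytes(memory[d.addr:d.addr + d.length]) for d in descs)


lengths = [100, 1400, 1500, 1500, 1500]   # buffer sizes shown in the figure
descs = build_descriptors(lengths)
memory = bytearray(sum(lengths))

frame = bytes(i % 251 for i in range(1500))
used = scatter(memory, descs, frame)      # fills the 100- and 1400-byte buffers
rebuilt = gather(memory, descs[:used])    # reassembles the same 1500 bytes
```

Here a 1500-byte frame fills exactly the first two buffers (100 + 1400), which may be why the figure pairs a small buffer with a larger one, for example a header and a payload, though that reading is a guess.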

Figure labels: host I/O bus, adaptor (bus interface, link interface), network link.
