SLIDE 1

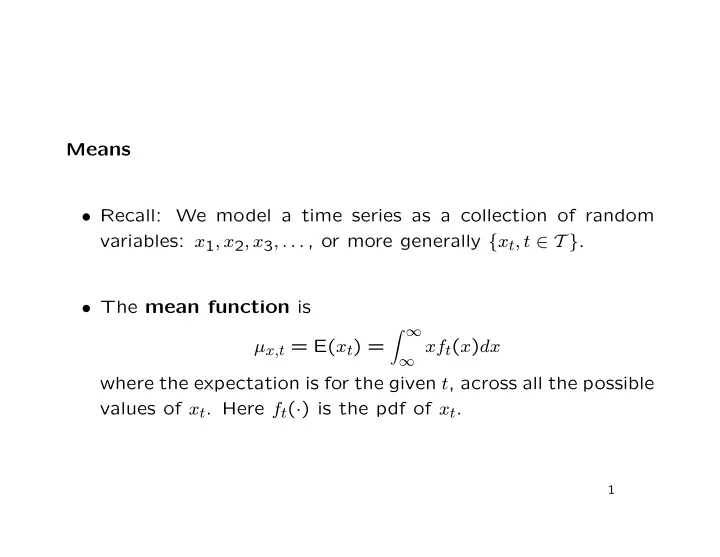

Means

- Recall: We model a time series as a collection of random variables $x_1, x_2, x_3, \ldots$, or more generally $\{x_t,\ t \in T\}$.

- The mean function is