*** St 512 ***

- D. A. Dickey

MATRICES

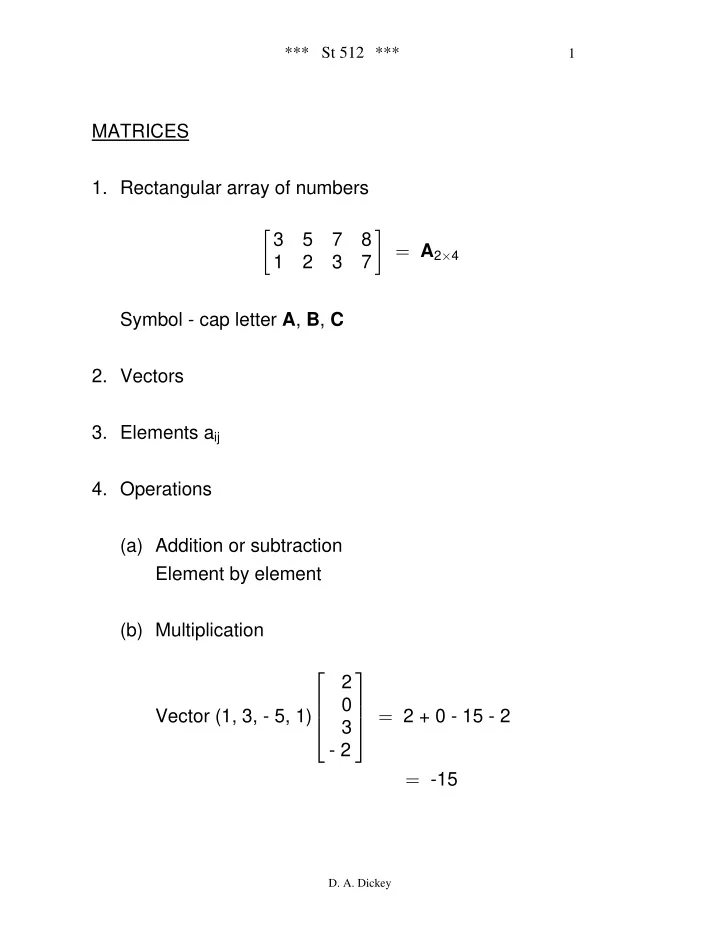

- 1. Rectangular array of numbers

$$
A_{2 \times 4} = \begin{bmatrix} 3 & 5 & 7 & 8 \\ 1 & 2 & 3 & 7 \end{bmatrix}
$$

Symbol: capital letter, e.g. $A$, $B$, $C$

- 2. Vectors

- 3. Elements $a_{ij}$ (the entry in row $i$, column $j$)

- 4. Operations

(a) Addition or subtraction: element by element

(b) Multiplication: vector (row times column)

$$
(1, 3, -5, 1) \begin{bmatrix} 2 \\ 0 \\ 3 \\ -2 \end{bmatrix} = 2 + 0 - 15 - 2 = -15
$$
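The items above can be sketched in a few lines of plain Python. This is only an illustrative sketch, not part of the original notes. It assumes the example matrix reads as a 2 × 4 array with rows (3, 5, 7, 8) and (1, 2, 3, 7), and that the column vector in the product is (2, 0, 3, -2), which is what the partial products 2 + 0 - 15 - 2 imply. The matrix `B` and the helper names `shape`, `element`, `add`, and `row_times_column` are hypothetical, introduced here for illustration.

```python
# The 2 x 4 matrix A (assumed reading of the notes), stored as a list of rows.
A = [[3, 5, 7, 8],
     [1, 2, 3, 7]]

# B is a made-up second 2 x 4 matrix, used only to illustrate addition.
B = [[1, 0, 2, 1],
     [4, 1, 0, 3]]


def shape(M):
    """Return (number of rows, number of columns)."""
    return len(M), len(M[0])


def element(M, i, j):
    """Return a_ij using 1-based row and column indices."""
    return M[i - 1][j - 1]


def add(M, N):
    """Add two matrices element by element; dimensions must match."""
    if shape(M) != shape(N):
        raise ValueError("matrices must have the same dimensions")
    return [[m + n for m, n in zip(row_m, row_n)]
            for row_m, row_n in zip(M, N)]


def row_times_column(row, col):
    """Multiply a row vector by a column vector of the same length."""
    if len(row) != len(col):
        raise ValueError("vectors must have the same length")
    return sum(r * c for r, c in zip(row, col))


print(shape(A))          # (2, 4)
print(element(A, 2, 3))  # 3
print(add(A, B))         # [[4, 5, 9, 9], [5, 3, 3, 10]]
print(row_times_column([1, 3, -5, 1], [2, 0, 3, -2]))  # -15
```

The last line reproduces the product in (b): 1(2) + 3(0) + (-5)(3) + 1(-2) = -15.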