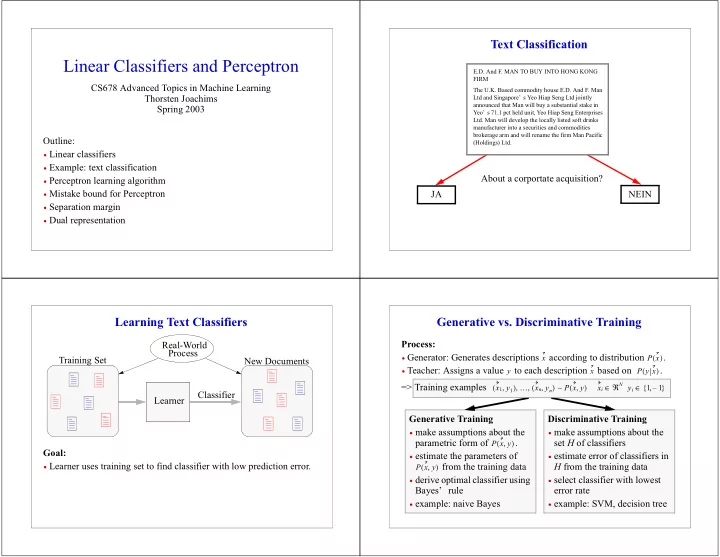

LinearClassifiersandPerceptron

CS678AdvancedTopicsinMachineLearning ThorstenJoachims Spring2003 Outline:

- Linearclassifiers

- Example:textclassification

- Perceptronlearningalgorithm

- MistakeboundforPerceptron

- Separationmargin

- Dualrepresentation

TextClassification

E.D.AndF.MANTOBUYINTOHONGKONG FIRM TheU.K.BasedcommodityhouseE.D.AndF.Man LtdandSingapore’ sYeoHiapSengLtdjointly announcedthatManwillbuyasubstantialstakein Yeo’ s71.1pctheldunit,YeoHiapSengEnterprises Ltd.Manwilldevelopthelocallylistedsoftdrinks manufacturerintoasecuritiesandcommodities brokeragearmandwillrenamethefirmManPacific (Holdings)Ltd.

Aboutacorportateacquisition? JA NEIN

LearningTextClassifiers

Goal:

- Learnerusestrainingsettofindclassifierwithlowpredictionerror.

TrainingSet NewDocuments Learner Classifier Real-World Process

Generativevs.DiscriminativeTraining

Process:

- Generator:Generatesdescriptions accordingtodistribution

.

- Teacher:Assignsavalue toeachdescription basedon

.

x P x ( ) y x P y x ( )

DiscriminativeTraining

- makeassumptionsaboutthe

setHofclassifiers

- estimateerrorofclassifiersin

Hfromthetrainingdata

- selectclassifierwithlowest

errorrate

- example:SVM,decisiontree

GenerativeTraining

- makeassumptionsaboutthe

parametricformof .

- estimatetheparametersof

fromthetrainingdata

- deriveoptimalclassifierusing

Bayes’ rule

- example:naiveBayes

P x y , ( ) P x y , ( )

=>Trainingexamples

x1 y1 , ( ) … xn yn , ( ) , , P x y , ( ) xi ℜ

N y

∈

i

∼ 1 1 – { , } ∈