Lecture 9: Hidden Markov Model Kai-Wei Chang CS @ University of - PowerPoint PPT Presentation

Lecture 9: Hidden Markov Model Kai-Wei Chang CS @ University of Virginia kw@kwchang.net Course webpage: http://kwchang.net/teaching/NLP16 CS6501 Natural Language Processing 1

This lecture
- Hidden Markov Model
- Different views of HMM
- HMM in supervised learning setting

Recap: Parts of Speech
- Traditional parts of speech
- ~8 of them

Recap: Tagset
- Penn TreeBank tagset, 45 tags:
  - PRP$, WRB, WP$, VBG
  - Penn POS annotations: The/DT grand/JJ jury/NN commented/VBD on/IN a/DT number/NN of/IN other/JJ topics/NNS ./.
- Universal Tag set, 12 tags
  - NOUN, VERB, ADJ, ADV, PRON, DET, ADP, NUM, CONJ, PRT, “.”, X

Recap: POS Tagging vs. Word clustering
- Words often have more than one POS: back
  - The back door = JJ
  - On my back = NN
  - Win the voters back = RB
  - Promised to back the bill = VB
- Syntax vs. Semantics (details later)

These examples are from Dekang Lin.

Recap: POS tag sequences
- Some tag sequences are more likely to occur than others
- POS Ngram view: https://books.google.com/ngrams/graph?content=_ADJ_+_NOUN_%2C_ADV_+_NOUN_%2C+_ADV_+_VERB_

Existing methods often model POS tagging as a sequence tagging problem.

Evaluation
- How many words in the unseen test data can be tagged correctly?
- Usually evaluated on Penn Treebank
- State of the art ~97%
- Trivial baseline (most likely tag) ~94%
- Human performance ~97%
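The "most likely tag" baseline and the accuracy metric can be sketched in a few lines. This is a minimal illustration, not the evaluation script used for the numbers above: the tiny training and test sets reuse the "back" examples from the recap, and the function names and the `NN` fallback for unseen words are assumptions.

```python
from collections import Counter, defaultdict

def train_most_frequent_tag(tagged_sentences):
    """Map each word to the tag it received most often in training."""
    counts = defaultdict(Counter)
    for sentence in tagged_sentences:
        for word, tag in sentence:
            counts[word][tag] += 1
    return {word: c.most_common(1)[0][0] for word, c in counts.items()}

def accuracy(model, tagged_sentences, default_tag="NN"):
    """Fraction of tokens tagged correctly; unseen words get default_tag (an assumption)."""
    correct = total = 0
    for sentence in tagged_sentences:
        for word, gold in sentence:
            correct += model.get(word, default_tag) == gold
            total += 1
    return correct / total

# Toy data (illustrative only).
train = [
    [("the", "DT"), ("back", "NN")],
    [("my", "PRP$"), ("back", "NN")],
    [("the", "DT"), ("back", "JJ"), ("door", "NN")],
]
test = [[("the", "DT"), ("back", "JJ"), ("door", "NN")]]

model = train_most_frequent_tag(train)
acc = accuracy(model, test)
print(f"accuracy = {acc:.3f}")
```

The baseline ignores context entirely, so it always tags "back" the same way; the models in the rest of the lecture add tag-to-tag information to fix such cases.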

Building a POS tagger
- Supervised learning
- Assume linguists have annotated several examples
- Inputs to the POS Tagger:
  - Tag set: DT, JJ, NN, VBD…
  - Annotated text: The/DT grand/JJ jury/NN commented/VBD on/IN a/DT number/NN of/IN other/JJ topics/NNS ./.

POS induction
- Unsupervised learning
- Assume we only have an unannotated corpus
- Inputs to the POS Tagger:
  - Tag set: DT, JJ, NN, VBD…
  - Unannotated text: The grand jury commented on a number of other topics .

TODAY: Hidden Markov Model
- We focus on the supervised learning setting
- What is the most likely sequence of tags for the given sequence of words w?
- We will talk about other ML models for this type of prediction task later.

Let’s try
Don’t worry! There is no problem with your eyes or computer.
- a/DT d6g/NN 0s/VBZ chas05g/VBG a/DT cat/NN ./.
- a/DT f6x/NN 0s/VBZ 9u5505g/VBG ./.
- a/DT b6y/NN 0s/VBZ s05g05g/VBG ./.
- a/DT ha77y/JJ b09d/NN

What is the POS tag sequence of the following sentence?
a ha77y cat was s05g05g .

Let’s try
- a/DT d6g/NN 0s/VBZ chas05g/VBG a/DT cat/NN ./.
  - a/DT dog/NN is/VBZ chasing/VBG a/DT cat/NN ./.
- a/DT f6x/NN 0s/VBZ 9u5505g/VBG ./.
  - a/DT fox/NN is/VBZ running/VBG ./.
- a/DT b6y/NN 0s/VBZ s05g05g/VBG ./.
  - a/DT boy/NN is/VBZ singing/VBG ./.
- a/DT ha77y/JJ b09d/NN
  - a/DT happy/JJ bird/NN
- a ha77y cat was s05g05g .
  - a happy cat was singing .

How do you predict the tags?
- Two types of information are useful
  - Relations between words and tags
  - Relations between tags and tags
    - DT NN, DT JJ NN…
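Both kinds of information can be read off an annotated corpus by counting. The sketch below uses the four decoded toy sentences from the previous slide and turns counts into relative frequencies; the `<s>` start symbol and the helper names `p_trans` and `p_emit` are assumptions made for illustration.

```python
from collections import Counter

corpus = [
    "a/DT dog/NN is/VBZ chasing/VBG a/DT cat/NN ./.",
    "a/DT fox/NN is/VBZ running/VBG ./.",
    "a/DT boy/NN is/VBZ singing/VBG ./.",
    "a/DT happy/JJ bird/NN",
]

emission_counts = Counter()    # (tag, word) -> count
transition_counts = Counter()  # (previous tag, tag) -> count
tag_counts = Counter()         # tag -> count
context_counts = Counter()     # previous tag -> times it was followed by a tag

for line in corpus:
    prev = "<s>"  # hypothetical start-of-sentence symbol
    for token in line.split():
        word, tag = token.rsplit("/", 1)
        emission_counts[(tag, word)] += 1
        transition_counts[(prev, tag)] += 1
        tag_counts[tag] += 1
        context_counts[prev] += 1
        prev = tag

def p_trans(tag, prev):
    """Relative-frequency estimate of P(tag | prev)."""
    return transition_counts[(prev, tag)] / context_counts[prev]

def p_emit(word, tag):
    """Relative-frequency estimate of P(word | tag)."""
    return emission_counts[(tag, word)] / tag_counts[tag]

print(p_trans("NN", "DT"), p_trans("JJ", "DT"))
print(p_emit("dog", "NN"))
```

With so little data many probabilities are zero (for example, "was" was never seen), so a real tagger would need smoothing or an unknown-word model on top of these counts.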

Statistical POS tagging
- What is the most likely sequence of tags for the given sequence of words w?

P(DT JJ NN | a smart dog)
= P(DT JJ NN, a smart dog) / P(a smart dog)
∝ P(DT JJ NN, a smart dog)
= P(DT JJ NN) P(a smart dog | DT JJ NN)

Transition Probability
- Joint probability: $P(\mathbf{t}, \mathbf{w}) = P(\mathbf{t})\,P(\mathbf{w} \mid \mathbf{t})$
- $P(\mathbf{t}) = P(t_1, t_2, \ldots, t_n) = P(t_1)\,P(t_2 \mid t_1)\,P(t_3 \mid t_2, t_1) \cdots P(t_n \mid t_1 \ldots t_{n-1}) \approx P(t_1)\,P(t_2 \mid t_1)\,P(t_3 \mid t_2) \cdots P(t_n \mid t_{n-1}) = \prod_{i=1}^{n} P(t_i \mid t_{i-1})$ (Markov assumption)
- Bigram model over POS tags! (Similarly, we can define an n-gram model over POS tags, usually called a higher-order HMM.)

Emission Probability
- Joint probability: $P(\mathbf{t}, \mathbf{w}) = P(\mathbf{t})\,P(\mathbf{w} \mid \mathbf{t})$
- Assume words only depend on their POS tag
- $P(\mathbf{w} \mid \mathbf{t}) \approx P(w_1 \mid t_1)\,P(w_2 \mid t_2) \cdots P(w_n \mid t_n) = \prod_{i=1}^{n} P(w_i \mid t_i)$ (independence assumption)

i.e., P(a smart dog | DT JJ NN) = P(a | DT) P(smart | JJ) P(dog | NN)

Put them together
- Joint probability: $P(\mathbf{t}, \mathbf{w}) = P(\mathbf{t})\,P(\mathbf{w} \mid \mathbf{t})$
- $P(\mathbf{t}, \mathbf{w}) = P(t_1)\,P(t_2 \mid t_1)\,P(t_3 \mid t_2) \cdots P(t_n \mid t_{n-1})\; P(w_1 \mid t_1)\,P(w_2 \mid t_2) \cdots P(w_n \mid t_n) = \prod_{i=1}^{n} P(w_i \mid t_i)\,P(t_i \mid t_{i-1})$

e.g., P(a smart dog, DT JJ NN) = P(a | DT) P(smart | JJ) P(dog | NN) P(DT | start) P(JJ | DT) P(NN | JJ)
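The product above translates directly into a loop over (word, tag) pairs. The sketch below is only an illustration: every probability value is hypothetical, `<s>` stands in for the "start" symbol, and `joint_probability` is a made-up helper name.

```python
import math

# Hypothetical probabilities, chosen only to make the arithmetic easy to follow.
transition = {("<s>", "DT"): 0.5, ("DT", "JJ"): 0.2, ("JJ", "NN"): 0.6}
emission = {("DT", "a"): 0.3, ("JJ", "smart"): 0.01, ("NN", "dog"): 0.02}

def joint_probability(words, tags):
    """P(t, w) = prod_i P(w_i | t_i) * P(t_i | t_{i-1}), with t_0 = <s>."""
    prob = 1.0
    prev = "<s>"
    for word, tag in zip(words, tags):
        # Unseen transitions or emissions get probability 0 in this unsmoothed sketch.
        prob *= transition.get((prev, tag), 0.0) * emission.get((tag, word), 0.0)
        prev = tag
    return prob

joint = joint_probability(["a", "smart", "dog"], ["DT", "JJ", "NN"])
print(joint)
```

In practice the product of many small probabilities underflows, so implementations usually sum log probabilities instead.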

Put them together
- Two independence assumptions
  - Approximate P(t) by a bi(or N)-gram model
  - Assume each word depends only on its POS tag

Figure label: initial probability $p(t_1)$

HMMs as probabilistic FSA
Julia Hockenmaier: Intro to NLP

Table representation
Let $\lambda = \{A, B, \pi\}$ represent all parameters

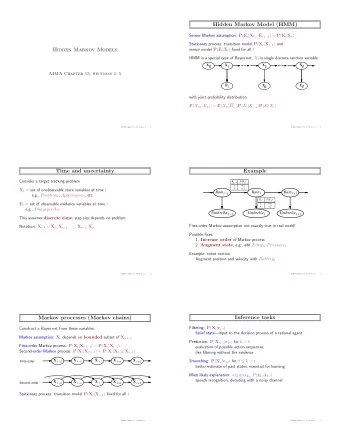

Hidden Markov Models (formal)
- States $T = t_1, t_2 \ldots t_N$
- Observations $W = w_1, w_2 \ldots w_N$
  - Each observation is a symbol from a vocabulary $V = \{v_1, v_2, \ldots, v_V\}$
- Transition probabilities
  - Transition probability matrix $A = \{a_{ij}\}$, with $a_{ij} = P(t_m = j \mid t_{m-1} = i)$, $1 \le i, j \le N$, where $m$ is a position in the sequence
- Observation likelihoods
  - Output probability matrix $B = \{b_i(k)\}$, with $b_i(k) = P(w_m = v_k \mid t_m = i)$
- Special initial probability vector $\pi$, with $\pi_i = P(t_1 = i)$, $1 \le i \le N$
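One way to hold the three parameter tables in code is a small container that also checks that every distribution sums to one. This is a sketch under assumptions: the class name, the `validate` method, and all numbers are hypothetical and only illustrate the shapes of A, B, and π for two states and three vocabulary symbols.

```python
from dataclasses import dataclass
import math

@dataclass
class HMM:
    A: list   # A[i][j] = P(next state j | current state i)
    B: list   # B[i][k] = P(symbol v_k | state i)
    pi: list  # pi[i]   = P(first state is i)

    def validate(self):
        """Return True if pi and every row of A and B is a probability distribution."""
        rows = [self.pi] + list(self.A) + list(self.B)
        return all(
            all(p >= 0.0 for p in row) and math.isclose(sum(row), 1.0)
            for row in rows
        )

# Hypothetical parameters: 2 states, 3 vocabulary symbols.
hmm = HMM(
    A=[[0.7, 0.3], [0.4, 0.6]],
    B=[[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]],
    pi=[0.6, 0.4],
)
print(hmm.validate())
```

If the model has an explicit stop state, as in the ice-cream example later in the lecture, the rows of A over ordinary states sum to less than one and the check would need to include the stop probability.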

How to build a second-order HMM?
- Second-order HMM
  - Trigram model over POS tags
- $P(\mathbf{t}) = \prod_{i=1}^{n} P(t_i \mid t_{i-1}, t_{i-2})$
- $P(\mathbf{w}, \mathbf{t}) = \prod_{i=1}^{n} P(t_i \mid t_{i-1}, t_{i-2})\,P(w_i \mid t_i)$
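As a worked instance, the "a smart dog" example expands as follows under the second-order model. Padding the history with two start symbols ($t_0 = t_{-1} = \text{start}$) is an assumption made here so that the first two factors are well defined.

$$
\begin{aligned}
P(\text{a smart dog}, \text{DT JJ NN}) ={}& P(\text{DT} \mid \text{start}, \text{start})\,P(\text{a} \mid \text{DT}) \\
&\times P(\text{JJ} \mid \text{DT}, \text{start})\,P(\text{smart} \mid \text{JJ}) \\
&\times P(\text{NN} \mid \text{JJ}, \text{DT})\,P(\text{dog} \mid \text{NN})
\end{aligned}
$$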

Probabilistic FSA for second-order HMM
Julia Hockenmaier: Intro to NLP

Prediction in generative model
- Inference: What is the most likely sequence of tags for the given sequence of words w?
- What are the latent states that most likely generate the sequence of words w?

Figure label: initial probability $p(t_1)$

Example: The Verb “race”
- Secretariat/NNP is/VBZ expected/VBN to/TO race/VB tomorrow/NR
- People/NNS continue/VB to/TO inquire/VB the/DT reason/NN for/IN the/DT race/NN for/IN outer/JJ space/NN
- How do we pick the right tag?

Disambiguating “race”
- P(NN|TO) = .00047
- P(VB|TO) = .83
- P(race|NN) = .00057
- P(race|VB) = .00012
- P(NR|VB) = .0027
- P(NR|NN) = .0012
- P(VB|TO) P(NR|VB) P(race|VB) = .00000027
- P(NN|TO) P(NR|NN) P(race|NN) = .00000000032
- So we (correctly) choose the verb reading.
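The two products can be checked directly. The snippet below only multiplies the six probabilities listed on the slide; the variable names are made up for illustration.

```python
import math

# Probabilities copied from the slide.
p_vb_given_to, p_nn_given_to = 0.83, 0.00047
p_race_given_vb, p_race_given_nn = 0.00012, 0.00057
p_nr_given_vb, p_nr_given_nn = 0.0027, 0.0012

# Score of each reading of "race" in "to race tomorrow".
score_vb = p_vb_given_to * p_nr_given_vb * p_race_given_vb
score_nn = p_nn_given_to * p_nr_given_nn * p_race_given_nn

best = "VB" if score_vb > score_nn else "NN"
print(f"VB: {score_vb:.2e}  NN: {score_nn:.2e}  choose {best}")
```

The verb reading wins by a factor of several hundred, driven almost entirely by P(VB|TO) being so much larger than P(NN|TO).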

Jason and his Ice Creams
- You are a climatologist in the year 2799
- Studying global warming
- You can’t find any records of the weather in Baltimore, MD for the summer of 2007
- But you find Jason Eisner’s diary
- Which lists how many ice creams Jason ate every day that summer
- Our job: figure out how hot it was

http://videolectures.net/hltss2010_eisner_plm/
http://www.cs.jhu.edu/~jason/papers/eisner.hmm.xls

(C)old day vs. (H)ot day

| | p(…\|C) | p(…\|H) | p(…\|START) |
|---|---|---|---|
| p(1\|…) (#cones) | 0.7 | 0.1 | |
| p(2\|…) (#cones) | 0.2 | 0.2 | |
| p(3\|…) (#cones) | 0.1 | 0.7 | |
| p(C\|…) | 0.8 | 0.1 | 0.5 |
| p(H\|…) | 0.1 | 0.8 | 0.5 |
| p(STOP\|…) | 0.1 | 0.1 | 0 |

Figure: Weather States that Best Explain Ice Cream Consumption (x-axis: Diary Day; series: Ice Creams, p(H))

Three basic problems for HMMs
- Likelihood of the input: how likely is it that the sentence “I love cat” occurs?
  - Compute $P(\mathbf{w} \mid \lambda)$ for the input $\mathbf{w}$ and HMM $\lambda$
- Decoding (tagging) the input: what are the POS tags of “I love cat”?
  - Find the best tag sequence $\arg\max_{\mathbf{t}} P(\mathbf{t} \mid \mathbf{w}, \lambda)$
- Estimation (learning): how to learn the model?
  - Find the best model parameters
  - Case 1: supervised, tags are annotated
  - Case 2: unsupervised, only unannotated text
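The likelihood problem can be solved without enumerating every state sequence by the standard forward recursion, which sums over states one position at a time. The sketch below applies it to the ice-cream HMM, reading the last table row as a stop probability (an assumption about the garbled label); the dictionary layout and function name are also assumptions.

```python
import math

STATES = ["C", "H"]
START = {"C": 0.5, "H": 0.5}                      # p(state | START)
TRANS = {"C": {"C": 0.8, "H": 0.1},               # TRANS[prev][next]
         "H": {"C": 0.1, "H": 0.8}}
STOP = {"C": 0.1, "H": 0.1}                       # assumed p(STOP | state)
EMIT = {"C": {1: 0.7, 2: 0.2, 3: 0.1},            # EMIT[state][#cones]
        "H": {1: 0.1, 2: 0.2, 3: 0.7}}

def forward_likelihood(obs):
    """P(obs | model), summing over all hidden weather sequences."""
    # alpha[s] = P(observations so far, current state = s)
    alpha = {s: START[s] * EMIT[s][obs[0]] for s in STATES}
    for o in obs[1:]:
        alpha = {
            s: sum(alpha[r] * TRANS[r][s] for r in STATES) * EMIT[s][o]
            for s in STATES
        }
    # Close the sequence with the stop transition.
    return sum(alpha[s] * STOP[s] for s in STATES)

likelihood = forward_likelihood([3, 1])
print(likelihood)
```

Each step costs a number of operations quadratic in the number of states, so the total work grows linearly with the length of the diary rather than exponentially.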

Three basic problems for HMMs
- Likelihood of the input: how likely is it that the sentence “I love cat” occurs?
  - Forward algorithm
- Decoding (tagging) the input: what are the POS tags of “I love cat”?
  - Viterbi algorithm
- Estimation (learning): how to learn the model?
  - Find the best model parameters
  - Case 1: supervised, tags are annotated
    - Maximum likelihood estimation (MLE)
  - Case 2: unsupervised, only unannotated text
    - Forward-backward algorithm
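For decoding, a minimal Viterbi sketch on the ice-cream HMM is shown here. It keeps, for every state, the best-scoring path ending in that state and extends those paths one observation at a time. As before, treating the last table row as a stop probability is an assumption, and the names are illustrative.

```python
import math

STATES = ["C", "H"]
START = {"C": 0.5, "H": 0.5}                      # p(state | START)
TRANS = {"C": {"C": 0.8, "H": 0.1},               # TRANS[prev][next]
         "H": {"C": 0.1, "H": 0.8}}
STOP = {"C": 0.1, "H": 0.1}                       # assumed p(STOP | state)
EMIT = {"C": {1: 0.7, 2: 0.2, 3: 0.1},            # EMIT[state][#cones]
        "H": {1: 0.1, 2: 0.2, 3: 0.7}}

def viterbi(obs):
    """Return (best state sequence, its joint probability with obs)."""
    # best[s] = (probability of the best path ending in s, that path)
    best = {s: (START[s] * EMIT[s][obs[0]], [s]) for s in STATES}
    for o in obs[1:]:
        new_best = {}
        for s in STATES:
            # Pick the predecessor state that maximizes the path score into s.
            prob, path = max(
                ((best[r][0] * TRANS[r][s], best[r][1]) for r in STATES),
                key=lambda item: item[0],
            )
            new_best[s] = (prob * EMIT[s][o], path + [s])
        best = new_best
    # Account for the stop transition before choosing the final state.
    prob, path = max(
        ((best[s][0] * STOP[s], best[s][1]) for s in STATES),
        key=lambda item: item[0],
    )
    return path, prob

path, prob = viterbi([3, 3, 1, 1])
print(path, prob)
```

Storing whole paths keeps the sketch short; an implementation for long sequences would store back-pointers and work with log probabilities instead.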
