
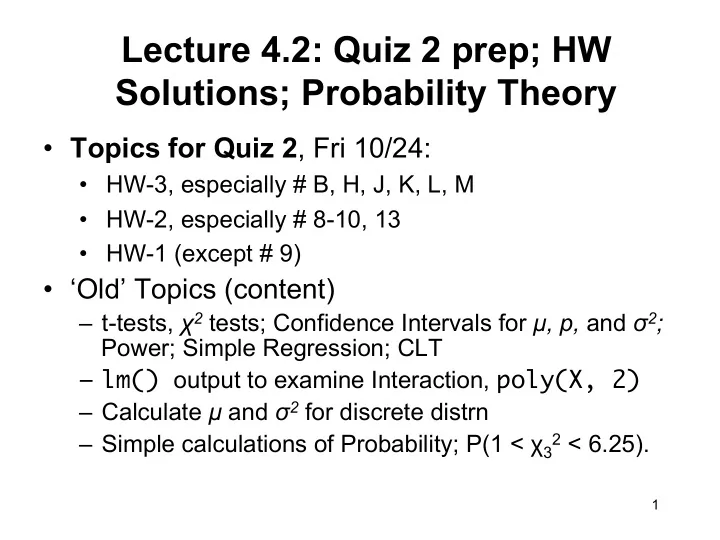

Lecture 4.2: Quiz 2 prep; HW Solutions; Probability Theory

- Topics for Quiz 2, Fri 10/24:

- HW-3, especially # B, H, J, K, L, M

- HW-2, especially # 8-10, 13

- HW-1 (except # 9)

- ‘Old’ Topics (content)

  - t-tests, $\chi^2$ tests; Confidence Intervals for $\mu$, $p$, and $\sigma^2$; Power; Simple Regression; CLT

  - lm() output to examine Interaction, poly(X, 2)

  - Calculate $\mu$ and $\sigma^2$ for a discrete distribution

  - Simple calculations of Probability, e.g. $P(1 < \chi^2_3 < 6.25)$
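For practice, the last two review items can be checked numerically. The sketch below is only an illustration, not the course's method: it uses Python's standard library rather than R, the helper names `discrete_mean_var` and `chi2_cdf_df3` are made up for this example, and the three-point distribution is a hypothetical one chosen for easy arithmetic. The chi-square CDF uses a closed form that holds only for 3 degrees of freedom.

```python
import math


def discrete_mean_var(values, probs):
    """Return (mu, sigma^2) of a discrete distribution given values and probabilities."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    mu = sum(x * p for x, p in zip(values, probs))               # mu = sum of x * P(x)
    var = sum((x - mu) ** 2 * p for x, p in zip(values, probs))  # sigma^2 = sum of (x - mu)^2 * P(x)
    return mu, var


def chi2_cdf_df3(x):
    """CDF of a chi-square variable with 3 df (closed form, valid for df = 3 only)."""
    if x <= 0:
        return 0.0
    return math.erf(math.sqrt(x / 2)) - math.sqrt(2 * x / math.pi) * math.exp(-x / 2)


# Hypothetical distribution (not from the homework): X takes 0, 1, 2
# with probabilities 0.25, 0.5, 0.25.
mu, var = discrete_mean_var([0, 1, 2], [0.25, 0.5, 0.25])

# P(1 < chi-square_3 < 6.25) as a difference of two CDF values.
prob = chi2_cdf_df3(6.25) - chi2_cdf_df3(1)

print(mu, var, round(prob, 3))
```

The probability comes out near 0.70, since 6.25 sits close to the 90th percentile of the chi-square distribution with 3 df. In R, the same interval probability would typically be obtained as a difference of two `pchisq()` calls.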