SLIDE 1

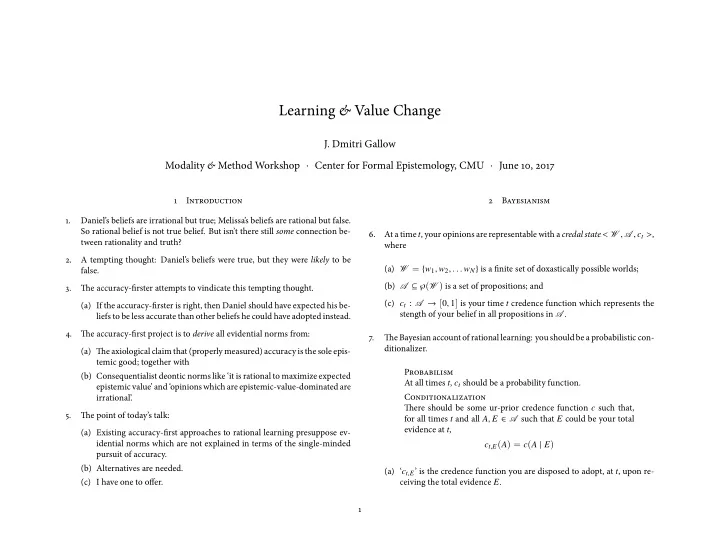

Learning & Value Change

- J. Dmitri Gallow

The point of today's talk: (a) […]idential norms which are not explained in terms of the single-minded pursuit of accuracy. (b) Alternatives are needed. (c) I have one to offer.

See, e.g., Oddie (1997), Joyce (2009), and Pettigrew (2011).

c : c(E)=1 c(¬E)=0

$\sum_{w_j \in W} \ln\left[\,\lvert (1 - \delta_{ij}) - c(w_j) \rvert\,\right]$.
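The sum above can be evaluated numerically. The following is a minimal sketch, assuming the expression scores a credence function c at world w_i, with δ_ij equal to 1 when i = j and 0 otherwise. The left-hand side of the definition did not survive on the slide, so the function name `log_score` and the list representation of c are illustrative assumptions, not the author's notation.

```python
import math

def log_score(c, i):
    """Sum over worlds w_j of ln|(1 - delta_ij) - c(w_j)|.

    c is a list of credences, one per world (hypothetical representation);
    i is the index of the world at which the score is evaluated.
    """
    total = 0.0
    for j, cj in enumerate(c):
        delta = 1.0 if i == j else 0.0
        gap = abs((1.0 - delta) - cj)
        if gap == 0.0:
            # ln(0) is undefined; treat the score as negative infinity.
            return float("-inf")
        total += math.log(gap)
    return total

# Credences over three worlds, scored at the first world.
print(log_score([0.5, 0.25, 0.25], 0))
```

For j = i the term reduces to ln c(w_i), and for every other world it reduces to ln(1 − c(w_j)), so the sum is largest (zero) when c puts credence 1 on w_i and 0 elsewhere.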

[…] $\sum_{w\,[\ldots]\,E} p(w)\,\kappa_w$ is just a constant, the above will be maximized […]
