Bayes Nets: Learning Parameters and Structure

Machine Learning 10-701 Anna Goldenberg

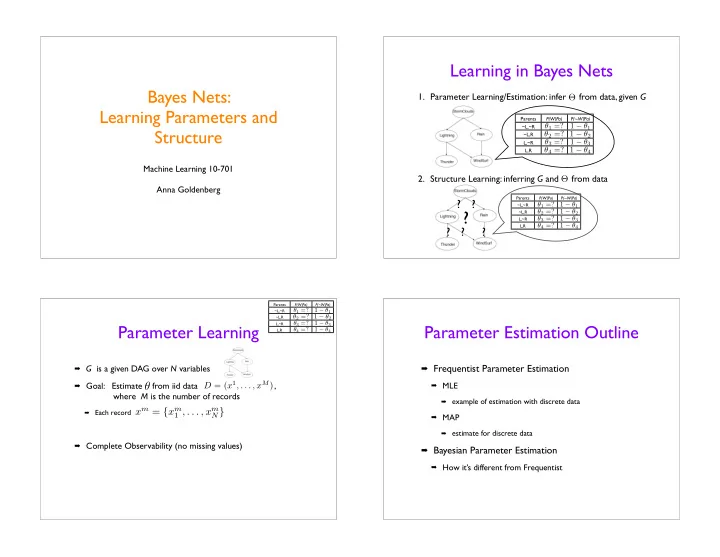

- 1. Parameter Learning/Estimation: infer from data, given G

- 2. Structure Learning: inferring G and from data

Learning in Bayes Nets

Θ

Parents P(W|Pa) P(~W|Pa) ~L,~R

θ1 =? 1 − θ1

~L,R

θ2 =? 1 − θ2

L,~R

θ3 =? 1 − θ3

L,R

θ4 =? 1 − θ4

Θ

? ? ? ? ?

?

Parents P(W|Pa) P(~W|Pa) ~L,~R

θ1 =? 1 − θ1

~L,R

θ2 =? 1 − θ2

L,~R

θ3 =? 1 − θ3

L,R

θ4 =? 1 − θ4

Parameter Learning

G is a given DAG over N variables Goal: Estimate from iid data ,

where M is the number of records

Each record

Complete Observability (no missing values)

θ

D = (x1, . . . , xM)

xm = {xm

1 , . . . , xm N}

Parents P(W|Pa) P(~W|Pa) ~L,~R

θ1 =? 1 − θ1

~L,R

θ2 =? 1 − θ2

L,~R

θ3 =? 1 − θ3

L,R

θ4 =? 1 − θ4

Parameter Estimation Outline

Frequentist Parameter Estimation

MLE

example of estimation with discrete data

MAP

estimate for discrete data

Bayesian Parameter Estimation

How it’s different from Frequentist