1

Informed Search

Material in part from http://www.cs.cmu.edu/~awm/tutorials

- Chap. 4

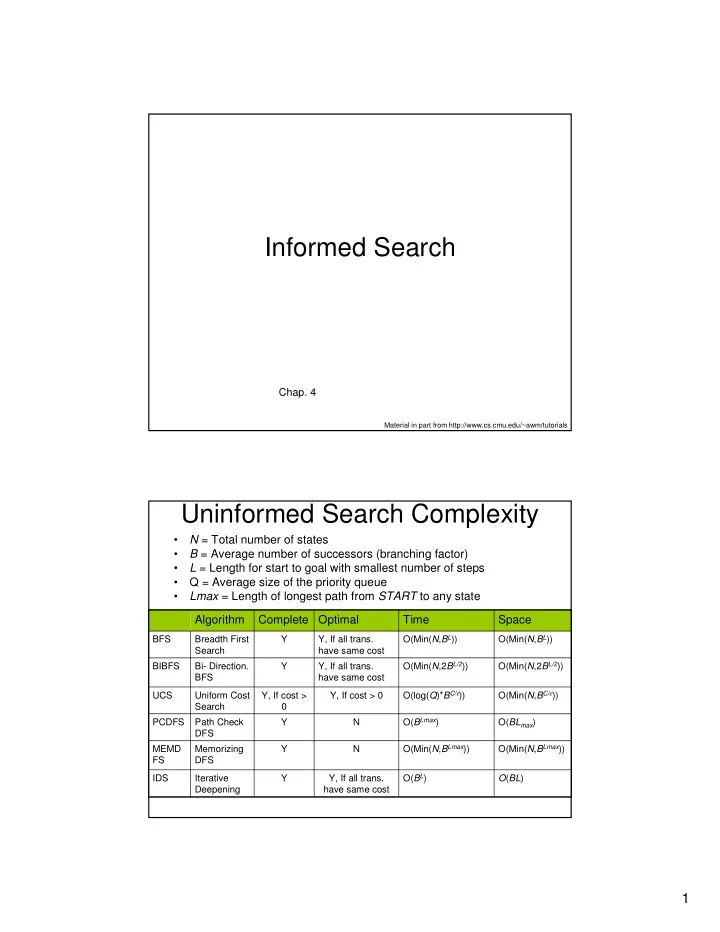

Uninformed Search Complexity

- N = Total number of states

- B = Average number of successors (branching factor)

- L = Length for start to goal with smallest number of steps

- Q = Average size of the priority queue

- Lmax = Length of longest path from START to any state

O(Min(N,2BL/2)) O(Min(N,2BL/2)) Y, If all trans. have same cost Y Bi- Direction. BFS BIBFS O(BL) O(BL) Y, If all trans. have same cost Y Iterative Deepening IDS O(Min(N,BLmax)) O(Min(N,BLmax)) N Y Memorizing DFS MEMD FS O(BLmax) O(BLmax) N Y Path Check DFS PCDFS O(Min(N,BC/ε)) O(log(Q)*BC/ε)) Y, If cost > 0 Y, If cost > Uniform Cost Search UCS O(Min(N,BL)) O(Min(N,BL)) Y, If all trans. have same cost Y Breadth First Search BFS

Space Time Optimal Complete Algorithm