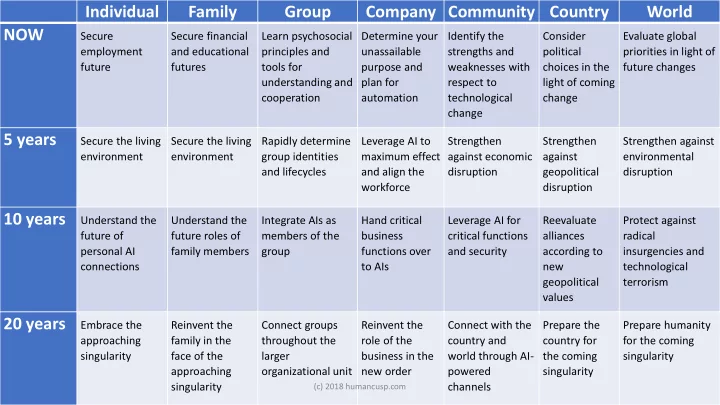

| | Individual | Family | Group | Company | Community | Country | World |
|---|---|---|---|---|---|---|---|
| NOW | Secure employment future | Secure financial and educational futures | Learn psychosocial principles and tools for understanding and cooperation | Determine your unassailable purpose and plan for automation | Identify the strengths and weaknesses with respect to technological change | Consider political choices in the light of coming change | Evaluate global priorities in light of future changes |
| 5 years | Secure the living environment | Secure the living environment | Rapidly determine group identities and lifecycles | Leverage AI to maximum effect and align the workforce | Strengthen against economic disruption | Strengthen against geopolitical disruption | Strengthen against environmental disruption |
| 10 years | Understand the future of personal AI connections | Understand the future roles of family members | Integrate AIs as members of the group | Hand critical business functions over to AIs | Leverage AI for critical functions and security | Reevaluate alliances according to new geopolitical values | Protect against radical insurgencies and technological terrorism |
| 20 years | Embrace the approaching singularity | Reinvent the family in the face of the approaching singularity | Connect groups throughout the larger organizational unit | Reinvent the role of the business in the new order | Connect with the country and world through AI-powered channels | Prepare the country for the coming singularity | Prepare humanity for the coming singularity |

(c) 2018 humancusp.com