SLIDE 1

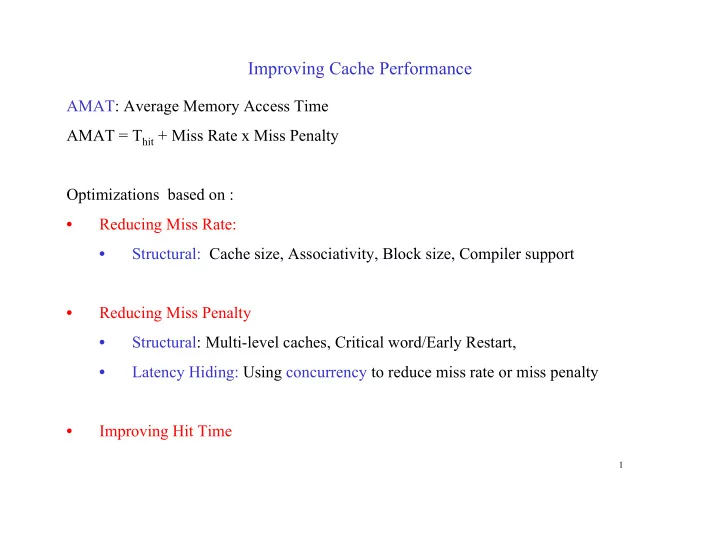

Improving Cache Performance

AMAT (Average Memory Access Time):

$$\text{AMAT} = T_{\text{hit}} + \text{Miss Rate} \times \text{Miss Penalty}$$

Optimizations are based on:

- Reducing Miss Rate:

  - Structural: Cache size, Associativity, Block size, Compiler support

- Reducing Miss Penalty:

  - Structural: Multi-level caches, Critical word first/Early restart

  - Latency Hiding: Using concurrency to reduce miss rate or miss penalty

- Improving Hit Time
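Each group targets one term of the AMAT formula. As a rough illustration, the Python sketch below evaluates AMAT for a single cache and for a two-level hierarchy, where the L1 miss penalty is itself the AMAT of the L2. The function names `amat` and `amat_two_level`, and all cycle counts and miss rates, are hypothetical values chosen for illustration, not figures from this slide.

```python
def amat(hit_time: float, miss_rate: float, miss_penalty: float) -> float:
    """AMAT = hit_time + miss_rate * miss_penalty (all times in one unit, e.g. cycles)."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be a fraction between 0 and 1")
    return hit_time + miss_rate * miss_penalty


def amat_two_level(l1_hit: float, l1_miss_rate: float,
                   l2_hit: float, l2_local_miss_rate: float,
                   memory_penalty: float) -> float:
    """AMAT with an L2 cache: the L1 miss penalty becomes the AMAT of the L2.

    l2_local_miss_rate is the fraction of L1 misses that also miss in L2.
    """
    l1_miss_penalty = amat(l2_hit, l2_local_miss_rate, memory_penalty)
    return amat(l1_hit, l1_miss_rate, l1_miss_penalty)


# Hypothetical parameters in clock cycles (assumed, not taken from the slide).
single = amat(hit_time=1, miss_rate=0.05, miss_penalty=100)
two_level = amat_two_level(l1_hit=1, l1_miss_rate=0.05,
                           l2_hit=10, l2_local_miss_rate=0.2,
                           memory_penalty=100)
print(f"L1 only: {single:.2f} cycles")      # L1 only: 6.00 cycles
print(f"L1 + L2: {two_level:.2f} cycles")   # L1 + L2: 2.50 cycles
```

With these assumed numbers, adding an L2 lowers AMAT from 6.0 to 2.5 cycles, because most L1 misses are served by the 10-cycle L2 instead of the 100-cycle main memory.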
