SLIDE 12 Challenges in training Deep Neural Networks

1

Actual evaluation function ≠ Surrogate loss function (most often)

Classification: F measure ≠ MLE/Cross-entropy/Hinge Loss

Regression: Mean Absolute Error ≠ Sum of Squares Error Loss

Sequence prediction: BLEU score ≠ Cross-entropy
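
To make the mismatch concrete, here is a minimal sketch with made-up numbers (the labels, the two sets of predicted probabilities, and the helper names `cross_entropy` and `f1_score` are illustrative assumptions, not from the slides). One model gets the lower cross-entropy, the other the higher F measure.

```python
import math

def cross_entropy(y_true, p_pred, eps=1e-12):
    """Mean binary cross-entropy (the surrogate loss that is optimized)."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # guard against log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)

def f1_score(y_true, p_pred, threshold=0.5):
    """F1 on thresholded predictions (the metric that is actually reported)."""
    y_hat = [1 if p >= threshold else 0 for p in p_pred]
    tp = sum(1 for y, h in zip(y_true, y_hat) if y == 1 and h == 1)
    fp = sum(1 for y, h in zip(y_true, y_hat) if y == 0 and h == 1)
    fn = sum(1 for y, h in zip(y_true, y_hat) if y == 1 and h == 0)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

# Hypothetical toy data: four examples, two positive and two negative.
y_true = [1, 1, 0, 0]
p_a = [0.90, 0.45, 0.10, 0.10]  # confident, but misses one positive at 0.5
p_b = [0.55, 0.55, 0.45, 0.45]  # barely on the right side everywhere

print("model A: CE =", cross_entropy(y_true, p_a), "F1 =", f1_score(y_true, p_a))
print("model B: CE =", cross_entropy(y_true, p_b), "F1 =", f1_score(y_true, p_b))
```

Model A wins on cross-entropy and model B wins on F1, so minimizing the surrogate does not automatically maximize the evaluation function.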

2

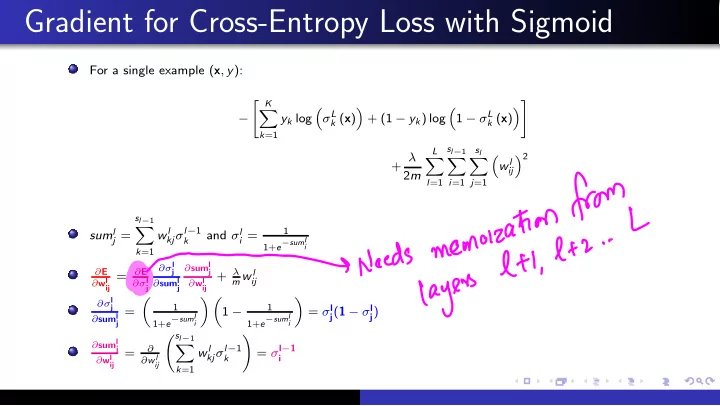

Appropriately exploiting decomposability of surrogate loss functions

Stochasticity vs. redundancy, Mini-batch, etc.
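
Decomposability means the loss is a sum of per-example terms, so the gradient averaged over a small random batch estimates the full gradient. A minimal sketch of mini-batch SGD on a toy linear regression follows; the function name `minibatch_sgd`, the data, and all hyperparameters are assumptions chosen for illustration.

```python
import random

def minibatch_sgd(xs, ys, lr=0.1, batch_size=8, epochs=200, seed=0):
    """Fit y ~ w*x + b by mini-batch SGD on the per-example loss 0.5*err**2."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # fresh random batches every epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            grad_w = grad_b = 0.0
            for i in batch:
                err = (w * xs[i] + b) - ys[i]
                grad_w += err * xs[i]  # per-example gradient w.r.t. w
                grad_b += err          # per-example gradient w.r.t. b
            # The batch average stands in for the average over all examples.
            w -= lr * grad_w / len(batch)
            b -= lr * grad_b / len(batch)
    return w, b

# Hypothetical noise-free data generated from y = 3x + 1.
xs = [-1.0 + 2.0 * i / 63 for i in range(64)]
ys = [3.0 * x + 1.0 for x in xs]
w, b = minibatch_sgd(xs, ys)
print(w, b)
```

Each update touches only 8 of the 64 examples, which is the trade-off the slide names: redundant examples make a small batch nearly as informative as the full set, at a fraction of the cost.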

3

Local minima, extremely fluctuating curvature², large gradients, ill-conditioning

Momentum (gradient accumulator), Adaptive gradient, Clipping
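
A minimal sketch of two of these remedies, assuming the classical momentum update v ← βv − η·g, θ ← θ + v and clipping by gradient norm. The function names `clip_by_norm` and `momentum_step` and the default values are illustrative, not taken from the slides.

```python
import math

def clip_by_norm(grad, max_norm):
    """Rescale grad so that its Euclidean norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= max_norm:
        return list(grad)
    scale = max_norm / norm
    return [g * scale for g in grad]

def momentum_step(theta, velocity, grad, lr=0.1, beta=0.9, max_norm=None):
    """One SGD-with-momentum update, optionally clipping the gradient first."""
    if max_norm is not None:
        grad = clip_by_norm(grad, max_norm)
    # The velocity accumulates an exponentially decaying sum of past gradients.
    new_velocity = [beta * v - lr * g for v, g in zip(velocity, grad)]
    new_theta = [t + v for t, v in zip(theta, new_velocity)]
    return new_theta, new_velocity

# Two steps with the same gradient: the second step is larger than the first
# because the velocity has accumulated.
theta, velocity = [1.0], [0.0]
theta, velocity = momentum_step(theta, velocity, grad=[2.0])
theta, velocity = momentum_step(theta, velocity, grad=[2.0])
print(theta, velocity)

# A large gradient is shrunk to the chosen norm but keeps its direction.
print(clip_by_norm([3.0, 4.0], max_norm=1.0))
```

Clipping caps the step size when a large gradient appears, while momentum smooths the direction across steps when curvature fluctuates.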

4

Overfitting ⇒ Need for generalization

Universal Approximation Properties and Depth (Section 6.4):

With a single hidden layer of a sufficient size, one can represent any smooth function to any desired accuracy.

The greater the desired accuracy, the more hidden units are required.

L1 (L2) regularization, Early stopping, dropout, etc.
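
Two of the listed remedies can be sketched in a few lines. This assumes an L2 penalty of the form (λ/2)·‖w‖², whose gradient is λ·w, and a patience-based early stopping rule; the function names, the patience value, and the validation losses are hypothetical.

```python
def l2_penalized_gradient(grad, weights, weight_decay):
    """Gradient of loss + (weight_decay / 2) * ||w||^2: add weight_decay * w."""
    return [g + weight_decay * w for g, w in zip(grad, weights)]

def early_stopping_epoch(val_losses, patience=3):
    """Return (best_epoch, stopped_epoch) for a sequence of validation losses.

    Training stops once the validation loss has failed to improve for
    `patience` consecutive epochs; the best epoch's weights would be kept.
    """
    best_loss = float("inf")
    best_epoch = -1
    stopped_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        stopped_epoch = epoch
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                break
    return best_epoch, stopped_epoch

# Hypothetical validation curve: improves, then starts to overfit.
val_losses = [1.0, 0.8, 0.7, 0.72, 0.75, 0.9, 0.6]
print(early_stopping_epoch(val_losses, patience=3))
print(l2_penalized_gradient([0.5, -1.0], [2.0, -4.0], weight_decay=0.1))
```

With a patience of 3, training halts at epoch 5 and keeps epoch 2, so the later value 0.6 is never seen; a larger patience trades extra compute for the chance to catch such late improvements.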

² see demo