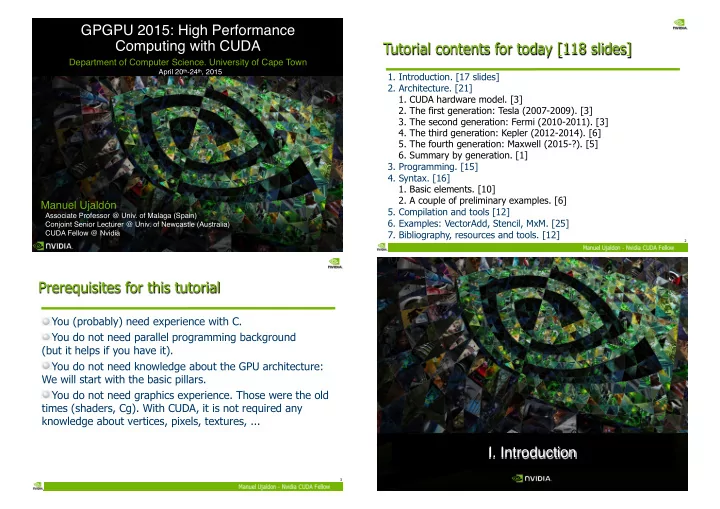

GPGPU 2015: High Performance Computing with CUDA

Department of Computer Science, University of Cape Town

April 20th-24th, 2015

Manuel Ujaldón

Associate Professor @ Univ. of Malaga (Spain)

Conjoint Senior Lecturer @ Univ. of Newcastle (Australia)

CUDA Fellow @ Nvidia

Tutorial contents for today [118 slides]

1. Introduction. [17 slides]
2. Architecture. [21]
    1. CUDA hardware model. [3]
    2. The first generation: Tesla (2007-2009). [3]
    3. The second generation: Fermi (2010-2011). [3]
    4. The third generation: Kepler (2012-2014). [6]
    5. The fourth generation: Maxwell (2015-?). [5]
    6. Summary by generation. [1]
3. Programming. [15]
4. Syntax. [16]
    1. Basic elements. [10]
    2. A couple of preliminary examples. [6]
5. Compilation and tools. [12]
6. Examples: VectorAdd, Stencil, MxM. [25]
7. Bibliography, resources and tools. [12]


Prerequisites for this tutorial

- You (probably) need experience with C.
- You do not need a parallel programming background (but it helps if you have it).
- You do not need knowledge of the GPU architecture: we will start with the basic pillars.
- You do not need graphics experience. Those were the old times (shaders, Cg). With CUDA, no knowledge of vertices, pixels, textures, ... is required.


- I. Introduction