Generation of non-uniform random numbers General method Function ran - PDF document

Generation of non-uniform random numbers General method Function ran f () Theorem.- Let x be a r.v. with probability distribution func- tion F x ( x ) u = F x ( x ) is a r.v. uniformly distributed in the interval (0 , 1) ( U (0 ,

Generation of non-uniform random numbers General method Function ran f () Theorem.- Let ˆ x be a r.v. with probability distribution func- tion F ˆ x ( x ) u = F ˆ x ( ˆ x ) is a r.v. uniformly distributed in the interval (0 , 1) ˆ ( ˆ U (0 , 1) variable). u is a ˆ x = F − 1 If ˆ U (0 , 1) r.v., then the r.v. ˆ x ( ˆ u ) has F ˆ x ( x ) as ˆ probability distribution function Exponential distribution, i.e. a exp( − ax ) if x ≥ 0 f ˆ x ( x ) = 0 if x < 0 The distribution function is: � F ˆ x ( x ) = −∞ xf ˆ x ( x ) dx = 1 − exp( − ax ) x ( u ) = − 1 a log(1 − u ) ≡ − 1 x = F − 1 a log( u ) ˆ function ran_e(a) ran_e=-log(ran_u())/a return end 1

Cauchy distribution: x ( x ) = 1 1 f ˆ 1 + x 2 π 1 1 + x 2 = 1 1 2 + 1 � x � x F ˆ x ( x ) = −∞ f ˆ x ( x ) dx = π arctan( x ) −∞ π x = tan( π ( u − 1 2)) Example: r ( r ) = re − 1 2 r 2 , f ˆ 0 ≤ r ≤ ∞ � r r ( r ) dr = 1 − e − 1 2 r 2 F ˆ r ( r ) = −∞ f ˆ � � r = − 2 log(1 − u ) ≡ − 2 log( u ) Bernouilli distribution. The distribution probability function is: 0 x < 0 F ˆ x ( x ) = 1 − p 0 ≤ x < 1 1 x ≥ 1 The inverse function takes only two values: 0 u < 1 − p F − 1 x ( u ) = ˆ 1 u ≥ 1 − p 2

function ran_b(p) if (ran_u().lt.1-p) then ran_b=0 else ran_b=1 endif end An equivalent program is: function ran_b(p) if (ran_u().lt.p) then ran_b=1 else ran_b=0 endif end Generation of a vector ( x 1 , x 2 , ...., x n ) of random variables with a given joint probability density function f ˆ x n ( x 1 , ..., x n ) x 1 ,..., ˆ 3

If ( u 1 , ..., u n ) is a vector of n ˆ U (0 , 1) independent random vari- ables, ( x 1 , ..., x n ) is the solution of: F x 1 ( x 1 ) = u 1 F x 2 ( x 2 | x 1 ) = u 2 F x n ( x n | x 1 , ..., x n − 1 ) = u n There are n ! ways of ordering the n variables x 1 , ..., x n Not very useful in practice Numerical Inversion Divide [0 , 1] in M subintervals [ i M , i +1 M ] i =0 , 1 ,...,M − 1 Compute and store x i = F − 1 ( i/M ) for i = 0 , ..., M . Substitute F ˆ x ( x ) by its piecewise linear approximation be- tween points [ i M , i +1 M ]. Generate u according to ˆ U (0 , 1) x = F − 1 x ( u ) is approximated by: ˆ x = ( Mu − i ) x i +1 + ( i + 1 − Mu ) x i i is such that u ∈ [ i M , i +1 M ), or i = [ Mu ], (integer part of Mu ). 4

Change of variables y = y ( x ) y ( y ) = f ˆ x ( x ) f ˆ | dy dx | Linear transformation y = ax + b . 1 x ( y − b f ˆ y ( y ) = | a | f ˆ ) a x is ˆ y is ˆ U ( b, a + b ) for a > 0 or ˆ If ˆ U (0 , 1), then ˆ U ( a + b, b ) for a < 0 x is a Gaussian ˆ G (0 , 1) r.v. then y is a ˆ If ˆ G ( b, a ) r.v. The change y = x a leads to: 1 1 a − 1 f ˆ y ( y ) = | a | y valid for 0 < y < 1 if a > 0 or for 1 < y < ∞ if a < 0. x ( x ) = (1 + α ) x α f ˆ u a with a = 1 / ( α +1), being ˆ u a ˆ x = ˆ U (0 , 1) random variable. ˆ Gaussian distribucion Gaussian random variable of mean 0 and variance 1, ˆ z x = σ ˆ z + µ ˆ 5

1 2 πe − z 2 √ f ˆ z ( z ) = 2 1 2 dz = 1 2(1+erf( z � z � z 2 πe − z 2 √ √ F ˆ z ( z ) = −∞ f ˆ z ( z ) dz = 2)) = u −∞ √ 2erf − 1 (2 u − 1) z = Implemented in NAG function ran_g() ran_g = g05cef(ran_u(),ifail) return end Aproximations to inverse error function c 0 + c 1 t + c 2 t 2 z ≈ t − 1 + d 1 t + d 2 t 2 + d 3 t 3 � t = − 2 log(1 − u ) c 0 =2.515517, c 1 =0.802853, c 2 =0.010328, d 1=1.432788, d 2 =0.189269 y d 3 = 0.001308 error less than 4 . 5 × 10 − 4 if 0 . 5 ≤ u ≤ 1 . 0. 6

function ran_g() data c0,c1,c2/2.515517,0.802853,0.010328/ data d1,d2,d3/1.432788,0.189269,0.001308/ u=ran_u() if (u.gt.0.5) then t=sqrt(-2.0*log(1.-u)) ran_g=t-(c0+t*(c1+c2*t))/(1.0+t*(d1+t*(d2+t*d3))) else t=sqrt(-2.0*log(u)) ran_g=-t+(c0+t*(c1+c2*t))/(1.0+t*(d1+t*(d2+t*d3))) endif return end Box-Muller-Wiener algorithm (BMW) Two Gaussian independent random variables ˆ x 1 , ˆ x 2 (mean zero and variance one). x 2 ( x 2 ) = 1 2 πe − ( x 2 1 + x 2 2 ) / 2 f ˆ x 2 ( x 1 , x 2 ) = f ˆ x 1 ( x 1 ) f ˆ x 1 , ˆ 7

Polar coordinates: r cos( ˆ x 1 = ˆ θ ) ˆ r sin( ˆ x 2 = ˆ θ ) (1) ˆ r and ˆ Joint pdf of ˆ θ is : x 1 , x 2 f ˆ θ ( r, θ ) = J f ˆ x 2 ( x 1 , x 2 ) x 1 , ˆ r , ˆ r, θ θ ( r, θ ) = 1 2 πre − r 2 f ˆ 2 r , ˆ f ˆ θ ( r, θ ) = f ˆ r ( r ) f ˆ θ ( θ ) r , ˆ with r ( r ) = re − r 2 f ˆ 2 and θ ( θ ) = 1 f ˆ 2 π r y ˆ θ independent random variables. ˆ ˆ θ is a random variable uniformly distributed in the interval [0 , 2 π ] ˆ θ = 2 π ˆ v 8

u is a ˆ U (0 , 1) variable. We saw that: ˆ � r = − 2 log( ˆ u ) ˆ Hence we arrive at: � x 1 = − 2 log( ˆ u ) cos(2 π ˆ v ) ˆ � x 2 = − 2 log( ˆ u ) sin(2 π ˆ v ) ˆ Box-Muller-Wiener algorithm. Exact, produces 2 independent Gaussian random variables starting from 2 independent uniform random variables Slow Rejection methods improve somewhat (20%), but not in vector computers. Use numerical inversion. Central limit theorem: � � 12 u k − N � N � z = ˆ � k =1 ˆ � � N 2 u k are ˆ where ˆ U (0 , 1) variables, tends to a Gaussian in the limit 9

N → ∞ . The algorithm is used typically with N = 12 Joint Gaussian variables. Generate d -dimensional real Gaussian field h ( � r ) � h ( � r ) � = 0 r ′ ) � = C ( � r ′ ) = C ( � r ′ ) � h ( � r ) h ( � r,� r − � Numerically r ) = 1 C ( s ) ( � r ′ h ( s ) ( � r ′ ) h ( s ) ( � r ′ ) r + � � N � Perform an ensemble average over M (preferably a large num- ber) realizations: r ) � ≡ 1 M r ) ≡ � C ( s ) ( � s =1 C ( s ) ( � C ( � r ) � M Constructive sequential algorithm (Schmidt’s ortonormaliza- tion process). Generate a set { u j } , j = 1 , . . . , N , of independent Gaussian variables of zero mean and variance one. h 1 = β 11 u 1 10

β 11 = ( C 1 , 1 ) 1 / 2 h 2 = β 21 u 1 + β 22 u 2 � h 2 2 � = C 2 , 2 � h 1 h 2 � = C 1 , 2 In general, we write j h j = i =1 β ji u i � � h j h i � = C i,j , i = 1 , . . . , j Useful when the number N of variables is small. For large N , use Fourier transform. Fourier Filtering Method. r� S ( � k ) = L − d/ 2 � r e i � k C ( � r ) � satisfies exactly: S ( � k ) = L − d | ˆ h ( s ) ( � k ) | 2 To generate realizations h ( s ) ( � r ), s = 1 , . . . , M of the field h ( � r ), the FFM proceeds in the following way: r ), compute S ( � (i) Given C ( � k ) (ii) Generate a set of independent Gaussian random variables 11

u ( s ) ( � r ) of mean zero and variance one. u ( s ) ( � k ) of u ( s ) ( � (iii) Compute the Fourier transform ˆ r ). (iv) Generate the Fourier transform of the field as: h ( s ) ( � ˆ k ) = L d/ 2 S ( � u ( s ) ( � k ) 1 / 2 ˆ k ) (v) Compute the required field h ( s ) ( � r ) as the inverse Fourier transform of ˆ h ( s ) ( � k ). Step (i) needs to be done only once for each function C ( � r ) Step (iii) can be avoided by generating directly the random field u ( s ) ( � k ) in Fourier space Respect the symmetries of the field u ( s ) ( � k ), namely: u ( s ) ( − � k ) = [ u ( s ) ( � k )] ∗ . Power-law correlations: r ) ∼ r − γ for r → ∞ C ( � S ( k ) ∼ k γ − d for k → 0. To be precise ( d = 1) 12

i − γ if 0 < i ≤ L/ 2 − 1 ( L − i ) − γ C i = if L/ 2 ≤ i ≤ L − 1 1 if i = 0 , S min ( k ) < 0 !! minimal substraction procedure . Substracting the minimum value S 0 ( k ) = S ( k ) − S min , S 0 ( k ) is used instead of S ( k ) C (0) is no longer equal to 1 . C (0 , L = 2 21 ) ∼ 1 . 01 C (0 , L = 2 6 ) ∼ 1 . 17 Another possibility: C j = (1 + j 2 ) − γ/ 2 if − L/ 2 + 1 ≤ j ≤ L/ 2 β 2 π 1 / 2 k S ( k ) = K β ( k ) Γ( β + 1) 2 K β ( k ) is the modified Bessel function of order β = ( γ − 1) / 2. 13

Variable composition x 1 , ... ˆ x n independent random variables with distribution func- ˆ tion F ( x ). z = max( ˆ x 1 , . . . , ˆ x n ) ˆ z ( z ) = F ( z ) n F ˆ x i is ˆ If ˆ U (0 , 1), F ( z ) = z , then: z ( z ) = z n , F ˆ z ∈ [0 , 1] f ( z ) = nz n − 1 14

In many cases: f ˆ x ( x ) = i α i f i ( x ) � i α i = 1 � We can interpret α i as the probabilities of a discrete random variable ˆ z taking integer values: f ˆ z ( z ) = i α i δ ( z − i ) � (a) choose an integer value i according to the r.v. ˆ z (b) choose a value of the r.v. defined by f i ( x ). f ˆ x ( x ) = i Prob( ˆ z = i ) f ( x | ˆ z = i ) = i α i f i ( x ) � � Example x ( x ) = 5 6(1 + x 4 ) , f ˆ 0 ≤ x ≤ 1 Can be written as: x ( x ) = α 1 f 1 ( x ) + α 2 f 2 ( x ) = 5 61 + 1 6(5 x 4 ) f ˆ f 1 ( x ) is a ˆ U (0 , 1) r.v. f 2 ( x ) can be sampled by x = u 1 / 5 (with u a ˆ U (0 , 1) variable). 15

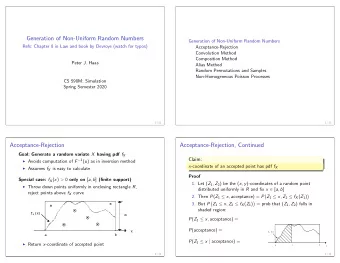

if (ran_u().gt.1./6.) then x=ran_u() else x=ran_u()**(1./5.) endif Equivalent algorithm: x=ran_u() if (ran_u().lt.1./6.) x=x**(1./5.) Rejection Methods One proposes a value for the random variable ˆ x which is ac- cepted (otherwise rejected) with a given probability. Example: x ( x ) = C exp( − x 2 f ˆ 2 ) − 1 ≤ x ≤ 1 No need to know the normalization constant C It is more probable to obtain values of ˆ x for which f ˆ x ( x ) is larger. 16

Recommend

More recommend

Explore More Topics

Stay informed with curated content and fresh updates.