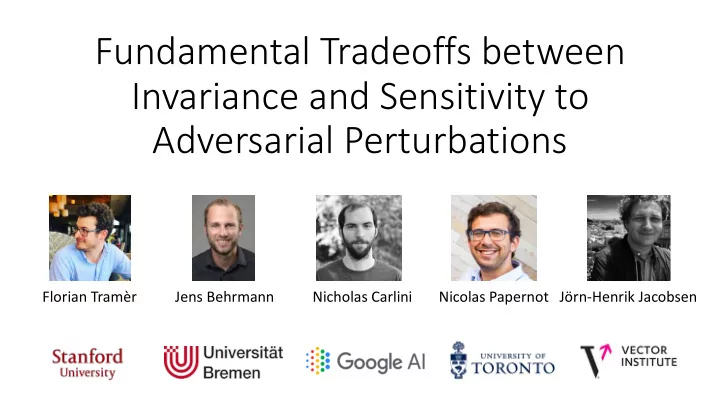

Fundamental Tradeoffs between Invariance and Sensitivity to Adversarial Perturbations

Florian Tramèr Jens Behrmann Nicholas Carlini Nicolas Papernot Jörn-Henrik Jacobsen


What are Adversarial Examples? Any input to an ML model that is


[Figure: three kinds of inputs, each classified as "Guacamole" with 99% confidence: “small” perturbations, “large” perturbations, and nonsensical inputs.]

Adversarial Examples

Lp bounded (excessive sensitivity)

“my classifier has 97% accuracy for perturbations of L2 norm bounded by 𝜁 = 2”
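A claim of this form can be made concrete on a toy model. The sketch below assumes a binary linear classifier (not the models discussed in the talk), for which the prediction cannot change inside an L2 ball of radius |w·x + b| / ‖w‖₂. The helper names `certified_radius` and `robust_accuracy`, and the data, are hypothetical.

```python
import math

def predict(w, b, x):
    """Label in {0, 1} from the linear score w.x + b."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if score >= 0 else 0

def certified_radius(w, b, x):
    """L2 distance from x to the decision boundary of the linear model."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return abs(score) / math.sqrt(sum(wi * wi for wi in w))

def robust_accuracy(w, b, points, labels, eps):
    """Fraction of points that are correct AND certified for radius eps."""
    ok = sum(
        1 for x, y in zip(points, labels)
        if predict(w, b, x) == y and certified_radius(w, b, x) > eps
    )
    return ok / len(points)

# Hypothetical toy data: the decision boundary is the line x0 = 0.
w, b = [1.0, 0.0], 0.0
points = [[3.0, 1.0], [2.5, -1.0], [-4.0, 0.0], [-1.0, 2.0]]
labels = [1, 1, 0, 0]

# Three of the four points lie more than 2.0 away from the boundary.
print(robust_accuracy(w, b, points, labels, eps=2.0))  # 0.75
```

For deep networks the certified radius is not available in closed form, so this only illustrates what the quoted accuracy number measures.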

Adversarial Examples

excessive sensitivity, excessive invariance

Model with 88% certified robust accuracy


Hermit-crab / Guacamole (distance between the two inputs ≤ 22)

(we don’t yet know how to do this on ImageNet)

Hermit-crab / Hermit-crab (distance between the two inputs ≤ 22)

Model certifies that it labels both inputs the same
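The failure mode here can be sketched in a few lines. The example assumes a hypothetical over-robust linear model and a hypothetical ground-truth `oracle`; neither comes from the talk. It flags a pair of inputs for which the model certifies the same label although the oracle assigns different ones.

```python
import math

def l2_distance(a, b):
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

def oracle(x):
    """Hypothetical ground truth: the class flips at x0 = 0."""
    return 1 if x[0] >= 0 else 0

def model_score(x):
    """Hypothetical over-robust linear model: boundary at x0 = -3."""
    return x[0] + 3.0

def model_predict(x):
    return 1 if model_score(x) >= 0 else 0

def model_certified_radius(x):
    # The weight vector is [1, 0] with unit L2 norm, so the radius is |score|.
    return abs(model_score(x))

def is_invariance_example(x, x_prime):
    """True if the model certifies the same label for x and x_prime
    although the oracle assigns them different labels."""
    certified_same = l2_distance(x, x_prime) < model_certified_radius(x)
    return certified_same and oracle(x) != oracle(x_prime)

x = [1.0, 0.0]
x_prime = [-1.0, 0.0]  # crosses the oracle boundary, stays inside the certified ball
print(is_invariance_example(x, x_prime))  # True
```

The certificate is correct about the model and still guarantees an error: the larger the certified radius, the more such pairs it covers.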

[Figure: generating an invariance adversarial example. Labels: input, input from, semantics-preserving transformation, diff, a few tricks, result.]

[Figure: bar chart. Values: 21%, 0%, 37%, 88%. Settings: no attack, ℓ∞ ≤ 0.3, ℓ∞ ≤ 0.4, ℓ∞ ≤ 0.4 (manual).]

Open problem: better automated attacks

Most robust model provably gets all invariance examples wrong!

More robust models