Fundamental Equations

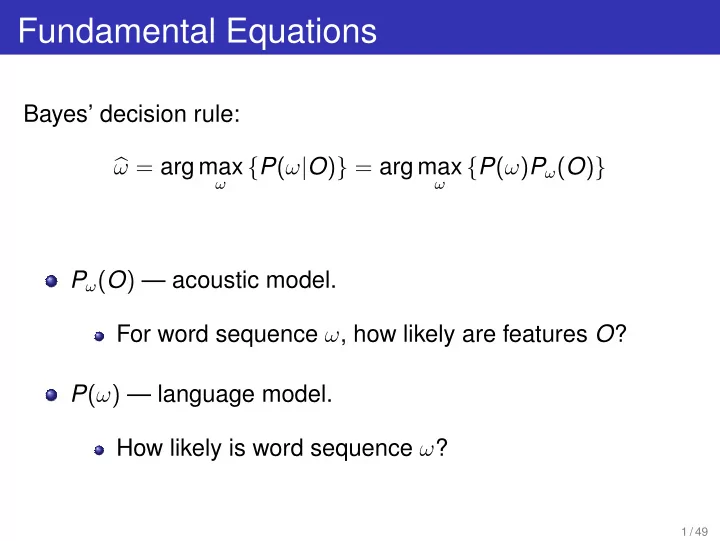

Bayes’ decision rule:

$$\hat{\omega} = \arg\max_{\omega} \{P(\omega \mid O)\} = \arg\max_{\omega} \{P(\omega)\, P_{\omega}(O)\}$$

- $P_{\omega}(O)$: acoustic model. For word sequence $\omega$, how likely are features $O$?
- $P(\omega)$: language model. How likely is word sequence $\omega$?
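To make the decision rule concrete, here is a minimal sketch in the log domain. The two hypotheses and their scores are hypothetical toy values, not taken from the slides; `decode`, `log_lm`, and `log_am` are illustrative names only.

```python
import math

# Hypothetical toy scores (assumptions for illustration only).
log_lm = {"the dog ate": math.log(0.6), "the dig eight": math.log(0.4)}  # log P(w)
log_am = {"the dog ate": -12.0, "the dig eight": -11.8}                  # log P_w(O)

def decode(log_lm, log_am):
    """Return the word sequence maximizing log P(w) + log P_w(O)."""
    return max(log_lm, key=lambda w: log_lm[w] + log_am[w])

best = decode(log_lm, log_am)
print(best)
```

In this toy case the acoustic model alone slightly prefers the second hypothesis, and the language model prior tips the decision to the first.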


Watson Group IBM T.J. Watson Research Center Yorktown Heights, New York, USA {picheny,bhuvana,stanchen,nussbaum}@us.ibm.com



Let $O' = f(O)$ (assume $f$ can be inverted).

Then $P(O')$ is:

$$P(O') = \left|\frac{dO}{dO'}\right| P(O) = \left|\frac{dO}{dO'}\right| P\big(f^{-1}(O')\big)$$


ω,Φ P(O|ω, θ′).


[Figure: word hypotheses THE, THIS, THUD, DIG, DOG, DOG, DOGGY, ATE, EIGHT, MAY, MY, MAY]


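Let $O_i^j := O_i \ldots O_j \sim \mathcal{N}(\mu, \Sigma)$.

For $O_1^T$, decide between

- $O_1^T \in \mathcal{N}(\mu, \Sigma)$, and
- $O_1^t \in \mathcal{N}(\mu_1, \Sigma_1)$, $O_{t+1}^T \in \mathcal{N}(\mu_2, \Sigma_2)$

$$\mathrm{BIC}(\mu, \Sigma, O_1^T) - \mathrm{BIC}(\mu_1, \Sigma_1, O_1^t) - \mathrm{BIC}(\mu_2, \Sigma_2, O_{t+1}^T)$$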


$$\left(D + \tfrac{1}{2} D(D+1)\right)$$
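The BIC comparison between one Gaussian over the whole segment and two Gaussians split at frame $t$ can be sketched as follows. This is a sketch under stated assumptions: the slides' exact BIC definition did not survive extraction, so the code assumes BIC = log-likelihood minus $(\lambda/2) \cdot \#\text{params} \cdot \log N$, with $D + \tfrac{1}{2}D(D+1)$ free parameters for a full-covariance Gaussian. The names `gaussian_loglik`, `bic`, `delta_bic`, and the weight `lam` are hypothetical.

```python
import numpy as np

def gaussian_loglik(X):
    """Log-likelihood of the rows of X under their own ML full-covariance Gaussian."""
    n, d = X.shape
    cov = np.cov(X, rowvar=False, bias=True).reshape(d, d)  # ML covariance estimate
    _, logdet = np.linalg.slogdet(cov)
    # At the ML estimate the quadratic terms sum to n * d.
    return -0.5 * n * (d * np.log(2 * np.pi) + logdet + d)

def bic(X, lam=1.0):
    """Assumed definition: log-likelihood minus (lam / 2) * #params * log(n)."""
    n, d = X.shape
    n_params = d + 0.5 * d * (d + 1)  # mean plus symmetric covariance
    return gaussian_loglik(X) - 0.5 * lam * n_params * np.log(n)

def delta_bic(X, t, lam=1.0):
    """BIC(all of X) - BIC(X[:t]) - BIC(X[t:]); negative values favor a split at t."""
    return bic(X, lam) - bic(X[:t], lam) - bic(X[t:], lam)

# Usage with synthetic data: two segments with clearly different means.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (200, 2)), rng.normal(5.0, 1.0, (200, 2))])
print(delta_bic(X, 200))
```

Under this sign convention, a change point is hypothesized where the difference is negative; other references define the difference with the opposite sign.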


$$\arg\max_{A,\omega} \{P(\omega)\, P(O \mid \omega, \mu, \sigma, A)\}$$


$$-\frac{(O_t - \mu_k)^2}{2\sigma_k^2}, \qquad k = 1, \ldots, K, \quad t = 1, \ldots, T$$

$$k_1^T = s_1, \ldots, s_T = \arg\max_{k_1^T} \sum_{t=1}^{T} -\frac{(O_t - \mu_{k_t})^2}{2\sigma_{k_t}^2}$$
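The selection of the best Gaussian index per frame can be illustrated with a short sketch. It assumes scalar observations and no constraints linking consecutive frames, so the maximization over the whole index sequence decomposes into an independent choice per frame; the function name `best_sequence` and the toy values are hypothetical.

```python
import numpy as np

def best_sequence(obs, means, sigmas):
    """For each frame t, pick the k maximizing -(O_t - mu_k)^2 / (2 sigma_k^2)."""
    obs = np.asarray(obs, dtype=float)[:, None]         # shape (T, 1)
    means = np.asarray(means, dtype=float)[None, :]     # shape (1, K)
    sigmas = np.asarray(sigmas, dtype=float)[None, :]   # shape (1, K)
    scores = -((obs - means) ** 2) / (2.0 * sigmas ** 2)  # shape (T, K)
    # Best index per frame, and the total score of that sequence.
    return scores.argmax(axis=1), scores.max(axis=1).sum()

# Toy usage: three frames, three unit-variance Gaussians.
seq, total = best_sequence([0.1, 0.9, 2.2], [0.0, 1.0, 2.0], [1.0, 1.0, 1.0])
print(seq, total)
```

If the indices were tied to HMM states with transition constraints, a dynamic-programming search would be needed instead of this per-frame choice.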


stA) = 0


$$\frac{(O_t - A\mu_k)^2}{2\sigma^2}, \qquad k = 1, \ldots, K$$


$$O'_t = A \cdot O_t + b$$

$$P(O'_t) = \mathcal{N}(O'_t \mid \mu_k, \sigma_k) \;\Leftrightarrow\; P(O_t) = |A|\, \mathcal{N}(A O_t + b \mid \mu_k, \sigma_k)$$
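The role of the $|A|$ factor can be checked numerically in the scalar case. The sketch below assumes $\sigma_k$ denotes a standard deviation and uses hypothetical values for $A$, $b$, $\mu$, and $\sigma$; it verifies that $|A|\,\mathcal{N}(AO + b \mid \mu, \sigma)$ is a properly normalized density over $O$.

```python
import numpy as np

def normal_pdf(x, mu, sigma):
    """Scalar Gaussian density with mean mu and standard deviation sigma."""
    return np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2 * np.pi))

def transformed_density(o, A, b, mu, sigma):
    """Density of O when O' = A * O + b follows N(mu, sigma): |A| * N(A*o + b | mu, sigma)."""
    return abs(A) * normal_pdf(A * o + b, mu, sigma)

# Hypothetical parameter values, chosen only for illustration.
A, b, mu, sigma = 2.0, -1.0, 0.5, 1.5
grid = np.linspace(-20.0, 20.0, 200001)
dx = grid[1] - grid[0]
area = transformed_density(grid, A, b, mu, sigma).sum() * dx  # Riemann sum
print(area)
```

Without the $|A|$ factor the same sum would come out as $1/|A|$, which is why the Jacobian term has to appear in the likelihood when $A$ is estimated.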
