Jian Pei: CMPT 741/459 Frequent Pattern Mining (2) 1

Frequent Itemsets

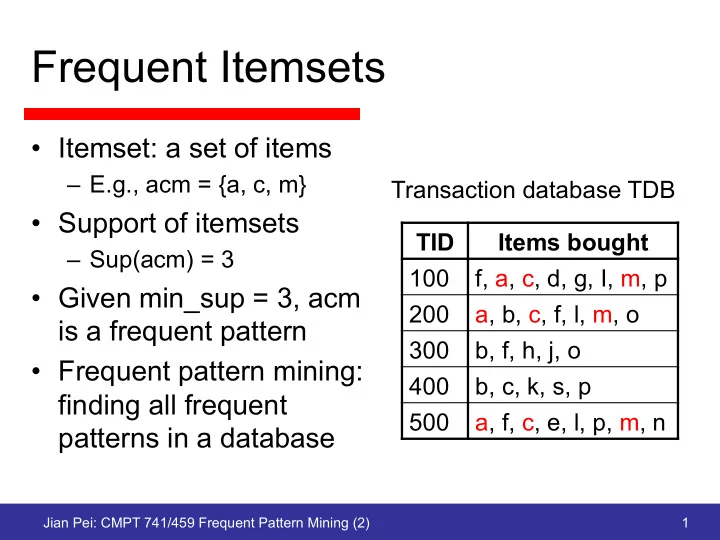

- Itemset: a set of items

– E.g., acm = {a, c, m}

- Support of itemsets

– Sup(acm) = 3

- Given min_sup = 3, acm is a frequent pattern

- Frequent pattern mining:



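Database D (Min_sup = 2)

| TID | Items |
|---|---|
| 10 | a, c, d |
| 20 | b, c, e |
| 30 | a, b, c, e |
| 40 | b, e |

Scan D: 1-candidates

| Itemset | Sup |
|---|---|
| a | 2 |
| b | 3 |
| c | 3 |
| d | 1 |
| e | 3 |

Freq 1-itemsets

| Itemset | Sup |
|---|---|
| a | 2 |
| b | 3 |
| c | 3 |
| e | 3 |

2-candidates: ab, ac, ae, bc, be, ce

Scan D: counting

| Itemset | Sup |
|---|---|
| ab | 1 |
| ac | 2 |
| ae | 1 |
| bc | 2 |
| be | 3 |
| ce | 2 |

Freq 2-itemsets

| Itemset | Sup |
|---|---|
| ac | 2 |
| bc | 2 |
| be | 3 |
| ce | 2 |

3-candidates: bce

Scan D: Freq 3-itemsets

| Itemset | Sup |
|---|---|
| bce | 2 |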
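The level-wise walk above (generate candidates, scan D to count, keep those meeting Min_sup) can be sketched in a few lines of Python. This is a minimal illustration, not the lecture's implementation: the function name `apriori`, the variable names, and the pairwise-union join are assumptions made for the example.

```python
from itertools import combinations

def apriori(transactions, min_sup):
    """Return {itemset: support} for every itemset with support >= min_sup."""
    transactions = [frozenset(t) for t in transactions]

    # First scan: count the 1-candidates.
    counts = {}
    for t in transactions:
        for item in t:
            key = frozenset([item])
            counts[key] = counts.get(key, 0) + 1
    frequent = {s: c for s, c in counts.items() if c >= min_sup}
    result = dict(frequent)

    k = 2
    while frequent:
        # Join: union pairs of frequent (k-1)-itemsets that give a k-itemset.
        # Prune: keep a candidate only if all its (k-1)-subsets are frequent.
        prev = list(frequent)
        candidates = set()
        for i in range(len(prev)):
            for j in range(i + 1, len(prev)):
                union = prev[i] | prev[j]
                if len(union) == k and all(
                    frozenset(sub) in frequent
                    for sub in combinations(union, k - 1)
                ):
                    candidates.add(union)

        # Scan D once more to count the surviving k-candidates.
        counts = {c: 0 for c in candidates}
        for t in transactions:
            for c in candidates:
                if c <= t:
                    counts[c] += 1
        frequent = {s: c for s, c in counts.items() if c >= min_sup}
        result.update(frequent)
        k += 1
    return result

# Database D from the slide, with Min_sup = 2.
D = [{"a", "c", "d"}, {"b", "c", "e"}, {"a", "b", "c", "e"}, {"b", "e"}]
frequent_itemsets = apriori(D, 2)
for itemset, sup in sorted(frequent_itemsets.items(),
                           key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print("".join(sorted(itemset)), sup)
```

On D this yields the four frequent 1-itemsets, the four frequent 2-itemsets, and bce with support 2, matching the tables. Candidates abc and ace are pruned before counting because ab and ae are infrequent.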


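DIC: Dynamic Itemset Counting (… and S. Tsur, SIGMOD’97)

Itemset lattice (levels from top to bottom):

- ABCD
- ABC, ABD, ACD, BCD
- AB, AC, BC, AD, BD, CD
- A, B, C, D
- {}

- Once both A and D are determined frequent, the counting of AD can begin
- Once all length-2 subsets of BCD are determined frequent, the counting of BCD can begin

Figure: timeline over the transactions. Apriori counts 1-itemsets, then 2-itemsets, …, one full scan each; DIC starts counting 2-itemsets and 3-itemsets while earlier counts are still in progress.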


$$\binom{100}{1} + \binom{100}{2} + \cdots + \binom{100}{100} = 2^{100} - 1 \approx 1.27 \times 10^{30}$$
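As a quick check of this arithmetic, the sum of binomial coefficients can be evaluated exactly with Python's arbitrary-precision integers. The variable names are illustrative only.

```python
from math import comb

n = 100
# Number of non-empty subsets of a 100-item set: C(100,1) + ... + C(100,100).
total = sum(comb(n, k) for k in range(1, n + 1))

print(total == 2**n - 1)   # True
print(f"{total:.2e}")      # 1.27e+30
```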


Itemset lattice (levels from top to bottom):

- ABCD
- ABC, ABD, ACD, BCD
- AB, AC, BC, AD, BD, CD
- A, B, C, D
- {}


Set enumeration tree


| TID | Items bought | (Ordered) freq items |
|---|---|---|
| 100 | f, a, c, d, g, i, m, p | f, c, a, m, p |
| 200 | a, b, c, f, l, m, o | f, c, a, b, m |
| 300 | b, f, h, j, o | f, b |
| 400 | b, c, k, s, p | c, b, p |
| 500 | a, f, c, e, l, p, m, n | f, c, a, m, p |

Header table items: f, c, a, b, m, p

FP-tree:

- root
  - f:4
    - c:3
      - a:3
        - m:2
          - p:2
        - b:1
          - m:1
    - b:1
  - c:1
    - b:1
      - p:1
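The tree can be rebuilt from the transaction table by inserting each transaction's frequent items in header-table order and sharing common prefixes. The sketch below assumes the item order f, c, a, b, m, p is given up front (ties in support are broken the way the slide breaks them); `Node`, `build_fp_tree`, and `show` are hypothetical names chosen for the example.

```python
class Node:
    """One FP-tree node: an item, a count, and child links keyed by item."""
    def __init__(self, item, parent=None):
        self.item = item
        self.count = 0
        self.parent = parent
        self.children = {}

def build_fp_tree(transactions, f_list):
    """Insert each transaction's frequent items, ordered by f_list, into a prefix tree."""
    rank = {item: i for i, item in enumerate(f_list)}
    root = Node(None)
    header = {item: [] for item in f_list}   # item -> nodes carrying that item
    for t in transactions:
        ordered = sorted((i for i in set(t) if i in rank), key=rank.get)
        node = root
        for item in ordered:
            child = node.children.get(item)
            if child is None:
                child = Node(item, node)
                node.children[item] = child
                header[item].append(child)
            child.count += 1
            node = child
    return root, header

def show(node, depth=0):
    """Print the tree as an indented outline."""
    for child in node.children.values():
        print("  " * depth + f"{child.item}:{child.count}")
        show(child, depth + 1)

TDB = [
    "facdgimp",   # TID 100
    "abcflmo",    # TID 200
    "bfhjo",      # TID 300
    "bcksp",      # TID 400
    "afcelpmn",   # TID 500
]
root, header = build_fp_tree(TDB, "fcabmp")
show(root)
```

The `header` dictionary plays the role of the header table: for each item it lists the tree nodes that carry it, which is what the node-links in the figure encode.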



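Global FP-tree (header table items: f, c, a, b, m, p), paths from root:

- f:4, c:3, a:3, m:2, p:2
- f:4, c:3, a:3, b:1, m:1
- f:4, b:1
- c:1, b:1, p:1

Conditional FP-tree (header table items: f, c, a); the counts match item m, whose prefix paths are fca:2 and fcab:1:

- root
  - f:3
    - c:3
      - a:3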
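The step from the global tree to the small f:3, c:3, a:3 tree can be sketched as follows. As a simplification, the example reads prefixes from the ordered frequent-item lists instead of walking node-links in the tree; both give the same prefix paths. It assumes min_sup = 3, and the function names are hypothetical.

```python
from collections import Counter

# Ordered frequent items of TIDs 100..500, in the order f, c, a, b, m, p.
ORDERED_DB = ["fcamp", "fcabm", "fb", "cbp", "fcamp"]

def conditional_pattern_base(ordered_db, item):
    """Collect the prefix that precedes `item` in each transaction, with multiplicity."""
    base = Counter()
    for t in ordered_db:
        if item in t:
            prefix = t[:t.index(item)]
            if prefix:
                base[prefix] += 1
    return base

def conditional_item_counts(base, min_sup):
    """Count items inside the pattern base and drop those below min_sup."""
    counts = Counter()
    for prefix, n in base.items():
        for it in prefix:
            counts[it] += n
    return {it: c for it, c in counts.items() if c >= min_sup}

m_base = conditional_pattern_base(ORDERED_DB, "m")
m_counts = conditional_item_counts(m_base, min_sup=3)
print(dict(m_base))   # prefix paths of m with their counts
print(m_counts)       # items kept in the conditional tree for m
```

For m, the pattern base is fca (twice) and fcab (once). Item b occurs only once there and is dropped, which leaves the single path f:3, c:3, a:3.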


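Figure: an FP-tree with a single prefix path root, a1:n1, a2:n2, a3:n3, followed by branches over b1:m1, c1:k1, c2:k2, c3:k3. The tree is split into the single prefix path (root, a1:n1, a2:n2, a3:n3) and a multi-branch part rooted at r1 (b1:m1, c1:k1, c2:k2, c3:k3).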


Transaction DB (ordered frequent items): fcamp, fcabm, fb, cbp, fcamp

- p-proj DB: fcam, cb, fcam
- m-proj DB: fcab, fca, fca
- b-proj DB: f, cb, …
- a-proj DB: fc, …
- c-proj DB: f, …
- f-proj DB: …
- am-proj DB: fc, fc, fc
- cm-proj DB: f, f, f
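Projection can be applied recursively to enumerate all frequent patterns: project on an item, count items in the projected database, and recurse on each one that is still frequent. The sketch below is an illustration under assumptions: transactions are strings already ordered by f, c, a, b, m, p, min_sup = 3, and `project` and `mine` are hypothetical names. It copies projected databases instead of using any shared structure.

```python
from collections import Counter

ORDERED_DB = ["fcamp", "fcabm", "fb", "cbp", "fcamp"]

def project(db, item):
    """Keep, for each transaction containing `item`, the items that precede it."""
    return [t[:t.index(item)] for t in db if item in t]

def mine(db, min_sup, suffix=""):
    """Return {pattern: support}; each pattern is written in f-list order."""
    counts = Counter(it for t in db for it in t)
    patterns = {}
    for it, c in counts.items():
        if c >= min_sup:
            pattern = it + suffix
            patterns[pattern] = c
            # Recurse on the projected database of the extended pattern.
            patterns.update(mine(project(db, it), min_sup, pattern))
    return patterns

p_proj = project(ORDERED_DB, "p")
m_proj = project(ORDERED_DB, "m")
am_proj = project(m_proj, "a")
cm_proj = project(m_proj, "c")
patterns = mine(ORDERED_DB, min_sup=3)
print(sorted(patterns.items(), key=lambda kv: (len(kv[0]), kv[0])))
```

For p, m, am, and cm the projected databases contain the same entries as the lists above, and the longest pattern found is fcam with support 3.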


Ghoting et al., VLDB05


| Tid | Items | Freq-item projection |
|---|---|---|
| 100 | c, d, e, f, g, i | c, d, e, g |
| 200 | a, c, d, e, m | a, c, d, e |
| 300 | a, b, d, e, g, k | a, d, e, g |
| 400 | a, c, d, h | a, c, d |

F-list = a-c-d-e-g

Header table H: a:3, c:3, d:4, e:3, g:2

Frequent projections:

- 100: c, d, e, g
- 200: a, c, d, e
- 300: a, d, e, g
- 400: a, c, d


Header table H: a:3, c:3, d:4, e:3, g:2

Frequent projections:

- 100: c, d, e, g
- 200: a, c, d, e
- 300: a, d, e, g
- 400: a, c, d

Header table Ha: c:2, d:3, e:2, g:1
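The counts in H and Ha can be reproduced from the frequent-item projections. The sketch below only recomputes the header-table counts and copies the projected suffixes; it does not model the links between projections shown in the figure. The names `header_counts` and `project_after` are assumptions for the example.

```python
from collections import Counter

# Frequent-item projections, ordered by the F-list a-c-d-e-g.
PROJECTIONS = {100: "cdeg", 200: "acde", 300: "adeg", 400: "acd"}

def header_counts(projections):
    """Support of each item across a collection of projections."""
    return Counter(it for p in projections for it in p)

def project_after(projections, item):
    """For projections containing `item`, keep the items that follow it in F-list order."""
    return [p[p.index(item) + 1:] for p in projections if item in p]

H = header_counts(PROJECTIONS.values())
a_projected = project_after(PROJECTIONS.values(), "a")
Ha = header_counts(a_projected)

print(dict(H))
print(a_projected)
print(dict(Ha))
```

Only transactions 200, 300, and 400 contain a, so Ha is built from cde, deg, and cd.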
