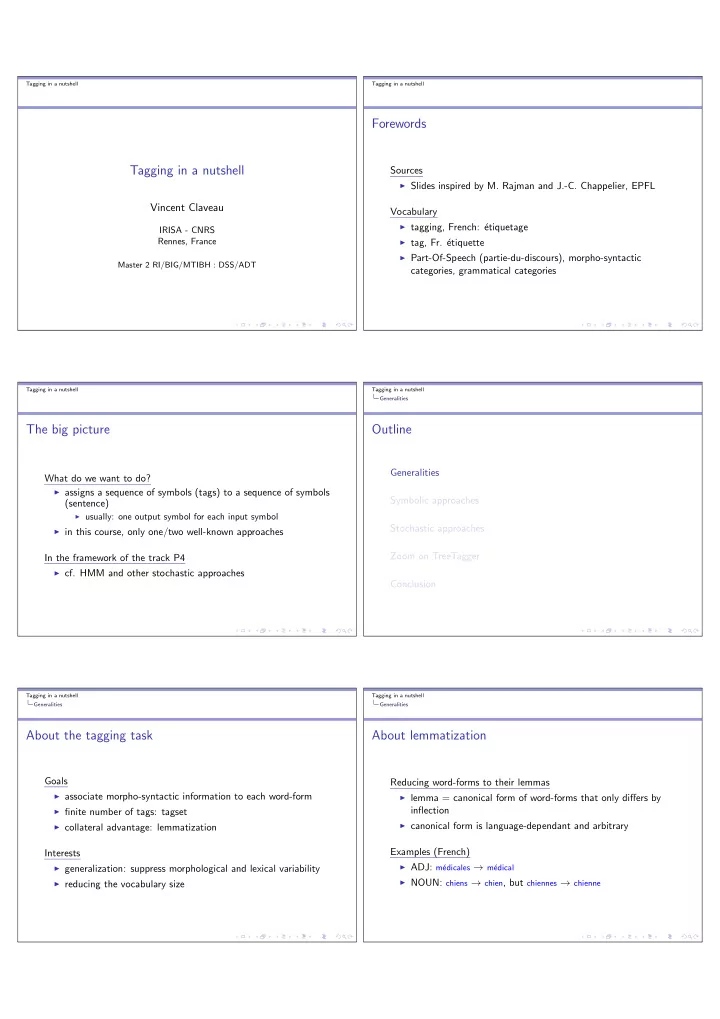

SLIDE 2 Tagging in a nutshell Generalities

Examples of tagged texts 1/2

Vous/PRV:pl faites/VCJ:pl preuve/SBC:sg de/PREP mesure/SBC:sg dans/PREP vos/DTN:pl propos/SBC:pl ,/, et/COO votre/DTN:sg discours/SBC:sg est/ECJ:sg toujours/ADV empreint/ADJ1PAR:sg de/PREP réserve/SBC:sg ./. Vous/PRV:pl n’/ADV êtes/ECJ:pl certainement/ADV pas/ADV indifférent/SBC:sg ,/, mais/COO peu/ADV expansif/SBC:pl ./. Votre/DTN:sg approche/SBC:sg plutô...

peut/VCJ:sg amener/VNCFF vos/DTN:pl interlocuteurs/SBC:pl à/PREP penser/VNCFF que/SUB vous/PRV:pl portez/VCJ:pl une/DTN:sg grande/ADJ:sg attention/SBC:sg aux/DTC:pl conventions/SBC:pl ou/COO aux/DTC:pl usages/SBC:pl ./. Votre/DTN:sg comportement/SBC:sg peut/VCJ:sg ,/, par/PREP contre/PREP ,/, paraître/VNCFF assez/ADV fermé/ADJ2PAR:sg à/PREP ceux/PRO:pl qui/REL ont/ACJ:pl coutume/ADJ:sg de/PREP réagir/VNCFF spontanément/ADV ./. Votre/DTN:sg approche/SBC:sg sérieuse/ADJ:sg peut/VCJ:sg amener/VNCFF vos/DTN:pl interlocuteurs/SBC:pl à/PREP penser/VNCFF que/SUB vous/PRV:pl considérez/VCJ:pl le/DTN:sg temps/SBC:sg comme/SUB un/DTN:sg...
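The `form/TAG:number` notation used in this example is easy to read back into a program. The sketch below is only an illustration: the function name `parse_tagged` is hypothetical, and it assumes that tokens are separated by whitespace and that the tag follows the last slash of each token.

```python
def parse_tagged(text):
    """Split 'form/TAG[:number]' tokens into (form, tag, number) triples."""
    triples = []
    for token in text.split():
        # Split on the LAST slash, so the token "./." gives form "." and tag ".".
        form, sep, label = token.rpartition("/")
        if not sep:
            # No slash at all: keep the token as an untagged form.
            triples.append((token, None, None))
            continue
        # The optional ":sg" / ":pl" suffix carries the number.
        tag, _, number = label.partition(":")
        triples.append((form, tag, number or None))
    return triples


sample = "Vous/PRV:pl faites/VCJ:pl preuve/SBC:sg de/PREP mesure/SBC:sg ./."
parsed = parse_tagged(sample)
for form, tag, number in parsed:
    print(form, tag, number)
```

Tags without a number suffix, such as `PREP` or punctuation, come back with `None` as their number.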

Examples of tagged texts 2/2

===== DÉBUT DE PHRASE =====

1 3 6 Bien sûr bien sûr ADV 0x0000 Rgp 1

2 3 6 , , PCTFAIB 1

3 3 6 rien rien A2 PII 0xE080 Pi-.sn 3-3 S 1

4 3 6 n’ ne A2 ADV 0x0200 Rpn 5 V 1

5 3 6 A5 VINDP3S 5 V 1

6 3 6 un un A3 DETIMS 0xA000 Da-ms-i 7-7 D 1

7 3 6 site Web site web NCMS 0xA040 Ncms 7-7 D 1

8 3 6 à à PREP 0x0000 Sp 9 F 1

9 3 6 choisir choisir VINF 9 F 1

10 3 6 un un A3 DETIMS 0xA000 Da-ms-i 11 D 1

11 3 6 nom nom NCMS 0xA040 Ncms 11 D 1

12 3 6 en en A3 PREP 0x0000 Sp 13 H 1

13 3 6 www www NCI 0xF020 Nc.. 13 H 1

14 3 6 : : PCTFORTE - Yps

===== FIN DE PHRASE =====


Problems

Ambiguities

◮ most words are polyfunctional

◮ Ex. (Fr.): règle can be a common noun or a verb (indicative 1st person, 3rd person, subjunctive...)

◮ the degree of ambiguity depends on the tagset

Contextual disambiguation

◮ use the context to choose the most likely part of speech

◮ je règle la longueur avec la règle ("I adjust the length with the ruler": règle is first a verb, then a noun)

◮ hard task, not always possible

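◮ la belle ferme le voile ("the beautiful woman closes the veil" or "the beautiful farm veils it")

◮ la petite brise la glace ("the little girl breaks the ice" or "the light breeze chills her")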
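A minimal sketch of contextual disambiguation, assuming a toy lexicon and three hand-written rules that look only at the previous tag. The tag labels are borrowed from the tagged example earlier (SBC noun, VCJ conjugated verb, DTN determiner, PRV pronoun, PREP preposition); the lexicon entries, the rules and the function name `disambiguate` are illustrative assumptions, not part of any real tagger.

```python
# Toy lexicon: each word form maps to the set of tags it may carry (illustrative).
LEXICON = {
    "je": {"PRV"},
    "règle": {"SBC", "VCJ"},
    "la": {"DTN", "PRV"},
    "longueur": {"SBC"},
    "avec": {"PREP"},
}


def disambiguate(words):
    """Tag each word, using the previous tag to resolve ambiguous forms."""
    tags = []
    for word in words:
        candidates = LEXICON[word]
        previous = tags[-1] if tags else None
        if len(candidates) == 1:
            tag = next(iter(candidates))
        elif "SBC" in candidates and previous == "DTN":
            tag = "SBC"  # determiner + ambiguous form: read it as a noun
        elif "VCJ" in candidates and previous == "PRV":
            tag = "VCJ"  # pronoun + ambiguous form: read it as a verb
        elif "DTN" in candidates and previous in {"VCJ", "PREP"}:
            tag = "DTN"  # after a verb or a preposition: read it as a determiner
        else:
            tag = None   # the context is not enough to decide
        tags.append(tag)
    return tags


sentence = "je règle la longueur avec la règle".split()
result = disambiguate(sentence)
print(list(zip(sentence, result)))
```

The two occurrences of règle receive different tags because their left contexts differ. Rules this local leave a form untagged (`None`) when nothing in the immediate left context decides, which is one reason the task is not always solvable.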

Problems

Unknown word forms

◮ named entities

◮ person names, places, companies...

◮ imports

◮ words, phrases or sentences from another language: leasing...

◮ specialized terms

◮ from specialized domains: parenthésage, kinesimétrie...

◮ language register

◮ je la kiffe à donf, un fruit sur


Formalization of the task

Sequence to sequence

◮ for each word, given its context, find the correct tag

◮ "correct" means that a ground truth exists (given by a human expert), but even humans may disagree in some cases

Two families of approaches

◮ symbolic: Brill’s tagger

◮ stochastic: Multext tagger (HMM)
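To make the stochastic family concrete, here is a minimal sketch of Viterbi decoding for a first-order HMM tagger. It is not the Multext tagger's implementation: the tag inventory and all probabilities in `START`, `TRANS` and `EMIT` are made-up toy values chosen for illustration, and a real tagger would estimate them from an annotated corpus.

```python
TAGS = ["PRV", "VCJ", "DTN", "SBC"]

# Toy parameters (assumptions): P(first tag), P(tag | previous tag), P(word | tag).
START = {"PRV": 0.6, "DTN": 0.3, "SBC": 0.05, "VCJ": 0.05}
TRANS = {
    "PRV": {"PRV": 0.1, "VCJ": 0.8, "DTN": 0.05, "SBC": 0.05},
    "VCJ": {"PRV": 0.1, "VCJ": 0.1, "DTN": 0.6, "SBC": 0.2},
    "DTN": {"PRV": 0.05, "VCJ": 0.03, "DTN": 0.02, "SBC": 0.9},
    "SBC": {"PRV": 0.1, "VCJ": 0.5, "DTN": 0.1, "SBC": 0.3},
}
EMIT = {
    "PRV": {"je": 0.7, "la": 0.3},
    "VCJ": {"règle": 1.0},
    "DTN": {"la": 1.0},
    "SBC": {"règle": 1.0},
}


def viterbi(words, tags, start, trans, emit):
    """Return the most probable tag sequence for words under the HMM."""
    # best[i][t]: probability of the best tag sequence for words[:i+1] ending in t
    best = [{t: start[t] * emit[t].get(words[0], 0.0) for t in tags}]
    back = [{}]  # back[i][t]: previous tag on that best sequence
    for i in range(1, len(words)):
        best.append({})
        back.append({})
        for t in tags:
            prev = max(tags, key=lambda p: best[i - 1][p] * trans[p][t])
            best[i][t] = best[i - 1][prev] * trans[prev][t] * emit[t].get(words[i], 0.0)
            back[i][t] = prev
    # Follow the back-pointers from the best final tag.
    path = [max(tags, key=lambda t: best[-1][t])]
    for i in range(len(words) - 1, 0, -1):
        path.append(back[i][path[-1]])
    return path[::-1]


words = ["je", "règle", "la", "règle"]
decoded = viterbi(words, TAGS, START, TRANS, EMIT)
print(list(zip(words, decoded)))
```

Unlike a rule that commits to each tag from left to right, the decoder scores whole tag sequences, so a later word can influence the tag chosen for an earlier one. Plain probabilities are multiplied here for readability; on long sentences log-probabilities would be the safer choice.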


Evaluation

Comparison with ground-truth

◮ human annotation

◮ costly: only a sufficiently large sample of the corpus is annotated

Standard measures

◮ precision (sometimes recall)

◮ possibly evaluated category by category
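These measures can be sketched as follows, assuming the tagger assigns exactly one tag per token and that the gold and predicted sequences are aligned token by token. The function name `evaluate` and the sample sequences are illustrative, not taken from an actual evaluation.

```python
from collections import Counter


def evaluate(gold, predicted):
    """Return overall accuracy and per-tag (precision, recall)."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    correct, gold_count, predicted_count = Counter(), Counter(), Counter()
    for g, p in zip(gold, predicted):
        gold_count[g] += 1
        predicted_count[p] += 1
        if g == p:
            correct[g] += 1
    accuracy = sum(correct.values()) / len(gold)
    per_tag = {}
    for tag in sorted(set(gold) | set(predicted)):
        # Precision: share of tokens predicted as `tag` that really are `tag`.
        precision = correct[tag] / predicted_count[tag] if predicted_count[tag] else None
        # Recall: share of gold `tag` tokens that the tagger found.
        recall = correct[tag] / gold_count[tag] if gold_count[tag] else None
        per_tag[tag] = (precision, recall)
    return accuracy, per_tag


gold = ["PRV", "VCJ", "DTN", "SBC", "PREP", "DTN", "SBC"]
predicted = ["PRV", "SBC", "DTN", "SBC", "PREP", "DTN", "SBC"]
accuracy, per_tag = evaluate(gold, predicted)
print(accuracy)
for tag, scores in per_tag.items():
    print(tag, scores)
```

With one tag per token, overall precision and recall both reduce to accuracy, so the category-by-category breakdown is where the two measures become informative. Precision is reported as `None` for a tag the tagger never predicted.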