
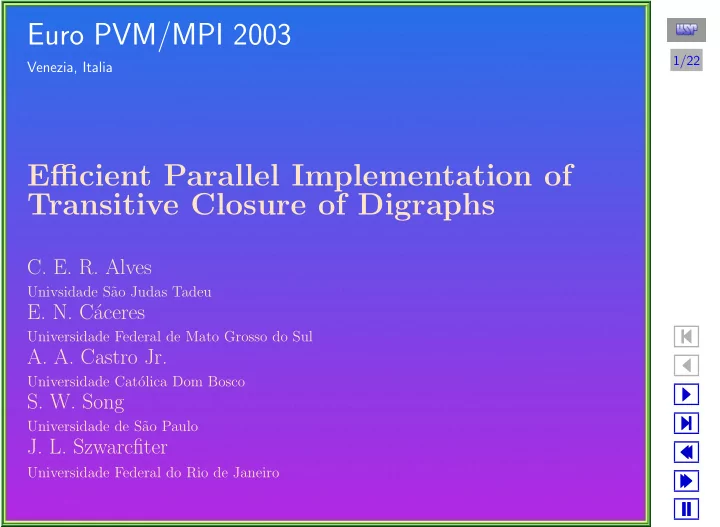

- Euro PVM/MPI 2003

Venezia, Italia

Efficient Parallel Implementation of Transitive Closure of Digraphs

- C. E. R. Alves

Universidade São Judas Tadeu

- E. N. Cáceres

Universidade Federal de Mato Grosso do Sul

- A. A. Castro Jr.

Universidade Católica Dom Bosco

- S. W. Song

Universidade de São Paulo

- J. L. Szwarcfiter

Universidade Federal do Rio de Janeiro