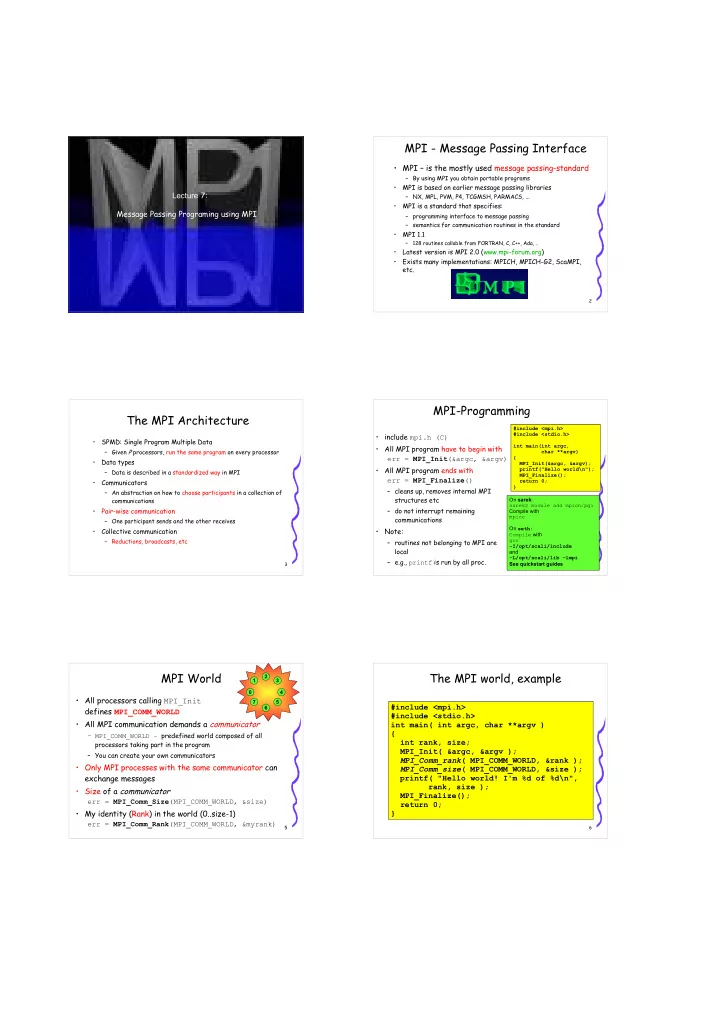

Lecture 7: Message Passing Programming using MPI

MPI - Message Passing Interface

- MPI is the most widely used message-passing standard

– By using MPI you obtain portable programs

- MPI is based on earlier message passing libraries

– NX, MPL, PVM, P4, TCGMSG, PARMACS, ...

- MPI is a standard that specifies:

– a programming interface for message passing

– the semantics of the communication routines in the standard

- MPI 1.1

– 128 routines callable from FORTRAN, C, C++, Ada, ...

- Latest version at the time of this lecture: MPI 2.0 (www.mpi-forum.org)

- Many implementations exist: MPICH, MPICH-G2, ScaMPI, etc.

The MPI Architecture

- SPMD: Single Program Multiple Data

– Given P processors, run the same program on every processor

- Data types

– Data is described in a standardized way in MPI

- Communicators

– An abstraction for choosing which processes take part in a given set of communications

- Pair-wise communication

– One participant sends and the other receives

- Collective communication

– Reductions, broadcasts, etc.

MPI Programming

- Include mpi.h (C)

- All MPI programs must begin with

err = MPI_Init(&argc, &argv);

- All MPI programs end with

err = MPI_Finalize();

– cleans up and removes internal MPI structures, etc.

– must not be called while communications are still pending; complete them first

- Note:

– routines that are not part of MPI are local, e.g., printf is executed by every process

```c
#include <mpi.h>
#include <stdio.h>

int main(int argc, char **argv)
{
    MPI_Init(&argc, &argv);
    printf("Hello world\n");
    MPI_Finalize();
    return 0;
}
```

On sarek:

sarek$ module add mpich/pgi

Compile with mpicc.

On seth: compile with gcc, adding the flags

-I/opt/scali/include

and

-L/opt/scali/lib -lmpi

See the quickstart guides.

MPI World

- The processes that call MPI_Init together form MPI_COMM_WORLD

- All MPI communication requires a communicator

– MPI_COMM_WORLD: the predefined communicator containing all processes taking part in the program

– You can create your own communicators

- Only MPI processes in the same communicator can exchange messages

- Size of a communicator

err = MPI_Comm_size(MPI_COMM_WORLD, &size);

- My identity (rank) in the world (0..size-1)

err = MPI_Comm_rank(MPI_COMM_WORLD, &myrank);


The MPI world, example

```c
#include <mpi.h>
#include <stdio.h>

int main(int argc, char **argv)
{
    int rank, size;

    MPI_Init(&argc, &argv);
    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    MPI_Comm_size(MPI_COMM_WORLD, &size);
    printf("Hello world! I'm %d of %d\n", rank, size);
    MPI_Finalize();
    return 0;
}
```