SLIDE 7 Programmability of CUDA vs. Java for GPUs

- CUDA requires programmers to explicitly write operations for
  - managing device memories
  - copying data between CPU and GPU
  - expressing parallelism
- Java 8 enables programmers to just focus on
  - expressing parallelism

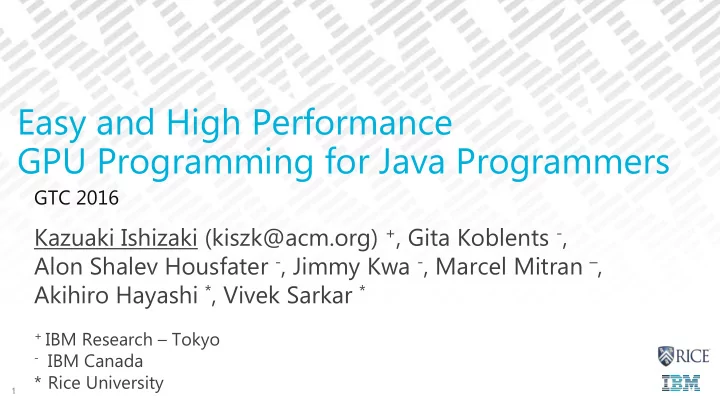

7 Easy and High Performance GPU Programming for Java Programmers

    // CUDA: code for GPU
    __global__ void GPU(float* d_a, float* d_b, int n) {
      // global index; valid for the <<<N, 1>>> launch below (one thread per block)
      int i = blockIdx.x * blockDim.x + threadIdx.x;
      if (n <= i) return;
      d_b[i] = d_a[i] * 2.0f;
    }

    // Java 8: only the parallel loop is written
    void fooJava(float A[], float B[], int n) {
      // similar to for (i = 0; i < n; i++)
      IntStream.range(0, n).parallel().forEach(i -> {
        B[i] = A[i] * 2.0f;
      });
    }

    // CUDA: host code for memory management, copies, and kernel launch
    void fooCUDA(float *A, float *B, int N) {
      float *d_A, *d_B;
      int sizeN = N * sizeof(float);
      cudaMalloc(&d_A, sizeN);
      cudaMalloc(&d_B, sizeN);
      cudaMemcpy(d_A, A, sizeN, cudaMemcpyHostToDevice);
      GPU<<<N, 1>>>(d_A, d_B, N);
      cudaMemcpy(B, d_B, sizeN, cudaMemcpyDeviceToHost);
      cudaFree(d_B);
      cudaFree(d_A);
    }
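The Java side of the comparison can be tried as a self-contained program. The sketch below is a minimal example, not the deck's own code: the class name `ScaleExample` and the method names `scaleSequential` and `scaleParallel` are hypothetical, chosen for illustration. It assumes a standard Java 8 or later JVM, where the parallel stream runs on CPU worker threads; whether the same lambda is offloaded to a GPU depends on the runtime and is outside what this sketch shows.

```java
import java.util.Arrays;
import java.util.stream.IntStream;

// Hypothetical class name, used only for this illustration.
class ScaleExample {
    // Sequential baseline: writes a[i] * 2 into b[i] for 0 <= i < n.
    static void scaleSequential(float[] a, float[] b, int n) {
        for (int i = 0; i < n; i++) {
            b[i] = a[i] * 2.0f;
        }
    }

    // Parallel-stream version of the same loop. Each index i is handled
    // independently, so no synchronization is needed: every iteration
    // writes to a distinct element of b.
    static void scaleParallel(float[] a, float[] b, int n) {
        IntStream.range(0, n).parallel().forEach(i -> {
            b[i] = a[i] * 2.0f;
        });
    }

    public static void main(String[] args) {
        float[] a = {1.0f, 2.0f, 3.0f};
        float[] b = new float[a.length];
        scaleParallel(a, b, a.length);
        System.out.println(Arrays.toString(b)); // prints [2.0, 4.0, 6.0]
    }
}
```

Note that the program contains no allocation of device memory and no explicit copies: the only parallel-specific code is the `IntStream.range(...).parallel().forEach(...)` call, which is the point the slide makes about Java 8.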