Recall, Expected Value

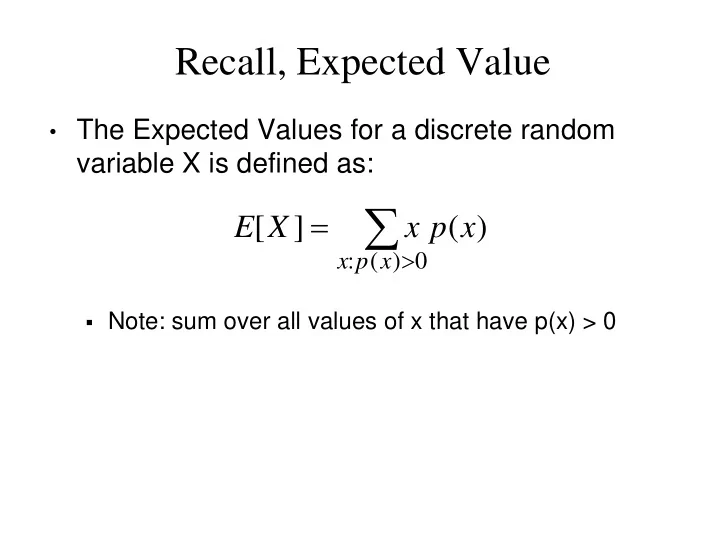

- The expected value of a discrete random variable X is defined as:

$$E[X] = \sum_{x:\, p(x) > 0} x\, p(x)$$

- Note: the sum is over all values of x that have p(x) > 0.

The St. Petersburg Paradox

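Proof:

$$E[X] = \left(\tfrac{1}{2}\right)^{1} 2^{0} + \left(\tfrac{1}{2}\right)^{2} 2^{1} + \left(\tfrac{1}{2}\right)^{3} 2^{2} + \cdots = \sum_{i=0}^{\infty} \left(\tfrac{1}{2}\right)^{i+1} 2^{i} = \sum_{i=0}^{\infty} \frac{1}{2} = \infty$$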
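As a rough numerical illustration of the proof, the sketch below adds up the first few terms (1/2)^(i+1) · 2^i of the series with exact fractions. The function name `st_petersburg_partial_sum` is made up for this example. Each term contributes exactly 1/2, so the partial sums grow without bound.

```python
from fractions import Fraction


def st_petersburg_partial_sum(n_terms):
    """Sum the first n_terms terms (1/2)^(i+1) * 2^i of the series for E[X]."""
    half = Fraction(1, 2)
    return sum(half ** (i + 1) * 2 ** i for i in range(n_terms))


# The partial sum of n terms is n/2, so it never settles on a finite value.
for n_terms in (1, 10, 100, 1000):
    print(n_terms, st_petersburg_partial_sum(n_terms))
```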

$$\sum_{i=0}^{n} x^{i} = x^{0} + x^{1} + x^{2} + x^{3} + \cdots + x^{n} = \frac{1 - x^{n+1}}{1 - x}, \quad x \neq 1$$

1. Y = $1
2. Bet Y
3. If Win then STOP
4. If Loss then Y = 2 * Y, go to Step 2
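The four steps above can be sketched as a small simulation. This is only an illustration under stated assumptions: the bet pays even money, the bankroll is unlimited, and the win probability is 18/38 (the figure that appears in the expectation that follows). The function name `play_doubling_series` is hypothetical.

```python
import random


def play_doubling_series(p_win, rng):
    """Run one series of bets with the doubling rule.

    Returns (net winnings, number of bets placed).
    Assumes even-money payoff and an unlimited bankroll.
    """
    bet = 1      # Step 1: Y = $1
    net = 0
    rounds = 0
    while True:
        rounds += 1                # Step 2: bet Y
        if rng.random() < p_win:   # Step 3: a win pays the stake, then stop
            return net + bet, rounds
        net -= bet                 # Step 4: a loss costs the stake ...
        bet *= 2                   # ... and the next bet is doubled


rng = random.Random(0)  # fixed seed so the run is repeatable
results = [play_doubling_series(18 / 38, rng) for _ in range(10_000)]
print({net for net, _ in results})                     # distinct net winnings seen
print(max(rounds for _, rounds in results))            # longest losing streak + 1
```

Under these assumptions every series ends exactly $1 ahead, because a win at stake 2^k recovers the 2^k − 1 lost on the earlier bets. The stake needed to get there can grow very large, which the second printed value hints at.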

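$$\begin{aligned}
E[Z] &= \frac{18}{38}\, 2^{0} + \frac{20}{38} \cdot \frac{18}{38} \left(2^{1} - 1\right) + \left(\frac{20}{38}\right)^{2} \frac{18}{38} \left(2^{2} - 2 - 1\right) + \cdots \\
&= \sum_{i=0}^{\infty} \left(\frac{20}{38}\right)^{i} \frac{18}{38} \left(2^{i} - \sum_{j=0}^{i-1} 2^{j}\right) \\
&= \frac{18}{38} \sum_{i=0}^{\infty} \left(\frac{20}{38}\right)^{i} = \frac{18}{38} \cdot \frac{1}{1 - \frac{20}{38}} = 1
\end{aligned}$$

The bracketed payoff is always 1, because $\sum_{j=0}^{i-1} 2^{j} = 2^{i} - 1$. The last step sums a geometric series with ratio 20/38.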
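To check the series for E[Z] term by term, the following sketch evaluates its partial sums with exact fractions. The names `P_WIN`, `P_LOSS`, and `expected_winnings_partial` are chosen for this example only. Term i is (20/38)^i · (18/38) · (2^i − Σ_{j<i} 2^j).

```python
from fractions import Fraction

P_WIN = Fraction(18, 38)   # probability of winning a single bet
P_LOSS = Fraction(20, 38)  # probability of losing a single bet


def expected_winnings_partial(n_terms):
    """Sum the first n_terms terms of the series for E[Z]."""
    total = Fraction(0)
    for i in range(n_terms):
        # Win 2^i on bet i after losing 2^0 + ... + 2^(i-1) on earlier bets.
        payoff = 2 ** i - sum(2 ** j for j in range(i))
        total += P_LOSS ** i * P_WIN * payoff
    return total


for n_terms in (1, 5, 20, 50):
    print(n_terms, float(expected_winnings_partial(n_terms)))
```

The partial sum of n terms equals 1 − (20/38)^n, so the printed values climb toward 1.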

P(X = 0) = p(0) = 1 – p

$$P(X = i) = p(i) = \binom{n}{i} p^{i} (1 - p)^{n - i}, \quad i = 0, 1, \ldots, n$$
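A minimal sketch of this probability mass function, using `math.comb` from the standard library (Python 3.8 or later). The function name `binomial_pmf` is an assumption made for this example. Passing `p` as a `Fraction` keeps the arithmetic exact.

```python
from fractions import Fraction
from math import comb


def binomial_pmf(n, i, p):
    """P(X = i) = C(n, i) * p^i * (1 - p)^(n - i) for i = 0, 1, ..., n."""
    return comb(n, i) * p ** i * (1 - p) ** (n - i)


# Example: n = 3 trials with p = 1/2.
p = Fraction(1, 2)
pmf = [binomial_pmf(3, i, p) for i in range(4)]
print(pmf)
print(sum(pmf))  # the probabilities over i = 0..n add up to 1
```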

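With n = 3 and p = 1/2:

$$\begin{aligned}
P(X = 0) &= \binom{3}{0} p^{0} (1 - p)^{3} = \frac{1}{8} \\
P(X = 1) &= \binom{3}{1} p^{1} (1 - p)^{2} = \frac{3}{8} \\
P(X = 2) &= \binom{3}{2} p^{2} (1 - p)^{1} = \frac{3}{8} \\
P(X = 3) &= \binom{3}{3} p^{3} (1 - p)^{0} = \frac{1}{8}
\end{aligned}$$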

[Figure: plots of P(X = k) against k]

with probability 0.1

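With n = 7:

$$\begin{aligned}
P(X = 0) &= \binom{7}{0} (0.1)^{0} (0.9)^{7} \approx 0.4783 \\
P(X = 1) &= \binom{7}{1} (0.1)^{1} (0.9)^{6} \approx 0.3720
\end{aligned}$$

With n = 4:

$$P(X = 0) = \binom{4}{0} (0.1)^{0} (0.9)^{4} = 0.6561$$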

$$P(X = 3) = \binom{4}{3} (0.75)^{3} (0.25)^{1} \approx 0.4219$$
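The numerical values above can be reproduced in a few lines. This is a sketch only; the helper name `binom_prob` is hypothetical, and floating-point results are rounded to four decimals for comparison with the figures quoted in the text.

```python
from math import comb


def binom_prob(n, k, p):
    """Binomial probability C(n, k) * p^k * (1 - p)^(n - k)."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)


# (n, k, p) triples taken from the worked examples.
cases = [(7, 0, 0.1), (7, 1, 0.1), (4, 0, 0.1), (4, 3, 0.75)]
values = [round(binom_prob(n, k, p), 4) for n, k, p in cases]
print(values)
```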

$$P(2n \text{ voters tie}) = \binom{2n}{n} \left(\frac{1}{2}\right)^{n} \left(\frac{1}{2}\right)^{n} = \frac{(2n)!}{n!\, n!\, 2^{2n}}$$

Stirling's approximation:

$$n! \approx n^{n + 1/2}\, e^{-n} \sqrt{2\pi}$$

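$$P(2n \text{ voters tie}) \approx \frac{(2n)^{2n + 1/2}\, e^{-2n} \sqrt{2\pi}}{n^{2n + 1}\, e^{-2n}\, 2\pi\, 2^{2n}} = \frac{1}{\sqrt{n\pi}}$$

$$\frac{1}{\sqrt{(a/2)\,\pi}}\,(ac) = \frac{c\sqrt{2a}}{\sqrt{\pi}}$$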
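To see how close the 1/√(nπ) approximation is, the sketch below compares it with the exact tie probability (2n)! / (n! n! 2^(2n)). Both function names are made up for this example, and the chosen values of n are arbitrary.

```python
import math


def tie_probability_exact(n):
    """Exact probability that 2n fair-coin voters split n to n."""
    # Python integers are arbitrary precision, so this stays exact
    # until the final division converts the ratio to a float.
    return math.comb(2 * n, n) / 4 ** n


def tie_probability_stirling(n):
    """Approximation 1 / sqrt(n * pi) obtained from Stirling's formula."""
    return 1 / math.sqrt(n * math.pi)


for n in (1, 10, 100, 1000):
    exact = tie_probability_exact(n)
    approx = tie_probability_stirling(n)
    print(n, exact, approx, approx / exact)
```

The ratio in the last column moves toward 1 as n grows, which is what one would expect from an asymptotic approximation.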