6.8, 6.9 Probability

- P. Danziger

Probability

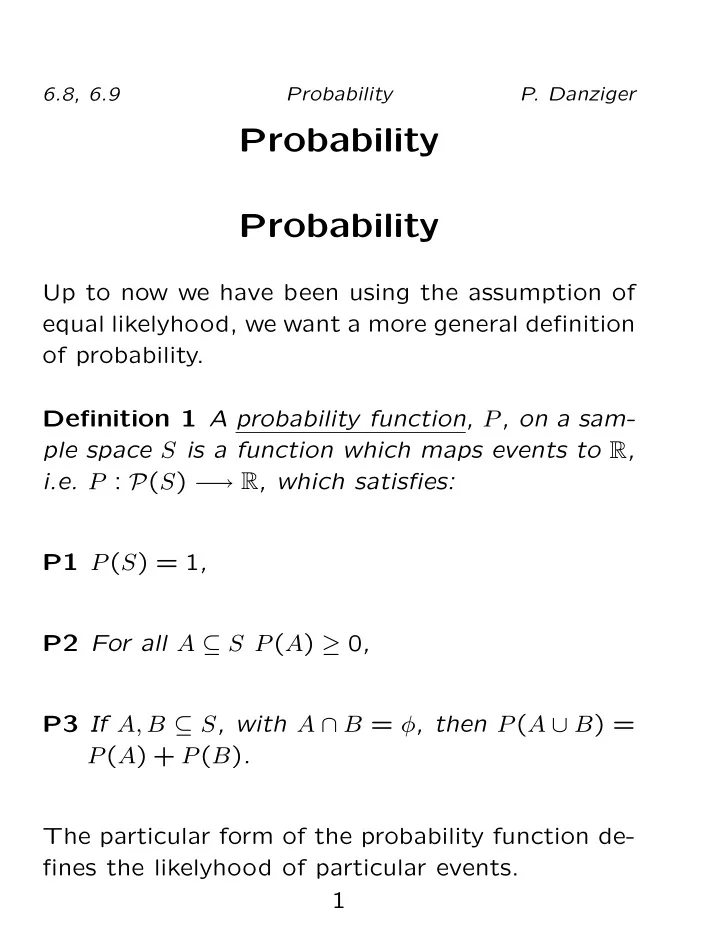

Up to now we have been using the assumption of equal likelihood; we now want a more general definition of probability.


Definition 1 A probability function, P, on a sample space S is a

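| $k$ | $\binom{25}{k}$ | $\binom{25}{k}/2^{25}$ |
|---|---|---|
| 0 | 1 | $2.98023224\times10^{-8}$ |
| 1 | 25 | $7.45058060\times10^{-7}$ |
| 2 | 300 | $8.94069672\times10^{-6}$ |
| 3 | 2300 | $6.85453415\times10^{-5}$ |
| 4 | 12650 | $3.76999378\times10^{-4}$ |
| 5 | 53130 | $1.58339739\times10^{-3}$ |
| 6 | 177100 | $5.27799129\times10^{-3}$ |
| 7 | 480700 | $1.43259764\times10^{-2}$ |
| 8 | 1081575 | $3.22334468\times10^{-2}$ |
| 9 | 2042975 | $6.08853996\times10^{-2}$ |
| 10 | 3268760 | $9.74166393\times10^{-2}$ |
| 11 | 4457400 | $1.32840872\times10^{-1}$ |
| 12 | 5200300 | $1.54981017\times10^{-1}$ |
| 13 | 5200300 | $1.54981017\times10^{-1}$ |
| 14 | 4457400 | $1.32840872\times10^{-1}$ |
| 15 | 3268760 | $9.74166393\times10^{-2}$ |
| 16 | 2042975 | $6.08853996\times10^{-2}$ |
| 17 | 1081575 | $3.22334468\times10^{-2}$ |
| 18 | 480700 | $1.43259764\times10^{-2}$ |
| 19 | 177100 | $5.27799129\times10^{-3}$ |
| 20 | 53130 | $1.58339739\times10^{-3}$ |
| 21 | 12650 | $3.76999378\times10^{-4}$ |
| 22 | 2300 | $6.85453415\times10^{-5}$ |
| 23 | 300 | $8.94069672\times10^{-6}$ |
| 24 | 25 | $7.45058060\times10^{-7}$ |
| 25 | 1 | $2.98023224\times10^{-8}$ |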
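The tabulated values can be checked numerically. The sketch below assumes, based on the numbers themselves, that the second column is the binomial coefficient $\binom{25}{k}$ and the third is that coefficient divided by $2^{25}$ (for instance, the probability of exactly $k$ heads in 25 tosses of a fair coin; that interpretation is an assumption, as the surrounding text is missing). The names `N`, `binomial_row`, and `binomial_table` are illustrative only.

```python
from math import comb

N = 25  # number of trials, inferred from the table (k runs from 0 to 25)


def binomial_row(k, n=N):
    """Return (C(n, k), C(n, k) / 2**n) for a single value of k."""
    count = comb(n, k)
    return count, count / 2**n


def binomial_table(n=N):
    """Return a list of (k, C(n, k), C(n, k) / 2**n) for k = 0..n."""
    return [(k, *binomial_row(k, n)) for k in range(n + 1)]


if __name__ == "__main__":
    for k, count, prob in binomial_table():
        print(f"{k:2d} {count:8d} {prob:.8e}")
```

Since the coefficients sum to $2^{25}$, the third column sums to 1, which is a quick sanity check on the whole table.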

$$\frac{\binom{?}{1}\binom{39}{3}}{\binom{?}{4}}$$

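$$\frac{\frac{3}{8}\cdot\frac{1}{3}}{\frac{3}{8}\cdot\frac{1}{3}+\frac{3}{8}\cdot\frac{1}{3}+\frac{2}{10}\cdot\frac{1}{3}}
=\frac{\frac{3}{24}}{\frac{3}{24}+\frac{3}{24}+\frac{1}{15}}
=\frac{15}{38}$$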
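The quotient above has the shape of a Bayes' theorem calculation: three equally weighted cases (each with weight 1/3) and conditional probabilities 3/8, 3/8 and 2/10. That reading is an assumption, since the problem statement is not part of this extract. Under it, a minimal sketch with exact rational arithmetic confirms the simplification; `prior`, `likelihoods`, and `posterior` are illustrative names.

```python
from fractions import Fraction

# Values read off the expression above (assumed interpretation):
# three cases, each with weight 1/3, and conditional probabilities
# 3/8, 3/8 and 2/10 for the observed event.
prior = Fraction(1, 3)
likelihoods = [Fraction(3, 8), Fraction(3, 8), Fraction(2, 10)]


def posterior(index, likelihoods, prior):
    """Weight of case `index` divided by the total weight of all cases."""
    numerator = likelihoods[index] * prior
    total = sum(lik * prior for lik in likelihoods)
    return numerator / total


result = posterior(0, likelihoods, prior)
print(result)  # 15/38
```

Using `Fraction` rather than floats keeps every intermediate value exact, so the printed result matches the hand calculation: 3/24 divided by 3/24 + 3/24 + 1/15 = 19/60.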